Harness Blog

Featured Blogs

Today, Harness is announcing the General Availability of Artifact Registry, a milestone that marks more than a new product release. It represents a deliberate shift in how artifact management should work in secure software delivery.

For years, teams have accepted a strange reality: you build in one system, deploy in another, and manage artifacts somewhere else entirely. CI/CD pipelines run in one place, artifacts live in a third-party registry, and security scans happen downstream. When developers need to publish, pull, or debug an artifact, they leave their pipelines, log into another tool, and return to finish their work.

It works, but it’s fragmented, expensive, and increasingly difficult to govern and secure.

At Harness, we believe artifact management belongs inside the platform where software is built and delivered. That belief led to Harness Artifact Registry.

A Startup Inside Harness

Artifact Registry started as a small, high-ownership bet inside Harness, with a dedicated team and a clear thesis: artifact management shouldn’t be a separate system developers have to leave their pipelines to use. We treated it like a seed startup inside the company, moving fast with direct customer feedback and a single-threaded leader driving the vision.

The message from enterprise teams was consistent: they didn’t want to stitch together separate tools for artifact storage, open source dependency security, and vulnerability scanning.

So we built it that way.

In just over a year, Artifact Registry moved from concept to core product. What started with a single design partner expanded to double-digit enterprise customers pre-GA, the kind of pull-through adoption that signals we've identified a critical gap in the DevOps toolchain.

Today, Artifact Registry supports a broad range of container formats, package ecosystems, and AI artifacts, including Docker, Helm (OCI), Python, npm, Go, NuGet, Dart, Conda, and more, with additional support on the way. Enterprise teams are standardizing on it across CI pipelines, reducing registry sprawl, and eliminating the friction of managing diverse artifacts outside their delivery workflows.

One early enterprise customer, Drax Group, consolidated multiple container and package types into Harness Artifact Registry and achieved 100 percent adoption across teams after standardizing on the platform.

As their Head of Software Engineering put it:

"Harness is helping us achieve a single source of truth for all artifact types containerized and non-containerized alike making sure every piece of software is verified before it reaches production." - Jasper van Rijn

Why This Matters: The Registry as a Control Point

In modern DevSecOps environments, artifacts sit at the center of delivery. Builds generate them, deployments promote them, rollbacks depend on them, and governance decisions attach to them. Yet registries have traditionally operated as external storage systems, disconnected from CI/CD orchestration and policy enforcement.

That separation no longer holds up against today’s threat landscape.

Software supply chain attacks are more frequent and more sophisticated. The SolarWinds breach showed how malicious code embedded in trusted update binaries can infiltrate thousands of organizations. More recently, the Shai-Hulud 2.0 campaign compromised hundreds of npm packages and spread automatically across tens of thousands of downstream repositories.

These incidents reveal an important business reality: risk often enters early in the software lifecycle, embedded in third-party components and artifacts long before a product reaches customers.

When artifact storage, open source governance, and security scanning are managed in separate systems, oversight becomes fragmented. Controls are applied after the fact, visibility is incomplete, and teams operate in silos. The result is slower response times, higher operational costs, and increased exposure.

We saw an opportunity to simplify and strengthen this model.

By embedding artifact management directly into the Harness platform, the registry becomes a built-in control point within the delivery lifecycle. RBAC, audit logging, replication, quotas, scanning, and policy enforcement operate inside the same platform where pipelines run. Instead of stitching together siloed systems, teams manage artifacts alongside builds, deployments, and security workflows. The outcome is streamlined operations, clearer accountability, and proactive risk management applied at the earliest possible stage rather than after issues surface.

Introducing Dependency Firewall: Blocking Risk at Ingest

Security is one of the clearest examples of why registry-native governance matters.

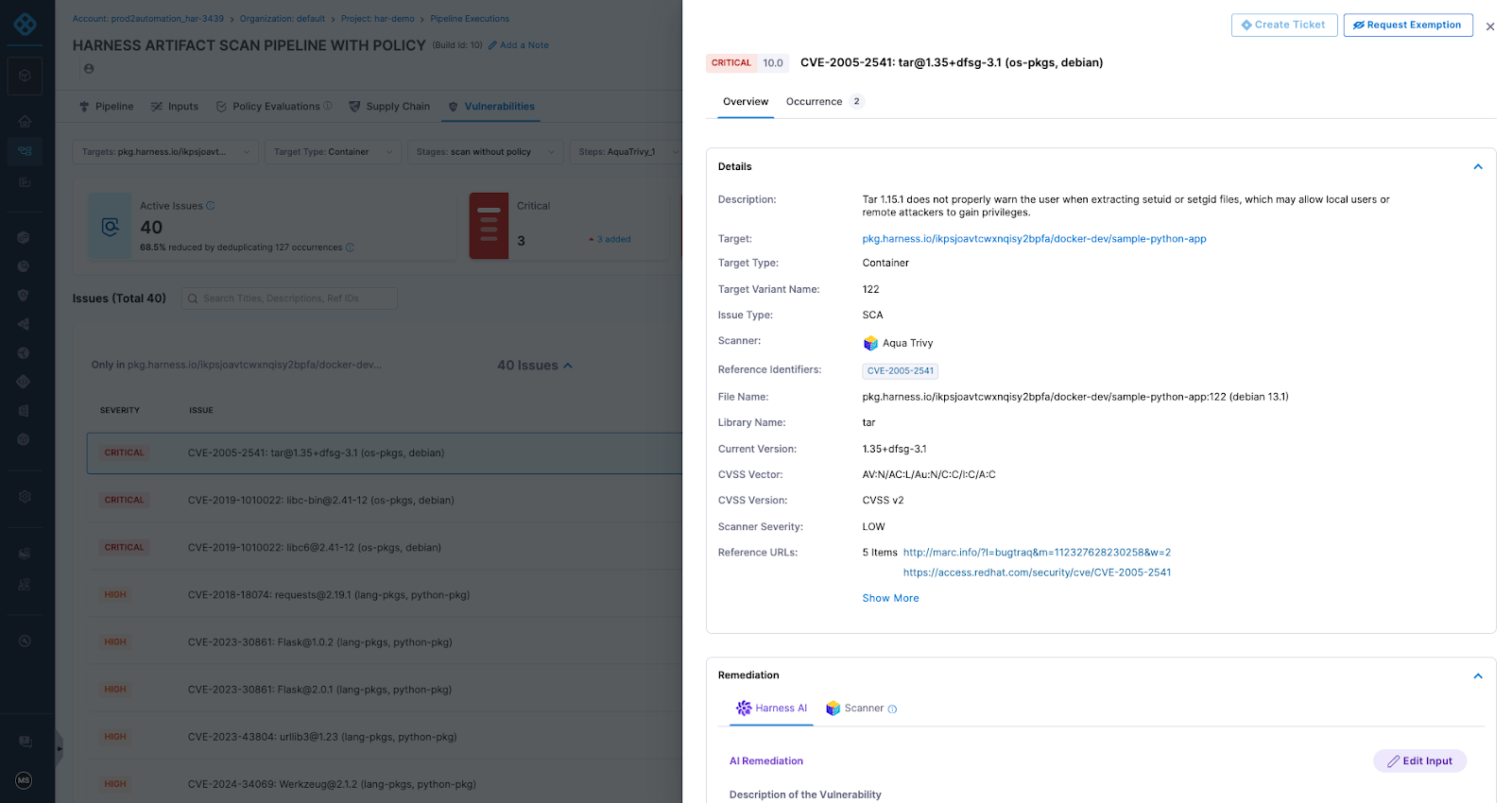

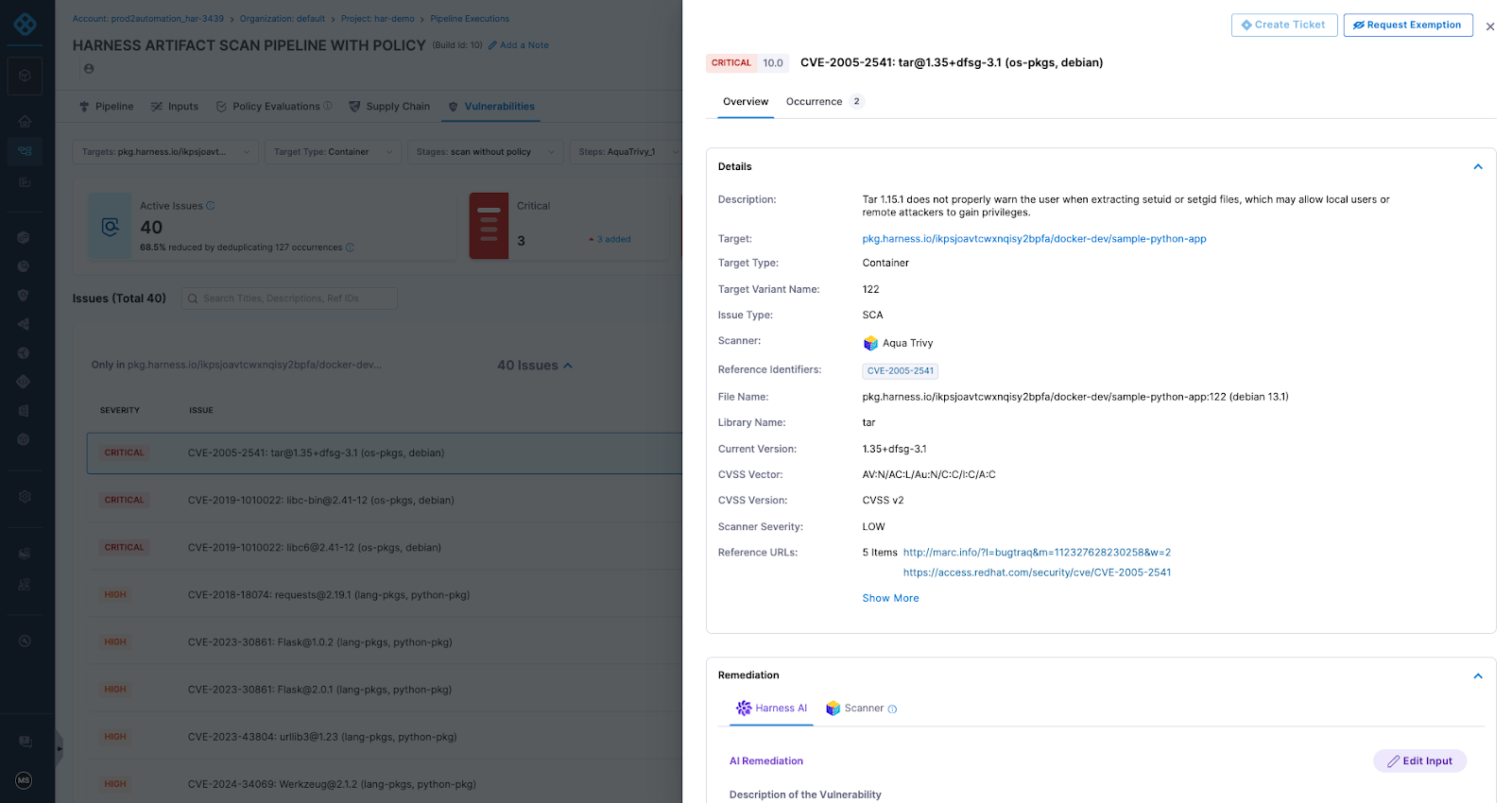

Artifact Registry delivers this through Dependency Firewall, a registry-level enforcement control applied at dependency ingest. Rather than relying on downstream CI scans after a package has already entered a build, Dependency Firewall evaluates dependency requests in real time as artifacts enter the registry. Policies can automatically block components with known CVEs, license violations, excessive severity thresholds, or untrusted upstream sources before they are cached or consumed by pipelines.
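To make the ingest-time check concrete, here is a minimal Python sketch of how a policy evaluation along these lines might look. The `PackageRequest` and `FirewallPolicy` types, their fields, and the `evaluate` function are hypothetical names invented for illustration. They are not the Artifact Registry API or its policy format.

```python
from dataclasses import dataclass, field

# Hypothetical shapes for illustration only; not the Harness policy format.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


@dataclass
class PackageRequest:
    name: str
    version: str
    license: str
    upstream: str
    cve_severities: list[str] = field(default_factory=list)


@dataclass
class FirewallPolicy:
    max_severity: str = "medium"  # highest CVE severity still allowed
    blocked_licenses: frozenset[str] = frozenset({"AGPL-3.0"})
    trusted_upstreams: frozenset[str] = frozenset({"https://registry.npmjs.org"})


def evaluate(request: PackageRequest, policy: FirewallPolicy) -> list[str]:
    """Return the reasons to block a request; an empty list means allow."""
    reasons = []
    limit = SEVERITY_RANK[policy.max_severity]
    worst = max((SEVERITY_RANK[s] for s in request.cve_severities), default=0)
    if worst > limit:
        reasons.append("CVE severity above threshold")
    if request.license in policy.blocked_licenses:
        reasons.append(f"license {request.license} not allowed")
    if request.upstream not in policy.trusted_upstreams:
        reasons.append(f"untrusted upstream {request.upstream}")
    return reasons
```

The point of the sketch is the ordering: the decision is made when the dependency is requested, so a blocked package never reaches the cache or a build.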

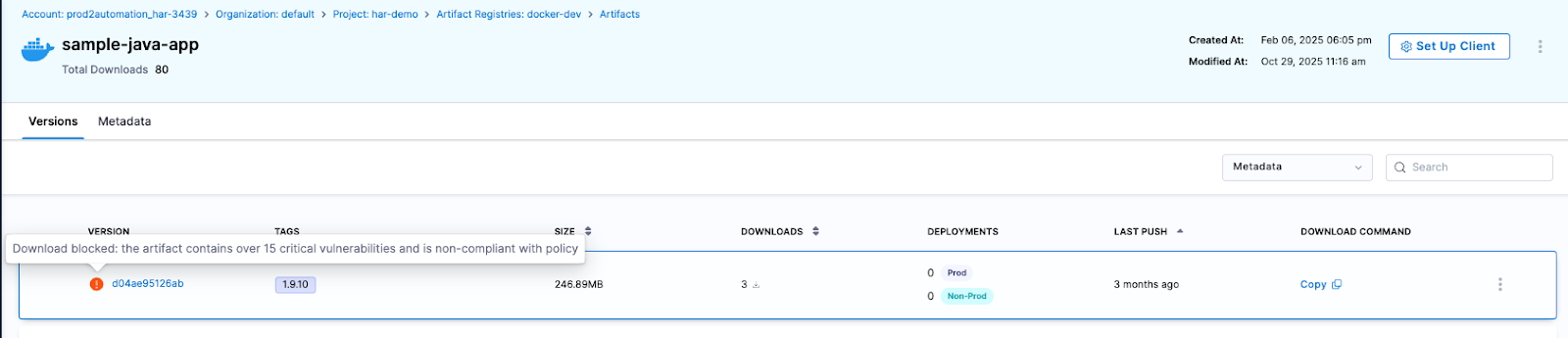

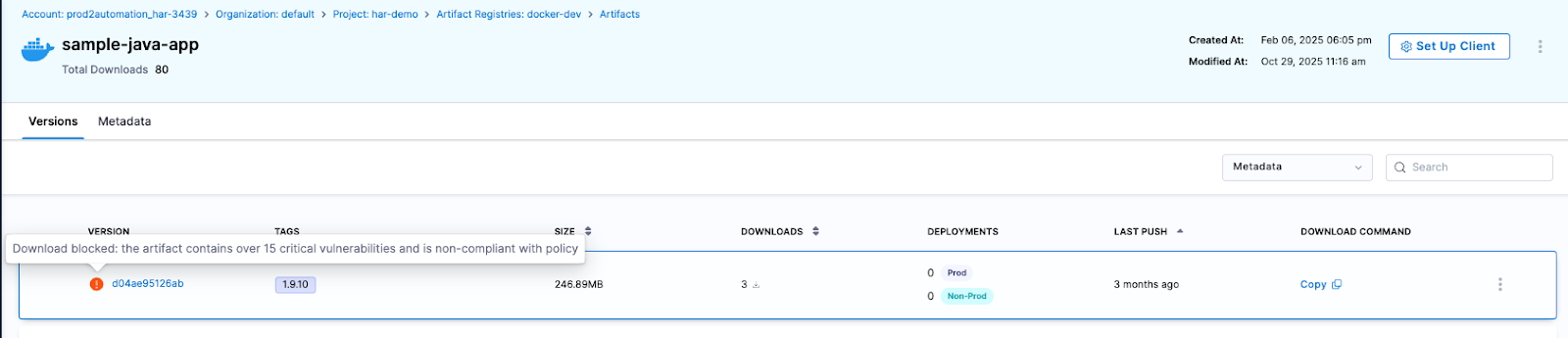

Artifact quarantine extends this model by automatically isolating artifacts that fail vulnerability or compliance checks. If an artifact does not meet defined policy requirements, it cannot be downloaded, promoted, or deployed until the issue is addressed. All quarantine and release actions are governed by role-based access controls and fully auditable, ensuring transparency and accountability. Built-in scanning powered by Aqua Trivy, combined with integrations across more than 40 security tools in Harness, feeds results directly into policy evaluation. This allows organizations to automate release or quarantine decisions in real time, reducing manual intervention while strengthening control at the artifact boundary.

The result is a registry that functions as an active supply chain control point, enforcing governance at the artifact boundary and reducing risk before it propagates downstream.

The Future of Artifact Management Is Here

General Availability signals that Artifact Registry is now a core pillar of the Harness platform. Over the past year, we’ve hardened performance, expanded artifact format support, scaled multi-region replication, and refined enterprise-grade controls. Customers are running high-throughput CI pipelines against it in production environments, and internal Harness teams rely on it daily.

We’re continuing to invest in:

- Expanded package ecosystem support

- Advanced lifecycle management, immutability, and auditing

- Deeper integration with Harness Security and the Internal Developer Portal

- AI-powered agents for OSS governance, lifecycle automation, and artifact intelligence

Modern software delivery demands clear control over how software is built, secured, and distributed. As supply chain threats increase and delivery velocity accelerates, organizations need earlier visibility and enforcement without introducing new friction or operational complexity.

We invite you to sign up for a demo and see firsthand how Harness Artifact Registry delivers high-performance artifact distribution with built-in security and governance at scale.

TLDR: We have rolled out Project Movement, the ability to transfer entire Harness projects between Organizations with a few clicks. It's been our most-requested Platform feature for a reason. Your pipelines, configurations, and the rest come along for the ride.

What are Projects and Organizations?

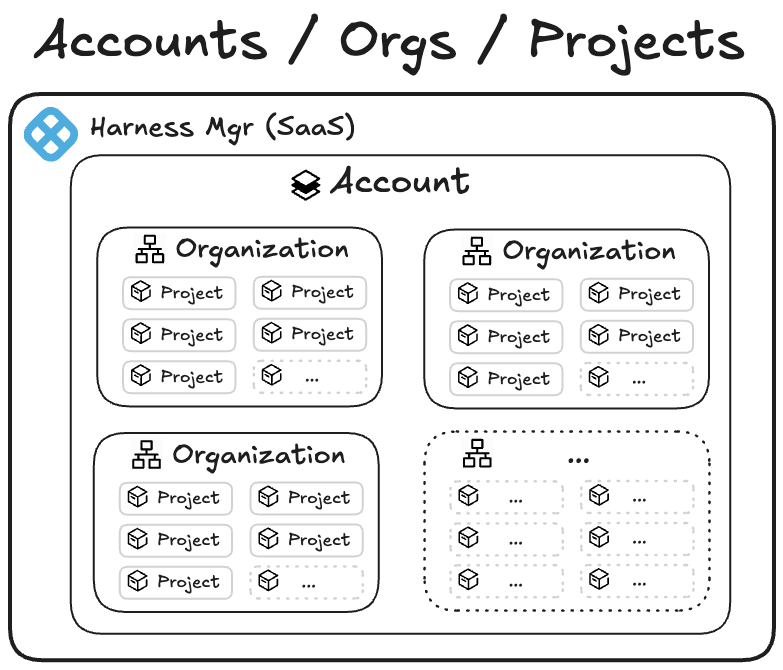

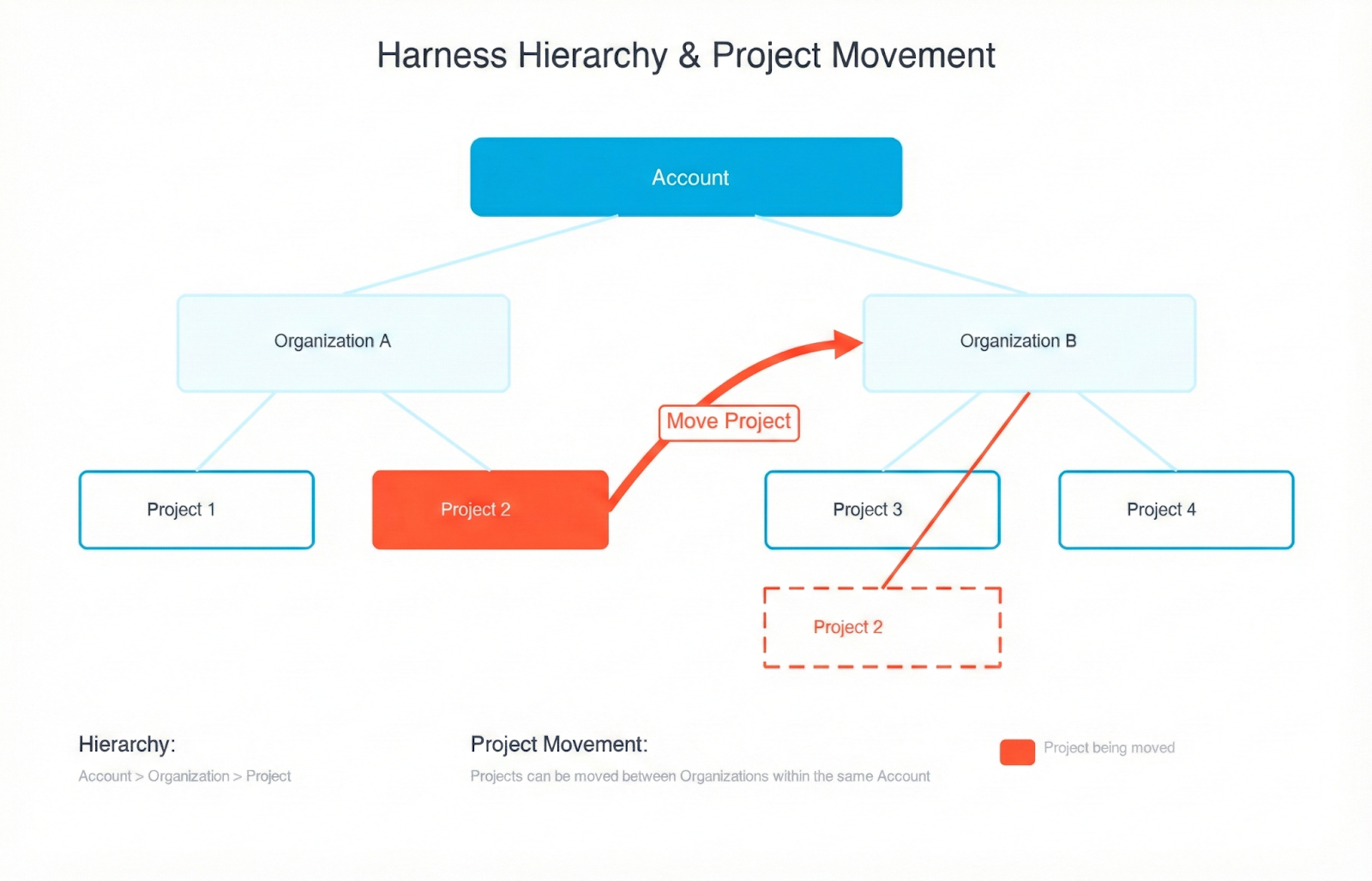

In Harness, an Account is the highest-scoped entity. It contains organizations and projects. An organization is the space that represents your business unit or team and helps you manage users, access, and shared settings in one place. Within an organization, a project is where your teams do their day-to-day work, such as building pipelines, managing services, and tracking deployments. Projects keep related resources grouped together, making it easier to collaborate, control permissions, and scale across teams.

Historically, projects could not move between organizations, and that restriction had a purpose: strong isolation and predictability. Each organization served as a rigid boundary for security, RBAC, governance, billing, and integrations. Customers could trust that once a project was created within an org, all its permissions, secrets, connectors, audit history, and compliance settings would remain stable and wouldn’t be accidentally inherited or lost during a move. This reduced the risk of misconfiguration, privilege escalation, broken pipelines, or compliance violations, especially for large enterprises with multiple business units or regulated environments.

However, imagine this scenario: last quarter, your company reorganized around customer segments. This quarter, two teams merged. Next quarter, who knows—but your software delivery shouldn't grind to a halt every time someone redraws the org chart.

We've heard this story from dozens of customers: the experimental project that became critical, the team consolidation that changed ownership, the restructure that reshuffled which team owns what. And until now, moving a Harness project from one Organization to another meant one thing: start from scratch.

Not anymore.

With Project Movement, you can transfer entire Harness projects between Organizations with a few clicks. It's been our most-requested Platform feature for a reason. Your pipelines, configurations, and the rest come along for the ride.

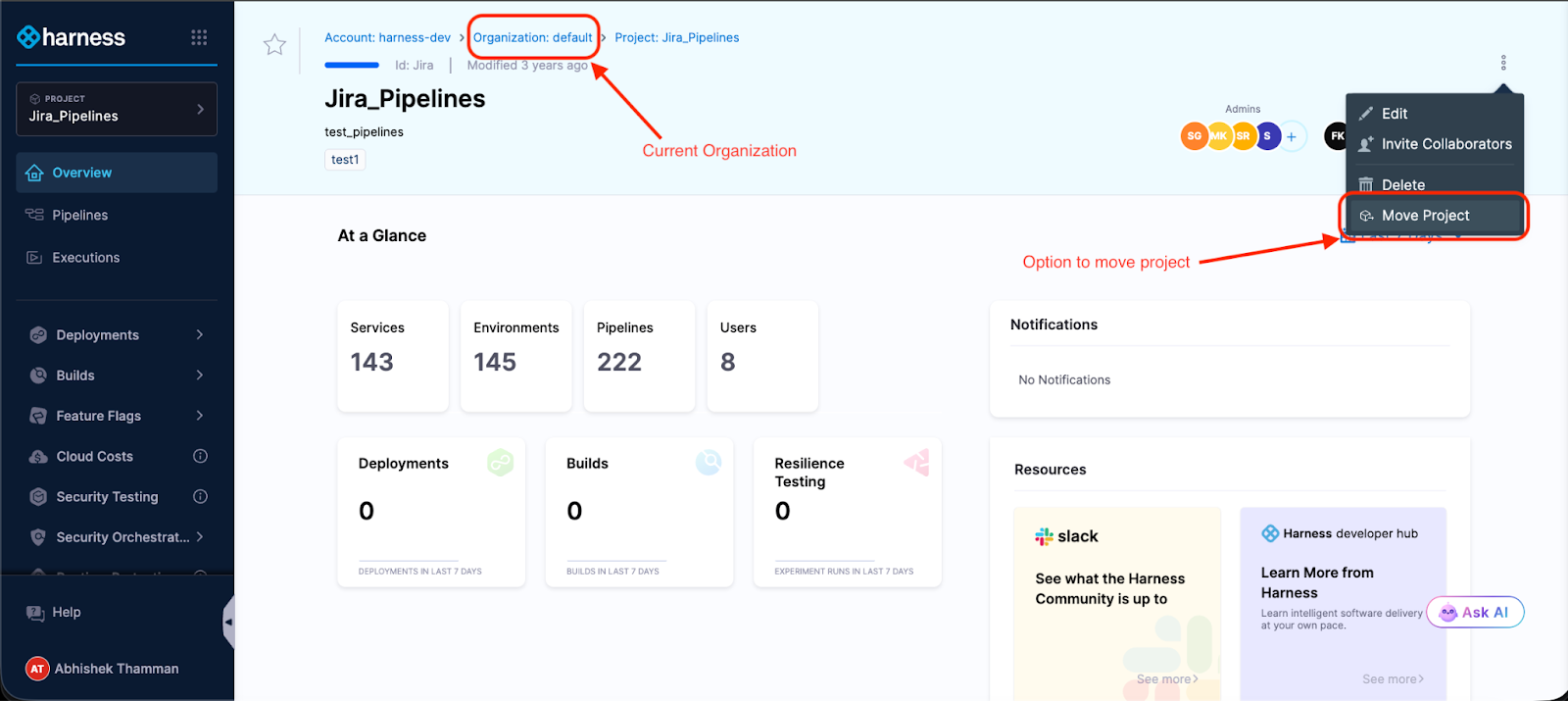

What Moving a Project Actually Feels Like

You're looking at 47 pipelines, 200+ deployment executions, a dozen services, and countless hours of configuration work. The company's org chart says this project now belongs to a different team. Deep breath.

Click the menu. Select "Move Project." Pick your destination Organization.

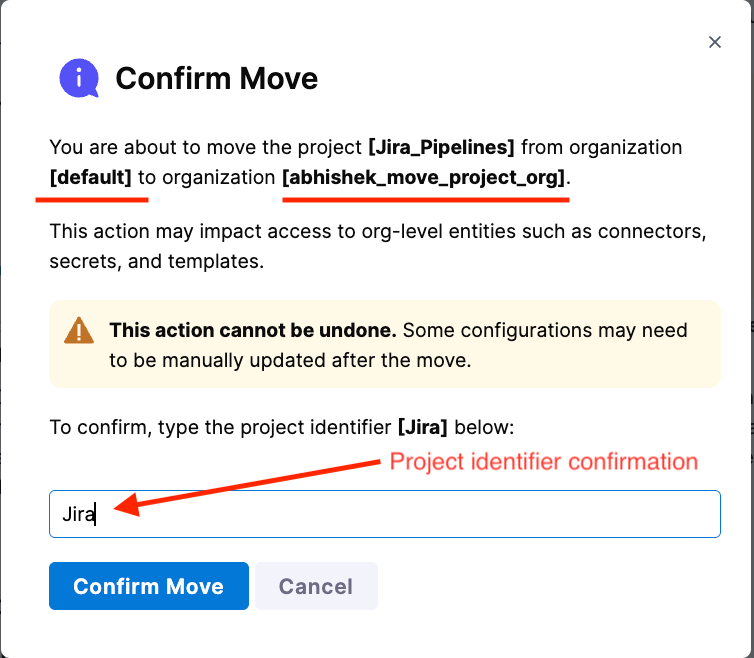

The modal shows you what might break—Organization-level connectors, secrets, and templates that the project references. Not an exhaustive list, but enough to know what you're getting into.

Type the project identifier to confirm.

Done.

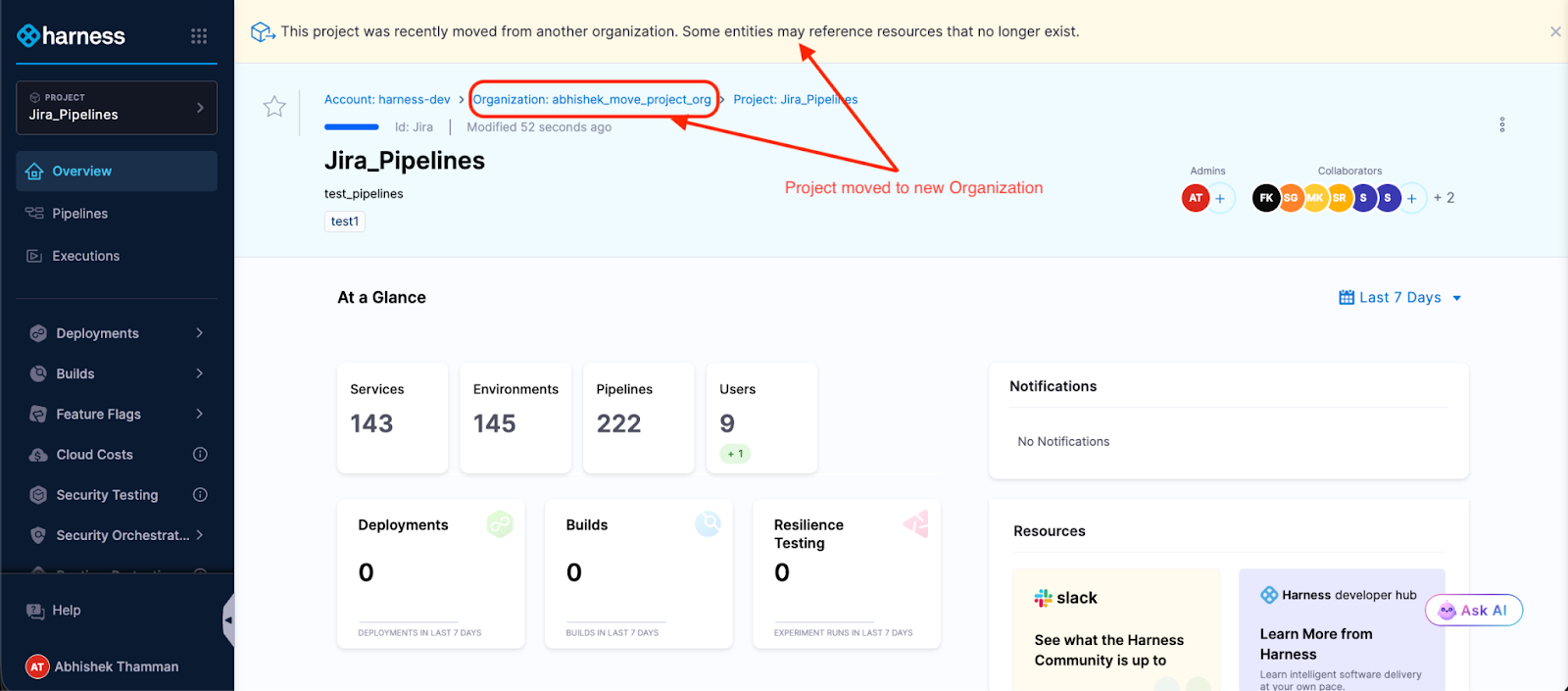

Your project is now in its new Organization. Pipelines intact. Execution history preserved. Project-scoped templates, secrets, and connectors are all right where you left them. The access control migration happens in the background while you grab coffee.

What used to take days of YAML wrangling and "did we remember to migrate X?" conversations now takes minutes.

To summarize, moving a Harness project between organizations takes five steps:

1. Open the project menu and select “Move Project.”

2. Choose the destination organization.

3. Review impacted organization-level resources.

4. Confirm by typing the project identifier.

5. Monitor access control migration while pipelines remain intact.

What Moves with Projects

Here's what transfers automatically when you move a project:

- Platform - Your pipelines with their full execution history, all triggers and input sets, services and environments, project-scoped connectors, secrets, templates, delegates, and webhooks.

- Continuous Delivery (CD) - All your deployment workflows, service definitions, and infrastructure configurations. If you built it for continuous delivery, it moves.

- Continuous Integration (CI) - Build configurations, test intelligence settings, and the whole CI setup.

- Internal Developer Portal (IDP) - Service catalog entries and scorecards.

- Security Test Orchestration (STO) - Scan configurations and security testing workflows.

- Code Repository - Repository settings, configurations, and more.

- Database DevOps - Database schema management configurations and more.

Access control follows along too: role bindings, service accounts, user groups, and resource groups. This happens asynchronously, so the move doesn't block, but you can track progress in real-time.

The project becomes immediately usable in its new Organization. No downtime, no placeholder period, no "check back tomorrow."

What Doesn’t Move with Projects?

Let's talk about what happens to Organization-level resources and where you'll spend some time post-move.

Organization-scoped resources don't move—and that makes sense when you think about it. That GitHub connector at the Organization level? It's shared across multiple projects. We can't just yank it to the new Organization. So after moving, you'll update references that pointed to:

- Organization-level connectors (GitHub, Docker Hub, cloud providers)

- Organization-level secrets (API keys, credentials)

- Organization-level templates (shared pipeline components)

- User groups inherited from the source Organization

To update each reference, click the resource field, select a replacement from the new Organization or create a new one, and save. Rinse and repeat. The pre-move and post-move guide walks through the process.
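Before a move, it can help to list which Organization-level resources a pipeline points at. The Python sketch below scans pipeline YAML text for identifiers with an `org.` prefix. It assumes Organization-scoped references are written that way (for example `connectorRef: org.github_main`), which you should confirm against the documentation for your setup. The sample pipeline and every identifier in it are made up.

```python
import re

# Assumption: org-scoped references carry an "org." prefix in pipeline YAML.
# The lookbehind avoids matching inside longer dotted names such as "my.org.x".
ORG_REF = re.compile(r"(?<![\w.])org\.[A-Za-z_]\w*")


def find_org_references(pipeline_yaml: str) -> list[str]:
    """Return the distinct org-scoped identifiers found in the text, sorted."""
    return sorted(set(ORG_REF.findall(pipeline_yaml)))


# Made-up pipeline fragment, for illustration only.
sample = """\
pipeline:
  identifier: build_and_deploy
  properties:
    ci:
      codebase:
        connectorRef: org.github_main
  stages:
    - stage:
        spec:
          infrastructure:
            connectorRef: k8s_project_connector
          variables:
            - name: token
              value: <+secrets.getValue("org.registry_token")>
"""

for ref in find_org_references(sample):
    print(ref)
```

A text scan like this is only a rough inventory. The modal shown during the move and the post-move banner remain the places to check what actually needs attention.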

A few CD features aren't supported yet but are on the roadmap: GitOps entities and Continuous Verification don't move with the project. If your pipelines use these, you'll need to manually reconfigure them in the new Organization after the move. The documentation has specific guidance on supported modules and entities.

Security Boundaries Stay Intact

The Harness hierarchical model, Account > Organization > Project, exists for strong isolation and predictable security boundaries. Moving projects doesn't compromise that architecture. Here's why: Organization-level resources stay put. Your GitHub connectors, cloud credentials, and secrets remain scoped to their Organizations. When a project moves, it doesn't drag sensitive org-wide resources along; it references new ones in the destination. This means your security boundaries stay intact, RBAC policies remain predictable, and teams can't accidentally leak credentials across organizational boundaries. The project moves. The isolation doesn't.

An Example of Moving Projects

A platform engineering team had a familiar problem: three different product teams each had their own Harness Organization with isolated projects. Made sense when the teams were autonomous. But as the products matured and started sharing infrastructure, the separation became friction.

The platform team wanted to consolidate everything under a single "Platform Services" Organization for consistent governance and easier management. Before project movement, that meant weeks of work—export configurations, recreate pipelines, remap every connector and secret, test everything, hope nothing broke.

With project movement, they knocked it out in an afternoon. Move the projects. Update references to Organization-level resources. Standardize secrets across the consolidated projects. Test a few deployments. Done.

The product teams kept shipping. The platform team got its unified structure. Nobody lost weeks to migration work.

Try It (With Smart Guardrails)

Moving a project requires two permissions: Move on the source project and Create Project in the destination Organization. Both sides of the transfer need to agree—you can't accidentally move critical projects out of an Organization or surprise a team with unwanted projects.

When you trigger a move, you'll type the project identifier to confirm.

A banner sticks around for 7 days post-move, reminding you to check for broken references. Use that week to methodically verify everything, especially if you're moving a production project.

Our recommendation: Try it with a non-production project first. Get a feel for what moves smoothly and what needs attention. Then tackle the production stuff with confidence.

Why This Took Time (A Peek Behind the Scenes)

On the surface, moving a project sounds simple: just change where it lives, and you’re done. But in reality, a Harness project is a deeply connected system.

Your pipelines, execution history, connectors, secrets, and audit logs are all tied together behind the scenes. Historically, Harness identified these components using their specific "address" in the hierarchy. That meant if a project moved, every connected entity would need its address updated across multiple services at the same time. Doing that safely without breaking history or runtime behavior was incredibly risky.

To solve this, we re-architected the foundation.

We stopped tying components to their location and introduced stable internal identifiers. Now, every entity has a unique ID that travels with it, regardless of where it lives. When you move a project, we simply update its parent relationship. The thousands of connected components inside don’t even realize they’ve moved.

This architectural shift is what allows us to preserve your execution history and audit trails while keeping project moves fast and reliable.
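The idea of stable identifiers can be illustrated with a small Python sketch. This is a toy model, not the Harness data model: the `Entity` and `Registry` classes and all entity names are hypothetical. It shows why a move becomes a single parent update once children are keyed by an ID rather than by their path in the hierarchy.

```python
from dataclasses import dataclass
from typing import Optional
import uuid


@dataclass
class Entity:
    uid: str                   # stable identifier; never changes after creation
    name: str
    parent_uid: Optional[str]  # the only field a move has to touch


class Registry:
    """Toy hierarchy store keyed by stable IDs (illustrative only)."""

    def __init__(self) -> None:
        self.entities: dict[str, Entity] = {}

    def add(self, name: str, parent: Optional[Entity] = None) -> Entity:
        entity = Entity(uuid.uuid4().hex, name, parent.uid if parent else None)
        self.entities[entity.uid] = entity
        return entity

    def path(self, entity: Entity) -> str:
        # The "address" is derived on demand by walking up the parents.
        parts = []
        current: Optional[Entity] = entity
        while current is not None:
            parts.append(current.name)
            current = self.entities.get(current.parent_uid) if current.parent_uid else None
        return "/".join(reversed(parts))

    def move(self, entity: Entity, new_parent: Entity) -> None:
        # Children are untouched: they point at entity.uid, which is unchanged.
        entity.parent_uid = new_parent.uid


reg = Registry()
account = reg.add("acme")
retail = reg.add("retail", account)
platform = reg.add("platform", account)
project = reg.add("checkout", retail)
pipeline = reg.add("deploy", project)

reg.move(project, platform)
print(reg.path(pipeline))  # acme/platform/checkout/deploy
```

Because the path is computed rather than stored, nothing inside the project needs rewriting when its parent changes.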

What's Coming

This is version one. The foundations are solid: projects move, access control migrates, pipelines keep running. But we're not done.

We're listening. If you use this feature and hit rough edges, we want to hear about it.

The Bottom Line

Organizational change is inevitable. The weeks of cleanup work afterward don't have to be.

Project Movement means your Harness setup can adapt as fast as your org chart does. When teams move, when projects change ownership, when you consolidate for efficiency, your software delivery follows without the migration overhead.

No more lost history. No more recreated pipelines. No more week-long "let's just rebuild everything in the new Organization" projects.

Ready to try it? Check out the step-by-step guide or jump into your Harness account and look for "Move Project" in the project menu.

At Harness, our story has always been about change — helping teams ship faster, deploy safer, and control the blast radius of every modification to production. Deployments, feature flags, pipelines, and governance are all expressions of how organizations evolve their software.

Today, the pace of change is accelerating. As AI-assisted development becomes the norm, more code reaches production faster, often without a clear link to the engineer who wrote it. Now, Day 2 isn’t just supporting the unknown – it’s supporting software shaped by changes that may not have a clear human owner.

And as every SRE and on-call engineer knows, even rigorous change hygiene doesn’t prevent incidents because real-world systems don’t fail neatly. They fail under load, at the edges, in the unpredictable ways software meets traffic patterns, caches, databases, user behavior, and everything in between.

When that happens, teams fall back on what they’ve always relied on: Human conversation and deep understanding of what changed.

That’s why today we’re excited to introduce the Harness Human-Aware Change Agent — the first AI system designed to treat human insight as operational data and use it to drive automated, change-centric investigation during incidents.

Not transcription plus RCA. One unified intelligence engine grounded in how incidents actually unfold.

📞 A Quick Look at Harness AI SRE

The Human-Aware Change Agent is part of Harness AI SRE — a unified incident response system built to help teams resolve incidents faster without scaling headcount. AI SRE brings together the critical parts of response: capturing context, coordinating action, and operationalizing investigation.

At the center is the AI Scribe, because the earliest and most important clues in an incident often surface in conversation before they appear in dashboards. Scribe listens across an organization’s tools with awareness of the incident itself – filtering out unrelated chatter and capturing only the decisions, actions, and timestamps that matter. The challenge isn’t producing a transcript; it’s isolating the human signals responders actually use.

Those signals feed directly into the Human-Aware Change Agent, which drives change-centric investigation during incidents.

And once that context exists, AI SRE helps teams act on it: Automation Runbooks standardize first response and remediation, while On-Call and Escalations ensure incidents reach the right owner immediately.

AI SRE also fits into the tools teams already run — with native integrations and flexible webhooks that connect observability, alerting, ticketing, and chat across systems like Datadog, PagerDuty, Jira, ServiceNow, Slack, and Teams.

🌐 Why We Built a Human-Aware Change Agent

Most AI approaches to SRE assume incidents can be solved entirely through machine signals — logs, metrics, traces, dashboards, anomaly detectors. But if you’ve ever been on an incident bridge, you know that’s not how reality works.

Some of the most important clues come from humans:

- “The customer said the checkout button froze right after they updated their cart.”

- “Service X felt slow an hour before this started.”

- “Didn’t we flip a flag for the recommender earlier today?”

- “This only happens in the US-East cluster.”

These early observations shape the investigation long before anyone pulls up a dashboard.

Yet most AI tools never hear any of that.

The Harness Human-Aware Change Agent changes this. It listens to the same conversations your engineers are having — in Slack, Teams, Zoom bridges — and transforms the human story of the incident into actionable intelligence that guides automated change investigation.

It is the first AI system that understands both what your team is saying and what your systems have changed — and connects them in real time.

🔍 How the Human-Aware Change Agent Works

1. It listens and understands human context.

Using AI Scribe as its conversational interface, the agent captures operational signals from a team’s natural dialogue – impacted services, dependencies, customer-reported symptoms, emerging theories or contradictions, and key sequence-of-events clues (“right before…”).

The value is in recognizing human-discovered clues, and converting them into signals that guide next steps.

2. It investigates changes based on those clues.

The agent then uses these human signals to direct investigation across your full change graph including deployments, feature flags or config changes, infrastructure updates, and ITSM change records – triangulating what engineers are seeing with what is actually changing in your production environment.

3. It surfaces evidence-backed hypotheses.

Instead of throwing guesses at the team, it produces clear, explainable insights:

“A deployment to checkout-service completed 12 minutes before the incident began. That deploy introduced a new retry configuration for the payment adapter. Immediately afterward, request latency started climbing and downstream timeouts increased.”

Each hypothesis comes with supporting data and reasoning, allowing teams to quickly validate or discard theories.
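As a rough illustration of the correlation step, the Python sketch below filters a list of change events down to those that touched services responders mentioned and that finished shortly before the incident began. It is a simplified stand-in, not how the agent is implemented: the `ChangeEvent` type, the `candidate_changes` function, and the two-hour lookback window are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class ChangeEvent:
    kind: str            # e.g. "deployment", "feature_flag", "config"
    service: str
    finished_at: datetime
    summary: str


def candidate_changes(
    changes: list[ChangeEvent],
    mentioned_services: set[str],
    incident_start: datetime,
    lookback: timedelta = timedelta(hours=2),
) -> list[ChangeEvent]:
    """Changes on mentioned services inside the lookback window, most recent first."""
    window_start = incident_start - lookback
    hits = [
        c
        for c in changes
        if c.service in mentioned_services
        and window_start <= c.finished_at <= incident_start
    ]
    # The smaller the gap to the incident, the earlier the change is listed.
    return sorted(hits, key=lambda c: incident_start - c.finished_at)
```

Here `mentioned_services` plays the role of the human signal: it narrows the search to what people on the bridge are actually talking about.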

4. It helps teams act faster and safer.

By uniting human observations with machine-driven change intelligence, the agent dramatically shortens the path from:

What are we seeing? → What changed? → What should we do?

Teams quickly gain clarity on where to focus, what’s most suspicious, and which rollback or mitigation actions exist and are safest.

🌅 A New Era of Incident Response

With this release, Harness is redefining what AI for incident management looks like.

Not a detached assistant. Not a dashboard summarizer. But a teammate that understands what responders are saying, investigates what systems have changed, connects the dots, and helps teams get to truth faster.

Because the future of incident response isn’t AI working alone. It’s AI working alongside engineers — understanding humans and systems in equal measure.

Book a demo of Harness AI SRE to see how human insight and change intelligence come together during real incidents.

Latest Blogs

Knowledge Graphs: The Backbone of AI-First Software Delivery

---

Key Takeaways

- AI-generated code fails without a real delivery context

- Knowledge graphs turn fragmented DevSecOps data into operational truth

- Platform teams must move from AI-assisted to AI-operational DevOps

- Overmodeling kills ROI faster than missing data

- Context must be fresh, permissioned, and use-case driven

---

AI can generate code in seconds. It still can’t ship software safely.

That gap isn’t about model quality or prompt engineering. It’s about context, and most software organizations don’t have a system that accurately reflects how pipelines, services, environments, policies, and teams actually relate to each other.

Without that context, AI doesn’t automate delivery. It amplifies risk.

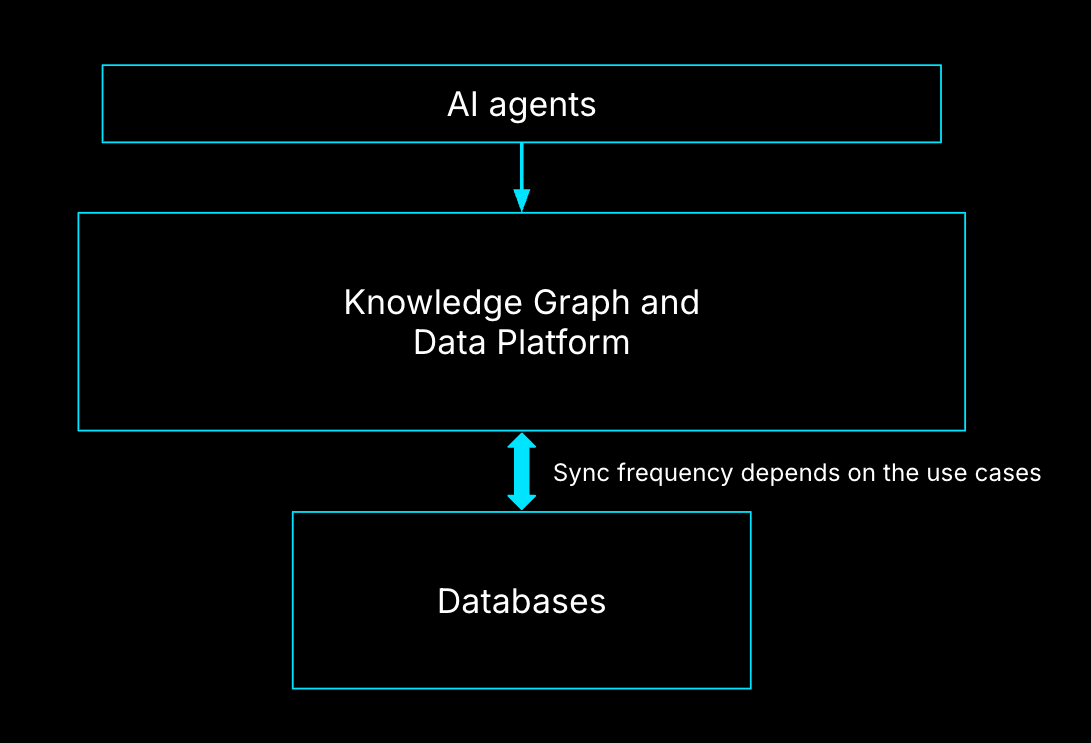

I am responsible for building the Knowledge Graph that powers Harness AI, and in my day-to-day work on AI infrastructure and data platforms I see the same theme recur: AI-first delivery fails not because of intelligence, but because of fragmentation.

AI Is Not the Bottleneck, Context Is

Modern engineering organizations already generate more data than any human can reason about:

- CI pipelines

- Deployment workflows

- Cloud environments

- Security policies

- Cost signals

- Incident data

Each system works. The problem is that none of them agree on what the system actually is.

When something breaks, we don’t query systems. We page people. That’s the clearest signal you’ve hit the context bottleneck. When your organization depends on a few humans to resolve incidents, you don’t have a tooling problem. You have a context problem.

From AI-Assisted DevOps to AI-Operational DevOps

Most teams today operate in AI-assisted DevOps:

- AI helps write code

- AI generates pipelines

- AI summarizes logs

That’s helpful, but shallow.

AI-operational DevOps is different. Here, AI doesn’t just assist tasks. It understands how software actually moves from commit to production, including constraints, dependencies, and governance.

The difference is a platform problem. Without a shared context layer, AI remains a collection of point optimizations. With one, it becomes an operator.

What “Context” Actually Means in Software Delivery

Context is not dashboards. It’s not a data lake. And it’s definitely not another CMDB.

In practice, context means entities and relationships.

Core entities that matter

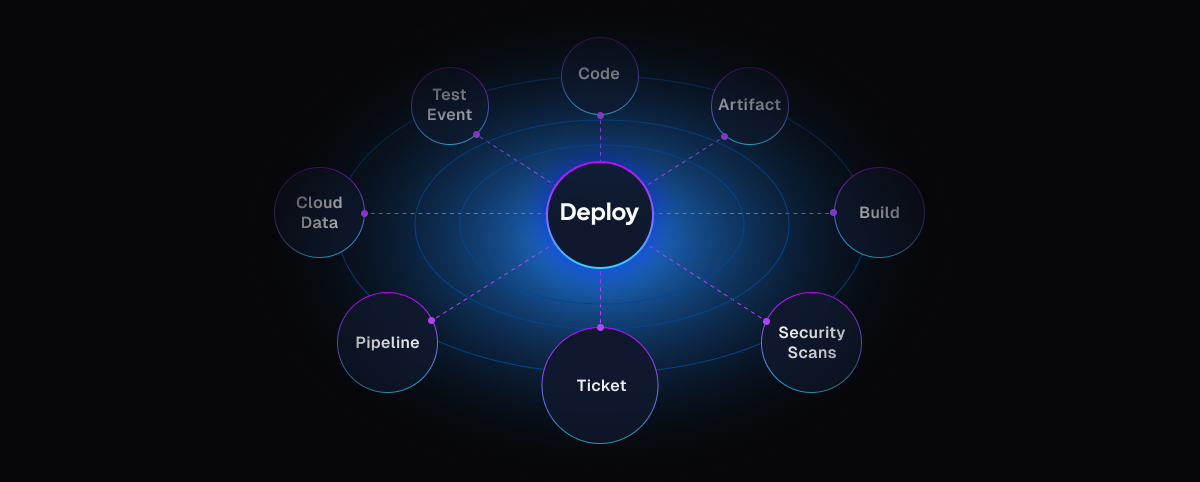

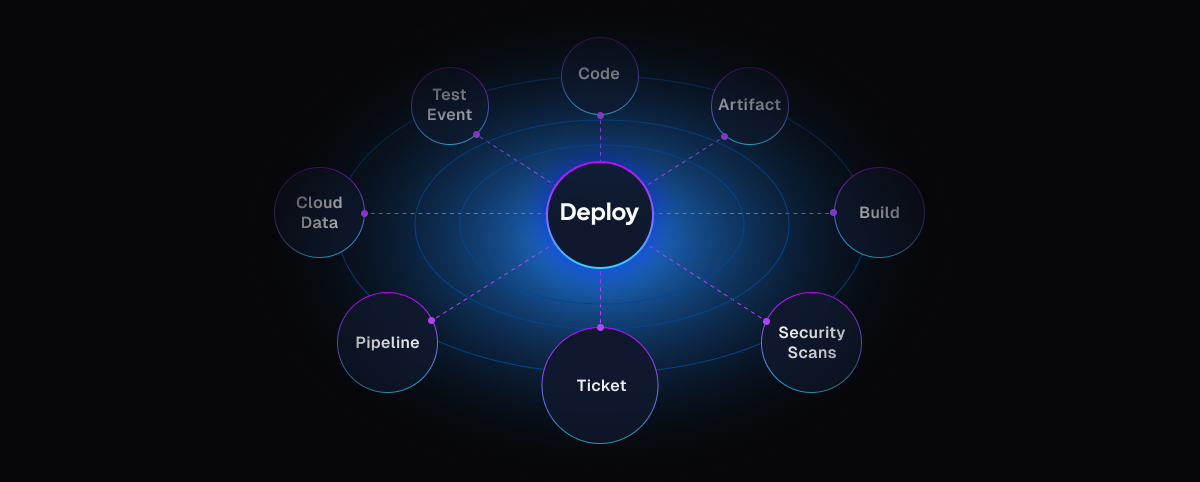

In DevSecOps environments, the most critical entities are:

- Pipelines (the workflow backbone)

- Services and artifacts

- Environments and clusters

- Policies and approvals

- Identities and permissions

Pipelines are often the natural center — not because they’re special, but because they express intent.

Relationships are where value emerges

A pipeline alone isn’t context.

A pipeline links to:

- the cluster it deploys to

- the policies it must pass

- the identity running it

- the services it affects

That's the operational truth.

This is why knowledge graphs matter. They don’t store more data; they preserve meaning.
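A few lines of Python are enough to show what "entities and relationships" means in practice. The `KnowledgeGraph` class, the relation names, and the entity identifiers below are invented for this sketch. A production graph would sit on a real graph store with a much richer schema.

```python
from collections import defaultdict
from typing import Optional


class KnowledgeGraph:
    """Minimal directed, labeled graph: (source) -[relation]-> (target)."""

    def __init__(self) -> None:
        self._edges: dict[str, list[tuple[str, str]]] = defaultdict(list)

    def relate(self, source: str, relation: str, target: str) -> None:
        self._edges[source].append((relation, target))

    def neighbors(self, source: str, relation: Optional[str] = None) -> list[str]:
        # .get avoids creating empty entries for unknown nodes.
        return [
            target
            for rel, target in self._edges.get(source, [])
            if relation is None or rel == relation
        ]


graph = KnowledgeGraph()
graph.relate("pipeline:deploy-checkout", "deploys_to", "cluster:prod-us-east")
graph.relate("pipeline:deploy-checkout", "must_pass", "policy:require-approval")
graph.relate("pipeline:deploy-checkout", "runs_as", "identity:svc-deployer")
graph.relate("pipeline:deploy-checkout", "affects", "service:checkout")

print(graph.neighbors("pipeline:deploy-checkout", "must_pass"))
```

The data itself is trivial. What matters is that a question like "which policies gate this pipeline?" becomes a lookup instead of a conversation.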

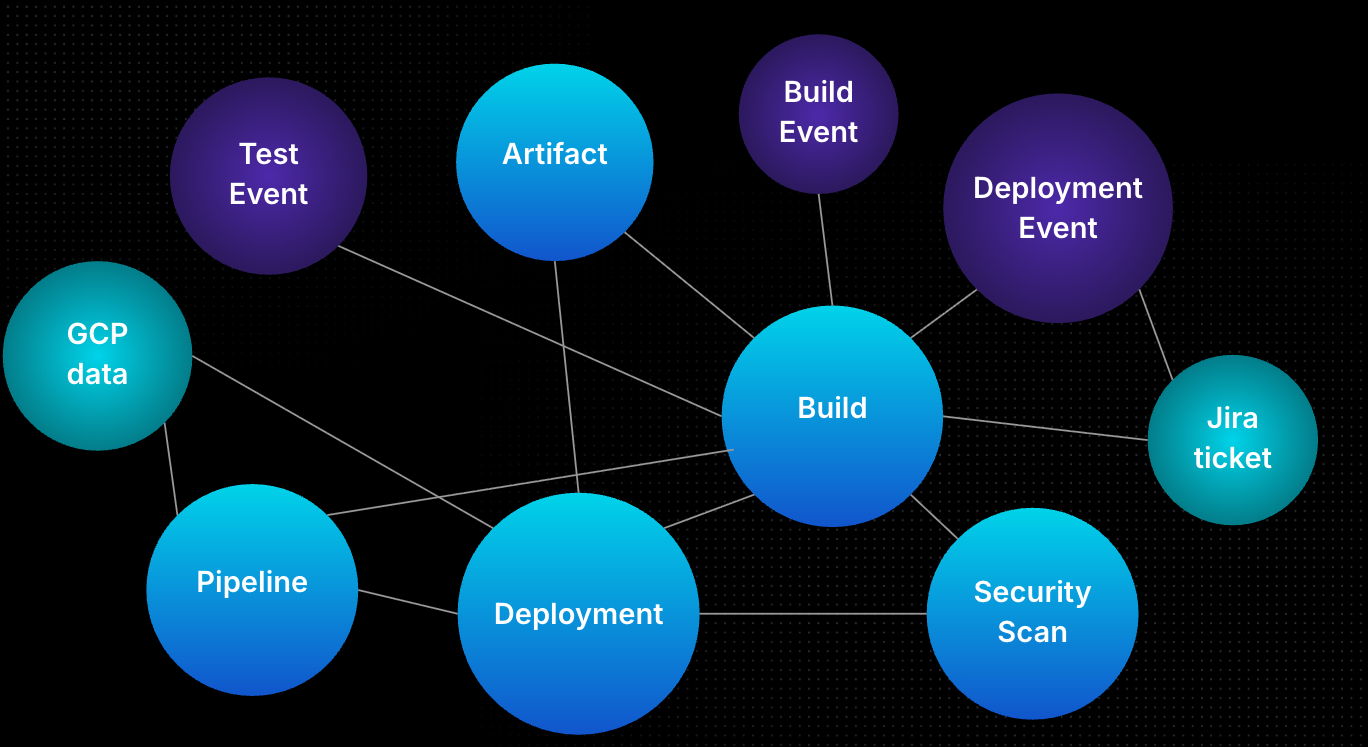

Why Knowledge Graphs Fail in Practice

I’ve seen three failure modes repeat across organizations:

- Overmodeling: Teams model everything before solving anything. The graph becomes academic and unused.

- Undermodeling: Teams skip key relationships, then wonder why AI gives shallow or incorrect answers.

- Stale context: Perfectly modeled data that’s a week old is useless during an outage.

A knowledge graph only works when it’s use-case driven, minimal, and fresh.

The Minimum Viable Knowledge Graph

The fastest way to see value is not breadth, it’s focus. Start with one use case that cannot be solved by a single system.

A strong starting point:

- Root cause analysis for failed pipelines

To support that, you need:

- Git metadata

- CI/CD execution state

- Environment and access data

That’s often fewer than 10 entities. Everything else is enrichment, not day one requirements.

Freshness, Not Completeness, Determines Value

AI agents don’t need perfect context. They need current context.

For delivery workflows, near real-time synchronization is often mandatory. When a deployment fails, an engineer doesn’t want last month’s answer; they want why it failed now. This is why the semantic layer matters. AI agents should interact with meaning, not raw tables.

Guardrails by Default: Policies, RBAC, and Trust

AI agents must be treated as extensions of humans, not superusers.

That means:

- RBAC applies to agents exactly as it does to people

- Policies define what context can be surfaced

- Least privilege is non-negotiable

At Harness, Policy as Code and native policy agents ensure AI can’t bypass governance — even when it’s acting autonomously.
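One way to picture "agents as extensions of humans" is to intersect permissions before any context is surfaced. The Python sketch below is illustrative only: `ContextFact`, `effective_permissions`, `visible_facts`, and the permission strings are hypothetical and do not reflect the Harness RBAC model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextFact:
    text: str
    required_permission: str


def effective_permissions(user_permissions: set[str], agent_scope: set[str]) -> set[str]:
    """An agent never holds more than the human it acts for (least privilege)."""
    return user_permissions & agent_scope


def visible_facts(facts: list[ContextFact], permissions: set[str]) -> list[ContextFact]:
    """Surface only the facts the effective permissions allow."""
    return [f for f in facts if f.required_permission in permissions]


facts = [
    ContextFact("deploy-checkout last failed at the approval step", "pipeline:view"),
    ContextFact("registry_token was rotated yesterday", "secret:view"),
]
perms = effective_permissions(
    user_permissions={"pipeline:view", "secret:view"},
    agent_scope={"pipeline:view", "environment:view"},
)
for fact in visible_facts(facts, perms):
    print(fact.text)
```

Using an intersection rather than a union means widening the agent's scope can never grant it something the user lacks.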

How to Measure Whether Context Is Working

You don’t measure a knowledge graph by node count. You measure it by outcomes.

Four metrics matter:

- Answer quality (often validated using a secondary model)

- Human validation (does this reduce toil?)

- Evaluation over time (across models and versions)

- Cost efficiency (context without ROI is technical debt)

If context doesn’t improve decisions, it’s noise.

A Real AI-Driven Deployment Example

Imagine a developer says, in natural language: “Deploy this service to QA and production.”

Behind the scenes, an AI agent:

- Identifies the service and repo

- Generates a pipeline aligned with org standards

- Inserts approvals, security scans, and policies

- Validates access to target environments

If the pipeline fails, the same graph enables automated remediation:

- Was access revoked?

- Did a policy change?

- Did the artifact differ?

That’s not automation. That’s operational reasoning.
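Those three questions map naturally onto a comparison between the last successful run and the failed one. The Python sketch below assumes each run is summarized as a plain dictionary with `access`, `policy_version`, and `artifact_digest` keys. These keys and the `explain_failure` function are made up for illustration.

```python
def explain_failure(last_good: dict, failed: dict) -> list[str]:
    """Compare two run snapshots and report what changed between them."""
    findings = []

    # Was access revoked?
    revoked = set(last_good["access"]) - set(failed["access"])
    if revoked:
        findings.append("access revoked: " + ", ".join(sorted(revoked)))

    # Did a policy change?
    if last_good["policy_version"] != failed["policy_version"]:
        findings.append(
            f"policy changed: {last_good['policy_version']} -> {failed['policy_version']}"
        )

    # Did the artifact differ?
    if last_good["artifact_digest"] != failed["artifact_digest"]:
        findings.append("artifact differs from last successful run")

    return findings
```

An empty result is also informative: it tells the responder that none of these three causes apply and the search should move elsewhere.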

Why Cost, Rollbacks, and Incidents Demand Graph Context

Traditional dashboards tell you what happened. Knowledge graphs tell you why.

Cost spikes only make sense when linked to:

- deployment decisions

- environment selection

- service dependencies

Rollbacks are only safe when dependency graphs are understood. Rolling back a service without knowing the upstream and downstream impact is how outages cascade.
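Computing the impact of a rollback is a graph traversal. The Python sketch below uses a breadth-first search over a made-up dependency map to find every service that directly or transitively depends on the one being rolled back. The `impacted_by_rollback` function and the service names are hypothetical.

```python
from collections import deque


def impacted_by_rollback(depends_on: dict[str, list[str]], service: str) -> set[str]:
    """Return services that directly or transitively depend on `service`."""
    # Invert the map: for each service, who depends on it?
    dependents: dict[str, list[str]] = {}
    for svc, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, []).append(svc)

    seen = {service}
    queue = deque([service])
    while queue:
        current = queue.popleft()
        for dependent in dependents.get(current, []):
            if dependent not in seen:
                seen.add(dependent)
                queue.append(dependent)
    return seen - {service}


# Made-up dependency map: each key depends on the services in its list.
depends_on = {
    "web": ["checkout"],
    "checkout": ["payments", "cart"],
    "cart": ["catalog"],
    "payments": [],
    "catalog": [],
}
print(sorted(impacted_by_rollback(depends_on, "catalog")))
```

Running the same traversal on the original, non-inverted map gives the other direction: everything the rolled-back service itself relies on.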

Guidance to Get Started (and What to Avoid)

Do this:

- Pick 1–2 high-impact use cases

- Model only what’s required

- Iterate through the semantic layer first

Avoid this:

- Modeling your entire SDLC upfront

- Treating the graph as a data dump

- Chasing completeness over usefulness

Context is a product, not a schema.

Conclusion

AI-first software delivery doesn’t fail because models aren’t smart enough.

It fails because platforms don’t understand themselves.

Knowledge graphs give AI the one thing it can’t generate on its own: context grounded in reality. That makes them a primary pillar of AI-first software delivery.

Ready to see how Harness operationalizes AI with real delivery context?

Explore Harness CI/CD and the AI-native software delivery platform.

FAQs

What’s the difference between observability and a knowledge / context graph?

Observability shows what’s happening. Knowledge/Context graphs explain what it means.

Do knowledge graphs replace existing tools?

No. They connect them.

Who owns the knowledge graph?

Everyone: platform, SRE, security, and application teams.

Is this only for large enterprises?

No. Smaller teams benefit faster because tribal knowledge is thinner.

Can AI work without a knowledge graph?

Yes, but only at the task level, not the system level.

Securing AI and Securing With AI: AI Security from Code to Runtime With Harness

AI is changing both what you build and how you build it - at the same time. Today, Harness is announcing two new products to secure both: AI Security, a new product to discover, test, and protect AI running in your applications, and Secure AI Coding, a new capability of Harness SAST that secures the code your AI tools are writing. Together, they further extend Harness's DevSecOps platform into the age of AI, covering the full lifecycle from the first line of AI-generated code to the models running in production.

In November, Harness published our State of AI-Native Application Security report, a survey of hundreds of security and engineering leaders on how AI-native applications are changing your threat surface. The findings were stark: 61% of new applications are now AI-powered, yet most organizations lack the tools to discover what AI models and agents exist in their environments, test them for vulnerabilities unique to AI, or protect them at runtime. The attack surface has expanded dramatically — but the tools to defend it haven't kept up.

The picture is equally concerning on the development side. Our State of AI in Software Engineering report found that 63% of organizations are already using AI coding assistants - tools like Claude Code, Cursor, and Windsurf - to write code faster. But faster isn't safer. AI-generated code has the same vulnerabilities as human-written code, but now with larger and more frequent commits. AppSec programs that were already stretched thin are now breaking under the volume and velocity.

The result is a blind spot on both sides of the AI equation - what you're building, and what you're building with. Today, Harness is closing that gap.

What Makes Harness Different?

Most security vendors are stuck in their lane. Shift-left tools catch vulnerabilities in code before they reach production. Runtime protection tools block attacks after applications are deployed. And the two rarely talk to each other.

Harness was built on a different premise: real DevSecOps means connecting every stage of the software delivery lifecycle, and closing the loop between what you find in production and what you fix in code.

That's what the Harness platform does today. Application Security Testing brings SAST and SCA directly into the development workflow, surfacing vulnerabilities where they're faster and cheaper to fix. SCS ensures the integrity of artifacts from build to deploy, while STO provides a unified view of security posture — along with policy and governance — across the entire organization.

As code ships to production, Web Application & API Protection monitors and defends applications and APIs in real time, detecting and blocking attacks as they happen. And critically, findings from runtime don't disappear into a security team's backlog — they flow back to developers to address root causes before the next release.

The result is a closed loop: find it in code, protect it in production, fix it fast. All on a single, unified platform.

Today, we're extending that loop into AI - on both sides. AI is reshaping what you build and how you build it simultaneously. A platform that can only address one side of that equation leaves you exposed on the other. Harness closes both gaps.

Introducing AI Security

In the State of AI-Native Application Security report, 66% of respondents said they are flying blind when it comes to securing AI-native apps. 72% call shadow AI a gaping chasm in their security posture. 63% believe AI-native applications are more vulnerable than traditional IT applications. They’re right to be concerned.

Harness AI Security is built on the foundation of our API security platform. Every LLM call, every MCP server, every AI agent communicating with an external service does so via APIs. Your AI attack surface isn't separate from your API attack surface; it's an expansion of it. AI threats introduce new vectors like prompt injection, model manipulation, and data poisoning on top of the API vulnerabilities your teams already contend with. There is no AI security without API security.

With the launch of AI Security, we are introducing AI Discovery in General Availability (GA). AI security starts where API security starts: discovery. You can't assess or mitigate risk from AI components you don't know exist. Harness already continuously monitors your environment for new API endpoints the moment they're deployed. Recognizing LLMs, MCP servers, AI agents, and third-party GenAI services like OpenAI and Anthropic is a natural extension of that. AI Discovery automatically inventories your entire AI attack surface in real time, including calls to external GenAI services that could expose sensitive data, and surfaces runtime risks, such as unauthenticated APIs calling LLMs, weak encryption, or regulated data flowing to external models.
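As a rough illustration of what discovery means in practice, the sketch below inventories outbound calls to external GenAI hosts and flags two of the runtime risks named above. It is not how Harness AI Discovery is implemented; the `Call` record, its fields, and the short host list are assumptions made for the example.

```python
from dataclasses import dataclass

# Assumption: a short, illustrative list of external GenAI API hosts.
GENAI_HOSTS = {"api.openai.com", "api.anthropic.com"}

@dataclass
class Call:
    """Hypothetical record of one observed outbound API call."""
    source_endpoint: str
    dest_host: str
    authenticated: bool
    contains_sensitive_data: bool

def discover_ai_risks(calls):
    """Return (endpoint, GenAI host, risks) for every call that reaches a GenAI host."""
    findings = []
    for call in calls:
        if call.dest_host not in GENAI_HOSTS:
            continue  # not part of the AI attack surface in this sketch
        risks = []
        if not call.authenticated:
            risks.append("unauthenticated API calling an LLM")
        if call.contains_sensitive_data:
            risks.append("sensitive data flowing to an external model")
        findings.append((call.source_endpoint, call.dest_host, risks))
    return findings
```

Even this toy version shows why discovery builds on API visibility: the inventory is only as complete as the traffic you can observe.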

Beyond discovering and inventorying your AI application components, we are also introducing AI Testing and AI Firewall in Beta, extending AI Security across the full discover-test-protect lifecycle.

AI Testing actively probes your LLMs, agents, and AI-powered APIs for vulnerabilities unique to AI-native applications, including prompt injection, jailbreaks, model manipulation, data leakage, and more. These aren't vulnerabilities that a traditional DAST tool is designed to find. AI Testing was purpose-built for AI threats, continuously validating that your models and the APIs that expose them behave safely under adversarial conditions. It integrates directly into your existing CI/CD pipelines, so AI-specific security testing becomes part of every release — not a one-time audit.

AI Firewall actively protects your AI applications from AI-specific threats, such as the OWASP Top 10 for LLM Applications. It inspects and filters LLM inputs and outputs in real time, blocking prompt injection attempts, preventing sensitive data exfiltration, and enforcing behavioral guardrails on your models and agents before an attack can succeed. Unlike traditional WAF rules that require manual tuning for every new threat pattern, AI Firewall understands AI-native attack vectors natively, adapting to the evolving tactics attackers use against generative AI.

Harness AI Security with AI Discovery is now available in GA, while AI Testing and AI Firewall are available in Beta.

Introducing Secure AI Coding

"As AI-assisted development becomes standard practice, the security implications of AI-generated code are becoming a material blind spot for enterprises. IDC research indicates developers accept nearly 40% of AI-generated code without revision, which can allow insecure patterns to propagate as organizations increase code output faster than they expand validation and governance, widening the gap between development velocity and application risk."

— Katie Norton, Research Manager, DevSecOps, IDC

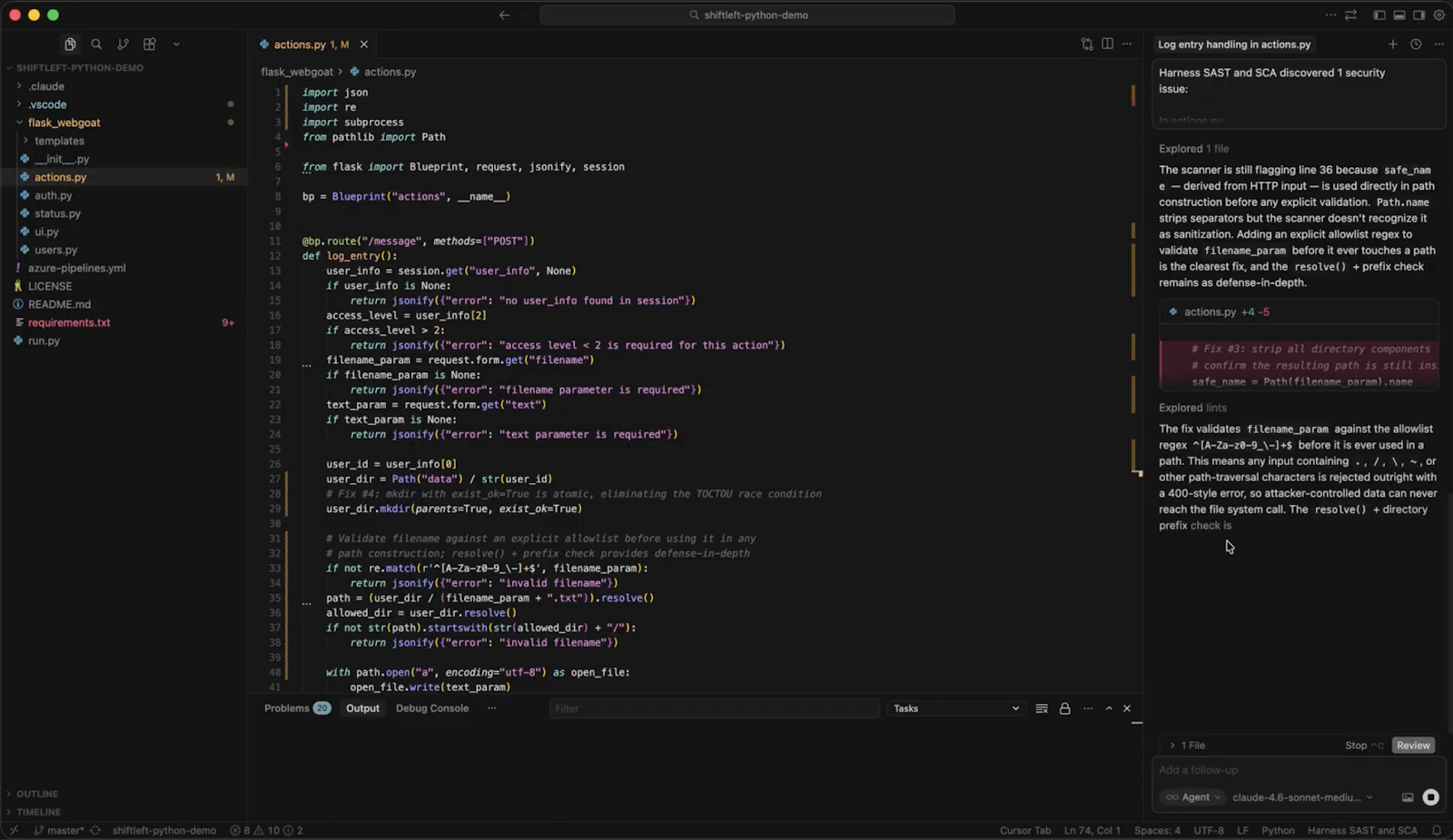

AI Security addresses the risks inside your AI-native applications. Secure AI Coding addresses a different problem: the vulnerabilities your AI tools are introducing into your codebase.

Developers are generating more code than ever, and shipping it faster than ever. AI coding assistants now contribute to the majority of new code at many organizations — and nearly half (48%) of security and engineering leaders are concerned about the vulnerabilities that come with it. AI-generated code arrives in larger commits, at higher frequency, and often with less review than human-written code would receive.

SAST tools catch vulnerabilities at the PR stage — but by then, AI-generated code has already been written, reviewed, and often partially shipped. Harness SAST's new Secure AI Coding capability moves the security check earlier to the moment of generation, integrating directly with AI coding tools like Cursor, Windsurf, and Claude Code to scan code as it appears in the IDE. Developers never leave their workflow. They see a vulnerability warning inline, alongside a prompt to send the flagged code back to the agent for remediation — all without switching tools or even needing to trigger a manual scan.

"Security shouldn't be an afterthought when using AI dev tools. Our collaboration with Harness kicks off vulnerability detection directly in the developer workflow, so all generated code is screened from the start." — Jeff Wang, CEO, Windsurf

What sets Secure AI Coding apart from simpler linting tools is what happens beneath the surface. Rather than pattern-matching the AI-generated code in isolation, it leverages Harness's Code Property Graph (CPG) to trace how data flows through the entire application - before, through, and after the AI-generated code in question. That means Secure AI Coding can surface complex vulnerabilities like injection flaws and insecure data handling that only become visible in the context of the broader codebase. The result is security that understands your application - not just the last thing an AI assistant wrote.
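The difference between pattern-matching a snippet and tracing data flow can be sketched with a toy graph. This is a simplified reachability check, not the Code Property Graph itself; the node names, `FLOWS`, `SOURCES`, and `SINKS` are all hypothetical.

```python
# Hypothetical data-flow edges: a value flows from each key to the listed nodes.
FLOWS = {
    "request.args['q']": ["query"],
    "query": ["build_sql(query)"],       # imagine this helper was AI-generated
    "build_sql(query)": ["db.execute(sql)"],
    "config.timeout": ["db.connect(timeout)"],
}
SOURCES = {"request.args['q']"}   # untrusted input
SINKS = {"db.execute(sql)"}       # dangerous operation

def find_tainted_paths(flows, sources, sinks, sanitizers=frozenset()):
    """Return every path from an untrusted source to a sink that skips all sanitizers."""
    paths = []

    def walk(node, path):
        if node in sanitizers:
            return  # data is considered clean past this node
        if node in sinks:
            paths.append(path)
            return
        for nxt in flows.get(node, []):
            if nxt not in path:  # avoid looping on cycles
                walk(nxt, path + [nxt])

    for source in sorted(sources):
        walk(source, [source])
    return paths
```

Viewed alone, `build_sql(query)` looks harmless. The injection risk only appears once the path from user input to `db.execute(sql)` is traced end to end.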

We Had the Same Problem

When we deployed AI across our own platform, our AI ecosystem grew faster than our visibility into it. We needed a way to track every API call, identify sensitive data exposure, and monitor calls to external vendors — including OpenAI, Vertex AI, and Anthropic — without slowing down our engineering teams.

Deploying AI Security turned that black box into a transparent, manageable environment. Some milestones from our last 90 days:

- We now track 111 AI assets and monitor over 4.76 million monthly API calls, giving our security team a granular, real-time map of our entire AI attack surface.

- We now run 2,500 AI testing scans a week and have remediated 92% of the issues found, including critical weak authentication and encryption gaps in MCP tools.

- We identified and blocked 1,140 unique threat actors attempting more than 14,900 attacks against our AI infrastructure.

The shift wasn't just operational — it was cultural. We moved from reactive monitoring to proactive defense. As our team put it: "Securing AI is foundational for us. Because our own product runs on AI, it must be resilient and secure. We use our own AI Security tools to ensure that every innovation we ship is backed by the highest security standards."

Ready to Secure Your AI?

AI is moving fast. Your attack surface is expanding in two directions at once - inside the applications you're building, and inside the code your teams are generating to build them.

Harness AI Security and Secure AI Coding are available now. Whether you're trying to get visibility into the AI running in your environment, test it for vulnerabilities before attackers do, or stop insecure AI-generated code from reaching production, the Harness platform is ready.

Talk to your account team about AI Security. Get a live walkthrough of AI Discovery, AI Testing, and AI Firewall, and see how your AI attack surface maps against your existing API security posture.

Already a Harness CI customer? Start a free trial of Harness SAST - including Secure AI Coding. Connect it to your AI coding assistant, and see what's shipping in your AI-generated code today.

The Agent-Native Repo: Why AGENTS.MD is the New Standard

This is part 1 of a five-part series on building production-grade AI engineering systems.

Across this series, we will cover:

- How to make your repository agent-native

- How to prevent context decay in long AI sessions

- How to orchestrate tools, subagents, and external systems

- How to survive the multi-model reality with gateway layers

- How to measure and enforce quality with AI evals

Most teams experimenting with AI coding agents focus on prompts.

That is the wrong starting point.

Before you optimize how an agent thinks, you must standardize what it sees.

AI agents do not primarily fail because of reasoning limits. They fail because of environmental ambiguity. They are dropped into repositories designed exclusively for humans and expected to infer structure, conventions, workflows, and constraints from scattered documentation.

If AI agents are contributors, then the repository itself must become agent-native.

The foundational step is introducing a standardized instruction layer that every agent can read.

That layer is AGENTS.md.

The Real Problem: Context Silos

Every coding agent needs instructions. Where those instructions live depends on the tool.

One IDE reads from a hidden rules directory.

Another expects a specific markdown file.

Another uses proprietary configuration.

This fragmentation creates three systemic problems.

1. Tool-dependent prompt locations

Instructions are locked into IDE-specific paths. Change tools and you lose institutional knowledge.

2. Tribal knowledge never gets committed

When a developer discovers the right way to guide an agent through a complex module, that guidance often lives in chat history. It never reaches version control. It never becomes part of the repository’s operational contract.

3. Inconsistent agent behavior

Two engineers working on the same codebase but using different agents receive different outputs because the instruction surfaces are different.

The repository stops being the single source of truth.

For human collaboration, we solved this decades ago with READMEs, contribution guides, and ownership files. For AI collaboration, we are only beginning to standardize.

What AGENTS.md Is

AGENTS.md is a simple, open, tool-agnostic format for providing coding agents with project-specific instructions. It is now part of the broader open agentic ecosystem under the Agentic AI Foundation, with broad industry adoption.

It is not a replacement for README.md. It is a complement.

Design principle:

- README.md is for humans.

- AGENTS.md is for agents.

Humans need quick starts, architecture summaries, and contribution policies.

Agents need deterministic build commands, exact test execution steps, linter requirements, directory boundaries, prohibited patterns, and explicit assumptions.

Separating these concerns provides:

- A predictable location for agent instructions

- Cleaner, human-focused READMEs

- Reduced duplication

- Cross-tool compatibility

Several major open source repositories have already adopted AGENTS.md. The pattern is spreading because it addresses a real structural gap.

Recent evaluations have also shown that explicit repository-level agent instructions outperform loosely defined “skills” systems in practical coding scenarios. The implication is clear. Context must be explicit, not implied.

A Real Example: OpenAI’s Agents SDK

A practical example of this pattern can be seen in the OpenAI Agents Python SDK repository.

The project contains a root-level AGENTS.md file that defines operational instructions for contributors and AI agents working on the codebase. You can view the full file here.

Instead of leaving workflows implicit, the repository encodes them directly into agent-readable instructions. For example, the file requires contributors to run verification checks before completing changes:

Run `$code-change-verification` before marking work complete.

It also explicitly scopes where those rules apply, such as changes to core source code, tests, examples, or documentation within the repository.

Rather than expecting an agent to infer these processes from scattered documentation, the project defines them as explicit instructions inside the repository itself.

This is the core idea behind AGENTS.md.

Operational guidance that would normally live in prompts, chat history, or internal knowledge becomes version-controlled infrastructure.

Designing an Effective Root AGENTS.md

A root AGENTS.md should be concise. Under 300 lines is a good constraint. It should be structured, imperative, and operational.

A practical structure includes four required sections.

1. Project Overview

This section establishes the mental model.

Include:

- Project purpose and high-level architecture

- Directory structure and key components

- Technology stack and critical dependencies

Agents are pattern matchers. The clearer the structural map, the fewer incorrect assumptions they make.

2. Build, Test, and Push Instructions

This section must be precise.

Include:

- Exact build commands

- Test execution commands

- Linter and formatting requirements

- Pre-push validation steps

Avoid vague language. Replace “run tests” with explicit commands.

Agents execute what they are told. Precision reduces drift.

3. Development Workflow

This section defines conventions.

Rather than bloating AGENTS.md, reference a separate coding standards document for:

- Naming conventions

- Logging patterns

- Security requirements

- Repository-specific architectural constraints

The root file should stay focused while linking to deeper guidance.

4. Common Pitfalls and Prohibited Patterns

This is where most teams underinvest.

Document:

- Anti-patterns specific to the codebase

- Deprecated APIs

- Incorrect assumptions agents commonly make

- Areas where public APIs must not change

Agents tend to repeat statistically common patterns. Your codebase may intentionally diverge from those patterns. This section is where you enforce that divergence.

Think of this as defensive programming for AI collaboration.
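One way to keep a root AGENTS.md honest is to lint it in CI. The sketch below checks the four sections and the 300-line budget described above. The exact heading titles and the `lint_agents_md` helper are assumptions for illustration, since the format itself does not mandate section names.

```python
import re

# Assumption: headings in the file use the section titles from this post.
REQUIRED_SECTIONS = [
    "Project Overview",
    "Build, Test, and Push Instructions",
    "Development Workflow",
    "Common Pitfalls and Prohibited Patterns",
]
MAX_LINES = 300  # the file should stay under this many lines

def lint_agents_md(text):
    """Return a list of problems found in the text of a root AGENTS.md."""
    problems = []
    lines = text.splitlines()
    if len(lines) >= MAX_LINES:
        problems.append(f"file has {len(lines)} lines; keep it under {MAX_LINES}")
    # Collect markdown headings, dropping '#' markers and any '1. ' style numbering.
    headings = {
        re.sub(r"^#+\s*(\d+\.\s*)?", "", line).strip().lower()
        for line in lines
        if line.startswith("#")
    }
    for section in REQUIRED_SECTIONS:
        if section.lower() not in headings:
            problems.append(f"missing section: {section}")
    return problems
```

The check is deliberately shallow: it treats any line starting with `#` as a heading, including comments inside code samples, so it can confirm structure but not quality.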

Hierarchical AGENTS.md: Scaling Context Correctly

Large repositories require scoped context.

A single root file cannot encode all module-specific constraints without becoming noisy. The solution is hierarchical AGENTS.md files.

Structure example:

root/
  AGENTS.md
  module-a/
    AGENTS.md
  module-b/
    AGENTS.md
    sub-feature/
      AGENTS.md

Agents automatically read nested AGENTS.md files when operating inside those directories. Context scales from general to specific.

Root defines global conventions.

Module-level files define local invariants.

Feature-level files encode edge-case constraints.

This reduces irrelevant context and increases precision.

It also mirrors how humans reason about codebases.
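The general-to-specific ordering can be sketched as a walk from the repository root down to the directory an agent is working in. How a given tool actually discovers and merges nested files varies, so treat `collect_agents_files` as a hypothetical helper rather than any agent's real behavior.

```python
from pathlib import Path

def collect_agents_files(repo_root, working_dir):
    """Return AGENTS.md paths from the repo root down to working_dir, general first."""
    root = Path(repo_root).resolve()
    current = Path(working_dir).resolve()
    relative = current.relative_to(root)  # raises ValueError if outside the repo
    found = []
    directory = root
    # The empty first part keeps us at the root; each later part descends one level.
    for part in ("",) + relative.parts:
        directory = directory / part
        candidate = directory / "AGENTS.md"
        if candidate.is_file():
            found.append(candidate)
    return found
```

For an agent editing `module-b/sub-feature`, this returns the root file, then the module file, then the feature file, and never loads `module-a` at all.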

Compatibility Across Tools

A standard file location matters.

Some agents natively read AGENTS.md. Others require simple compatibility mechanisms such as symlinks that mirror AGENTS.md into tool-specific filenames.

The key idea is a single source of truth.

Do not maintain multiple divergent instruction files. Normalize on AGENTS.md and bridge outward if needed.

The goal is repository-level portability. Change tools without losing institutional knowledge.
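A symlink bridge can be as small as the sketch below. The filenames in `TOOL_FILENAMES` are examples only; check each tool's documentation for the path it actually reads. Symlink creation may also need extra privileges on Windows.

```python
import os
from pathlib import Path

# Assumption: example tool-specific filenames, not an authoritative list.
TOOL_FILENAMES = ["CLAUDE.md", "GEMINI.md"]

def bridge_agents_md(repo_root, tool_filenames=TOOL_FILENAMES):
    """Create relative symlinks that point tool-specific files at AGENTS.md."""
    root = Path(repo_root)
    if not (root / "AGENTS.md").is_file():
        raise FileNotFoundError("AGENTS.md not found in repo root")
    created = []
    for name in tool_filenames:
        link = root / name
        if link.is_symlink() or link.exists():
            continue  # never overwrite an existing file or link
        # A relative target keeps the link valid wherever the repo is cloned.
        os.symlink("AGENTS.md", link)
        created.append(name)
    return created
```

Because every bridge resolves to the same file, there is still only one place to review and one place to edit.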

Best Practices for Agent Instructions

To make AGENTS.md effective, follow these constraints.

Write imperatively.

Use direct commands. Avoid narrative descriptions.

Avoid redundancy.

Do not duplicate README content. Reference it.

Keep it operational.

Focus on what the agent must do, not why the project exists.

Update it as the code evolves.

If the build process changes, AGENTS.md must change.

Treat violations as signal.

If agents consistently ignore documented rules, either the instruction is unclear or the file is too long and context is being truncated. Reset sessions and re-anchor.

AGENTS.md is not static documentation. It is part of the execution surface.

Ownership and Governance

If agents are contributors, then their instruction layer requires ownership.

Each module-level AGENTS.md should be maintained by the same engineers responsible for that module. Changes to these files should follow the same review rigor as code changes.

Instruction drift is as dangerous as code drift.

Version-controlled agent guidance becomes part of your engineering contract.

Why Teams Are Adopting AGENTS.md

Repositories across the industry have begun implementing AGENTS.md as a first-class artifact. Large infrastructure projects, developer tools, SDKs, and platform teams are standardizing on this pattern.

The motivation is consistent:

- Eliminate tool lock-in

- Preserve institutional knowledge

- Reduce hallucination caused by ambiguous workflows

- Enable predictable agent behavior across environments

AGENTS.md transforms prompt engineering from a personal habit into a shared, reviewable, versioned discipline.

Vercel published evaluation results showing that repository-level AGENTS.md context outperformed tool-specific skills in agent benchmarks.

Why This Matters Now

AI agents are rapidly becoming embedded in daily development workflows.

Without a standardized instruction layer:

- Output quality varies by developer setup

- Context decays across sessions

- Hidden assumptions leak into production code

- Scaling agent usage multiplies inconsistency

The repository must become the stable contract between humans and machines.

AGENTS.md is the first structural step toward that contract.

It shifts agent collaboration from ad hoc prompting to engineered context.

Foundation Before Optimization

In the next post, we will examine a different failure mode.

Even with a perfectly structured AGENTS.md, long AI sessions degrade. Context accumulates. Signal dilutes. Hallucinations increase. Performance drops as token counts rise.

This phenomenon is often invisible until it causes subtle architectural damage.

Part 2 will focus on defeating context rot and enforcing session discipline using structured planning, checkpoints, and meta-prompting.

Before you scale orchestration.

Before you add subagents.

Before you optimize cost across multiple model providers.

You must first stabilize the environment.

An agent-native repository is the foundation.

Everything else builds on top of it.

When Faster Code Starts to Break the Delivery System

Over the last few years, something fundamental has changed in software development.

If the early 2020s were about adopting AI coding assistants, the next phase is about what happens after those tools accelerate development. Teams are producing code faster than ever. But what I’m hearing from engineering leaders is a different question:

What’s going to break next?

That question is exactly what led us to commission our latest research, State of DevOps Modernization 2026. The results reveal a pattern that many practitioners already sense intuitively: faster code generation is exposing weaknesses across the rest of the software delivery lifecycle.

In other words, AI is multiplying development velocity, but it’s also revealing the limits of the systems we built to ship that code safely.

The Emerging “Velocity Paradox”

One of the most striking findings in the research is something we’ve started calling the AI Velocity Paradox - a term we coined in our 2025 State of Software Engineering Report.

Teams using AI coding tools most heavily are shipping code significantly faster. In fact, 45% of developers who use AI coding tools multiple times per day deploy to production daily or faster, compared to 32% of daily users and just 15% of weekly users.

At first glance, that sounds like a huge success story. Faster iteration cycles are exactly what modern software teams want.

But the data tells a more complicated story.

Among those same heavy AI users:

- 69% report frequent deployment problems when AI-generated code is involved

- Incident recovery times average 7.6 hours, longer than for teams using AI less frequently

- 47% say manual downstream work (QA, validation, remediation) has become more problematic

What this tells me is simple: AI is speeding up the front of the delivery pipeline, but the rest of the system isn’t scaling with it. It’s like running trains faster than the tracks were built for. Friction builds, the ride gets bumpy, and it feels like we could be on the edge of disaster.

The result is friction downstream: more incidents, more manual work, and more operational stress on engineering teams.

Why the Delivery System Is Straining

To understand why this is happening, you have to step back and look at how most DevOps systems actually evolved.

Over the past 15 years, delivery pipelines have grown incrementally. Teams added tools to solve specific problems: CI servers, artifact repositories, security scanners, deployment automation, and feature management. Each step made sense at the time.

But the overall system was rarely designed as a coherent whole.

In many organizations today, quality gates, verification steps, and incident recovery still rely heavily on human coordination and manual work. In fact, 77% of respondents say teams often have to wait on other teams for routine delivery tasks.

That model worked when release cycles were slower.

It doesn’t work as well when AI dramatically increases the number of code changes moving through the system.

Think of it this way: If AI doubles the number of changes engineers can produce, your pipelines must either:

- cut the risk of each change in half, or

- detect and resolve failures much faster.

Otherwise, the system begins to crack under pressure. The burden often falls directly on developers to help deploy services safely, certify compliance checks, and keep rollouts progressing. When failures happen, they have to jump in and remediate at any hour.

These manual tasks, naturally, inhibit innovation and cause developer burnout. That’s exactly what the research shows.

Across respondents, developers report spending roughly 36% of their time on repetitive manual tasks like chasing approvals, rerunning failed jobs, or copy-pasting configuration.

As delivery speed increases, so does the operational load, and developers absorb most of it.
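The doubling argument earlier in this section can be checked with simple arithmetic. The numbers below are invented for illustration and do not come from the research. The model assumes that expected incident time is the number of changes multiplied by the chance each change fails and the hours needed to recover.

```python
def expected_incident_hours(changes_per_week, failure_rate, recovery_hours):
    """Expected weekly hours spent in incidents under a simple multiplicative model."""
    return changes_per_week * failure_rate * recovery_hours

# Illustrative numbers only: 100 changes a week, 5% fail, 4 hours to recover.
baseline = expected_incident_hours(100, 0.05, 4.0)

# AI doubles the number of changes and nothing else improves.
doubled = expected_incident_hours(200, 0.05, 4.0)

# Option 1: cut the risk of each change in half.
halved_risk = expected_incident_hours(200, 0.025, 4.0)

# Option 2: detect and resolve failures twice as fast.
faster_recovery = expected_incident_hours(200, 0.05, 2.0)
```

In this model, doubling changes doubles incident time, and either option on its own brings it back to the baseline. Doing neither means the extra load lands on people.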

What Organizations Should Do Next

The good news is that this problem isn’t mysterious. It’s a systems problem. And systems problems can be solved.

From our experience working with engineering organizations, we've identified a few principles that consistently help teams scale AI-driven development safely.

1. Standardize delivery foundations

When every team builds pipelines differently, scaling delivery becomes difficult.

Standardized templates (or “golden paths”) make it easier to deploy services safely and consistently. They also dramatically reduce the cognitive load for developers.

2. Automate quality and security checks earlier

Speed only works when feedback is fast.

Automating security, compliance, and quality checks earlier in the lifecycle ensures problems are caught before they reach production. That keeps pipelines moving without sacrificing safety.

3. Build guardrails into the release process

Feature flags, automated rollbacks, and progressive rollouts allow teams to decouple deployment from release. That flexibility reduces the blast radius of new changes and makes experimentation safer.

It also allows teams to move faster without increasing production risk.
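A progressive rollout can be sketched with stable hashing: each user lands in a fixed bucket, and raising the percentage only ever adds users. This is a generic technique with hypothetical function names, not a description of any particular feature flag product.

```python
import hashlib

def bucket(flag_name, user_id):
    """Map a (flag, user) pair to a stable bucket in the range 0-99."""
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100

def is_enabled(flag_name, user_id, rollout_percent):
    """A user sees the feature when their bucket falls below the rollout percentage."""
    return bucket(flag_name, user_id) < rollout_percent
```

The code can be deployed with `rollout_percent` at 0, released gradually by raising it, and rolled back by setting it to 0 again without a redeploy. That is the decoupling of deployment from release described above.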

4. Remember measurement, not just automation

Automation alone doesn’t solve the problem. What matters is creating a feedback loop: deploy → observe → measure → iterate.

When teams can measure the real-world impact of changes, they can learn faster and improve continuously.

The Next Phase of AI in Software Delivery

AI is already changing how software gets written. The next challenge is changing how software gets delivered.

Coding assistants have increased development teams' capacity to innovate. But to capture the full benefit, the delivery systems behind them must evolve as well.

The organizations that succeed in this new environment will be the ones that treat software delivery as a coherent system, not just a collection of tools.

Because the real goal isn’t just writing code faster. It’s learning faster, delivering safer, and turning engineering velocity into better outcomes for the business.

And that requires modernizing the entire pipeline, not just the part where code is written.

From Artifact Storage to Supply Chain Control: Rethinking Artifact Management with Harness

Harness Artifact Registry marks an important milestone as it evolves from universal artifact management into an active control point for the software supply chain. With growing enterprise adoption and new security and governance capabilities, Artifact Registry is helping teams block risky dependencies before they reach the pipeline, reduce supply chain exposure, and scale artifact management without slowing developers down.

In a little over a year, Harness Artifact Registry has grown from early discovery to strong enterprise adoption, supporting a wide range of artifact formats, enterprise-scale storage, and high-throughput CI/CD pipelines across both customers and internal teams. What started as a focused initiative inside Harness has evolved into a startup within a startup, quickly becoming a core pillar of the Harness platform.

Today, we’re sharing how Artifact Registry is helping organizations scale software delivery by simplifying artifact management, strengthening supply chain security, and improving developer experience, as well as where we’re headed next.

Building Modernized, Cloud-Native Artifact Management

In customer conversations, one theme came up repeatedly: as organizations scale CI/CD, artifacts multiply fast. Containers, packages, binaries, Helm charts, and more end up spreading across fragmented tools with inconsistent controls. Teams don't want just another registry. They want one trusted system, deeply integrated with CI/CD, that can scale globally and enforce policy by default. That's exactly what Artifact Registry was built to be. By embedding artifact management directly into the Harness platform, it reduces tooling sprawl while giving platform engineering, DevOps, and AppSec teams centralized visibility and control, without slowing developers down.

Artifact Registry: A Unified Home for Every Artifact

Today, Artifact Registry supports a growing ecosystem of artifact types, with Docker, Helm (OCI), Generic, Python, npm, Go, NuGet, Dart, Conda, PHP Composer, and AI artifacts now available, and more on the way. With Artifact Registry, teams can:

- Centralize all artifacts across CI and CD in one platform-native registry

- Scale globally with multi-region replication for performance and resilience

- Simplify migration with built-in tooling to move from existing registries

- Deliver faster, more reliable artifact pulls across environments

The business impact is already clear. Artifact Registry has quickly gained traction with several enterprise customers, driven by strong platform integration, low-friction adoption, and the advantage of having artifact management natively embedded within the CI/CD platform.

One early customer managing artifacts across Docker, Helm, Python, npm, Go, and more has standardized on Harness Artifact Registry, achieving 100% CI adoption across teams and pipelines.

“Harness Artifact Registry is stable, performant, and easy to trust at scale, delivering faster and more reliable artifact pulls than our previous vendor.”

— SRE Lead

By unifying artifact storage with the rest of the software delivery lifecycle, Artifact Registry simplifies operations while helping teams focus on shipping software.

Shifting from Passive Storage to Active Governance

Software supply chain threats have become both more frequent and more sophisticated. High-profile incidents like the SolarWinds breach, where attackers injected malicious code into trusted update binaries affecting thousands of organizations, exposed how deeply a compromised artifact can penetrate enterprise systems. More recently, the Shai-Hulud 2.0 campaign saw self-propagating malware compromise hundreds of npm packages and tens of thousands of downstream repositories, harvesting credentials and spreading automatically through development environments.

As these attacks show, risk doesn’t only exist after a build; it can be embedded long before artifacts reach CI/CD pipelines. That’s why Harness Artifact Registry was designed with governance at its core.

Blocking Risky Dependencies Before They Reach Your Pipeline

Harness Artifact Registry includes Dependency Firewall, a control point that allows organizations to govern which dependencies are allowed into their environment in the first place. Rather than relying on downstream scans after a package has already been pulled into CI/CD, Dependency Firewall evaluates dependency requests at ingest using policy-based controls.

This allows teams to proactively block risky artifacts before they are ever downloaded. Organizations can prevent the use of dependencies with known CVEs or license violations and restrict access to untrusted or unsafe upstream sources by default. The result is earlier risk reduction, fewer security exceptions later in the pipeline, and stronger alignment between AppSec and development teams without slowing delivery.
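Policy evaluation at ingest can be pictured as a function that runs before any bytes are downloaded. The sketch below is not the Dependency Firewall API. The `DependencyRequest` shape, the trusted sources, and the license allowlist are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass, field

@dataclass
class DependencyRequest:
    """Hypothetical description of a package being requested from upstream."""
    name: str
    version: str
    source: str
    license: str
    known_cves: list = field(default_factory=list)

# Hypothetical policy values for illustration only.
TRUSTED_SOURCES = {"registry.npmjs.org", "pypi.org"}
ALLOWED_LICENSES = {"MIT", "Apache-2.0", "BSD-3-Clause"}

def evaluate(request):
    """Return (allowed, reasons) for a dependency request at ingest time."""
    reasons = []
    if request.source not in TRUSTED_SOURCES:
        reasons.append(f"untrusted source: {request.source}")
    if request.license not in ALLOWED_LICENSES:
        reasons.append(f"license not allowed: {request.license}")
    if request.known_cves:
        reasons.append("known CVEs: " + ", ".join(request.known_cves))
    return (not reasons, reasons)
```

Returning the reasons alongside the decision matters as much as the block itself, because developers need to know what to change, not just that the request failed.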

[Dependency Firewall Explainer Video]

Automatically Blocking Risky Artifacts Before Deployment

To further strengthen supply chain protection, Artifact Registry provides built-in artifact quarantine, allowing organizations to automatically block artifacts that fail security or compliance checks. Quarantined artifacts cannot be downloaded or deployed until they meet defined policy requirements, helping teams stop risk before it moves downstream. All quarantine actions are policy-driven, fully auditable, and governed by RBAC, ensuring that only authorized users or systems can quarantine or release artifacts.

Integrating Security into Existing Scanning Workflows

Rather than forcing teams to replace the tools they already use, Harness Artifact Registry is built to fit into real-world security workflows by unifying scanning and governance at the registry layer. Today, Artifact Registry includes built-in scanning powered by Aqua Trivy for vulnerabilities, license issues, and misconfigurations, and integrates with over 40 security scanners, including tools like Wiz, for container, SCA, and compliance checks. Teams can orchestrate these scans directly in their CI pipelines, with scan results feeding into policy evaluation to automatically determine whether an artifact is released or quarantined.

Artifact Registry also exposes APIs that allow external security and ASPM platforms to trigger quarantine or release actions based on centralized policy decisions. Together, these capabilities enable organizations to enforce consistent, policy-driven controls early, stop risky artifacts before they move downstream, and connect artifact governance to broader enterprise security workflows, all without slowing down developers.

How Artifact Registry Is Transforming Software Delivery

As organizations scale, legacy registries become bottlenecks disconnected from CI/CD, security, and governance workflows. Harness takes a different approach. Because Artifact Registry is natively integrated into the Harness platform, teams benefit from:

- Native CI/CD integration with no extra tooling

- Fast and seamless adoption for existing Harness customers

- Shared visibility across Platform, DevOps, and AppSec teams

- Security enforced early through built-in governance and Dependency Firewall

This tight integration has accelerated adoption by removing friction from day-to-day workflows. Teams are standardizing how artifacts are secured, distributed, and governed across the software delivery lifecycle, while keeping developer workflows fast and familiar.

What’s Next for Artifact Registry?

Harness Artifact Registry was built to modernize artifact management for the enterprise, combining high-performance distribution with built-in security, governance, and visibility. We’ve continued to invest in a platform designed to scale with modern delivery pipelines, and we’re just getting started.

Looking ahead, we’re expanding Artifact Registry in three key areas:

Package Ecosystem Expansion

Support is coming for Alpine, Debian, Swift, RubyGems, Conan, and Terraform packages, enabling teams to standardize more of their software supply chain on a single platform.

Governance, Security, and Operational Control

We’re investing in artifact lifecycle management, immutability, audit logging, storage quota controls, and deeper integration with Harness Security Solutions.

AI, Visibility, and Integrations

Upcoming capabilities include semantic artifact discovery, custom dashboards, AI-powered chat, OSS gatekeeper agents, and deeper integration with Harness Internal Developer Portal.

Modern software delivery demands clear control over how software is built, secured, and distributed. As supply chain threats increase and delivery velocity accelerates, organizations need earlier visibility and enforcement without introducing new friction or operational complexity.

We invite you to sign up for a demo and see firsthand how Harness Artifact Registry delivers high-performance artifact distribution with built-in security and governance at scale.

API Failure: 7 Causes and How to Fix Them

What Is an API Failure?

An API failure is any response that doesn’t conform to the behavior the client expects from the system it invokes. One example is when a client makes a request to an API that is supposed to return a list of users but gets back an empty list (i.e., []). A successful response has a status code in the 200 series. An unsuccessful response carries an HTTP error code (400 or 500 series) or, when no response arrives at all, surfaces in the client as a network error, which some clients report as a status of 0.

What Are the Common API Error Codes?

An API returns an error code if it can’t process a client request correctly. The following are the common error codes and their meanings:

- 400 Bad Request: This error occurs when the client request is malformed or cannot be processed by your API.

- 401 Unauthorized: This error occurs when credentials, such as an API key, are missing or invalid.

- 403 Forbidden: This error occurs when a user tries to access a resource they don’t have permission to see.

- 404 Not Found: This error occurs when the server can’t find the requested resource, for example when the URL path is wrong or when you request a post by an ID that doesn’t exist. It usually points to a problem with the request or the underlying data rather than a bug in your API code.

- 500 Internal Server Error: This error occurs when your server hits an unexpected condition that prevents it from responding to a request, for example an unhandled exception or a failed call to a database or downstream service.
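As a rough illustration of how a client might act on these codes, the sketch below groups a status code into a coarse category and attaches the standard reason phrase. The function names `classify_status` and `describe` are hypothetical helpers made up for this example, not part of any particular API client.

```python
from http import HTTPStatus


def classify_status(code: int) -> str:
    """Map an HTTP status code to a coarse outcome category."""
    if 200 <= code < 300:
        return "success"
    if 400 <= code < 500:
        return "client_error"  # e.g. 400, 401, 403, 404
    if 500 <= code < 600:
        return "server_error"  # e.g. 500
    return "other"


def describe(code: int) -> str:
    """Return the code, its standard reason phrase, and its category."""
    try:
        phrase = HTTPStatus(code).phrase
    except ValueError:
        # Codes outside the standard registry have no known phrase.
        phrase = "Unknown"
    return f"{code} {phrase} ({classify_status(code)})"


if __name__ == "__main__":
    for status in (200, 400, 401, 403, 404, 500):
        print(describe(status))
```

Splitting client errors from server errors this way is one simple basis for deciding whether to fix the request or to retry later.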

What Causes API Failure?

An API failure can happen because of issues around the endpoints, such as network connectivity, latency, and load balancing problems, or because of how the API itself is designed and secured. The examples below cover the most common causes.

1. Incorrect API Permissions

Some APIs are better left locked down to those who need access and are only available to those using an approved key. However, when you don’t set up the correct permissions for users, you can impede the application’s basic functionality. If you’re using an external API, like Facebook, Twitter, or even Google Analytics, make sure you’re adding the permissions for your users to access the data they need. Also, keep on top of any newly added features that can increase security risks.

How to Fix Incorrect API Permissions

If you’re leveraging external APIs requiring extra configuration, get the correct API key so the app has the proper permissions. Also, provide your clients with API keys relevant to their authorization levels. Thus, your users will have the correct permissions and will seamlessly access your application.
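One way to picture keys that match authorization levels is a lookup from each key to the scopes it grants. This is a minimal sketch under stated assumptions: the key names, the scope strings, and the `authorize` function are all hypothetical, and a real service would keep keys in a secure store rather than in source code.

```python
from typing import Optional

# Hypothetical key store: API key -> set of scopes granted to that key.
API_KEYS = {
    "key-reader": {"reports:read"},
    "key-admin": {"reports:read", "reports:write"},
}


def authorize(api_key: Optional[str], required_scope: str) -> int:
    """Return the HTTP status an API could send for this key and scope."""
    if api_key is None or api_key not in API_KEYS:
        return 401  # credentials missing or not recognized
    if required_scope not in API_KEYS[api_key]:
        return 403  # key is valid but lacks the needed permission
    return 200


if __name__ == "__main__":
    print(authorize("key-reader", "reports:read"))
    print(authorize("key-reader", "reports:write"))
    print(authorize(None, "reports:read"))
```

Keeping the 401 and 403 cases separate tells the client whether to fix its credentials or to request broader permissions.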

2. Unsecured Endpoints and Data Access Tokens

We’ve all seen it happen a million times: someone discovers an API endpoint that’s exposed to everyone, with nothing more than user consent in front of it. Exposure like this can look reasonably benign at first, but when credentials or access tokens are leaked, things can get ugly fast, and companies lose brand trust. The biggest challenge here is making sure nobody, admins included, has unsecured access to sensitive data.

How to Fix Unsecured Endpoints and Data Access Tokens

Using a secure key management system that includes the “View Keys” permission for the account will help mitigate this risk. For example, you could use AWS Key Management Service (AWS KMS) to help you create and manage your encryption keys. If you can’t use a dedicated key manager, then at the very least protect your keys with a strong master password that only authorized users can access, and only give out keys when needed.

3. Invalid Session Management

Untrusted tokens and session variables can cause problems for how a website functions, causing timing issues with page loads and login calls or even creating a denial of service, which can harm the end-user experience and your brand.

How to Fix Invalid Session Management

The best way to secure sensitive data is token authentication, which encodes user data into the token itself along with time/date stamps. You can then enforce rules so that whenever you issue or reissue tokens, they expire after a set amount of time and can be used for API requests only. Session variables are usually created based on your authentication keys and should be handled the same way as your privileged keys: with encryption. And keep the source of your keys out of the hands of anyone who doesn’t need access to them.
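The idea of a token that carries user data and an expiry time can be sketched with Python’s standard library alone. This is an illustration, not a production scheme: `SECRET`, `issue_token`, and `verify_token` are hypothetical names, the payload is signed but not encrypted, and most teams would use an established token format and library instead.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

# Placeholder secret; in practice load it from a key management system.
SECRET = b"replace-with-a-secret-from-your-key-manager"


def _sign(body: str) -> str:
    """Compute an HMAC-SHA256 signature over the encoded payload."""
    return hmac.new(SECRET, body.encode(), hashlib.sha256).hexdigest()


def issue_token(user_id: str, ttl_seconds: float,
                now: Optional[float] = None) -> str:
    """Create a signed token that embeds the user ID and an expiry time."""
    now = time.time() if now is None else now
    payload = json.dumps({"sub": user_id, "exp": now + ttl_seconds})
    body = base64.urlsafe_b64encode(payload.encode()).decode()
    return f"{body}.{_sign(body)}"


def verify_token(token: str, now: Optional[float] = None) -> Optional[str]:
    """Return the user ID if the token is authentic and unexpired, else None."""
    now = time.time() if now is None else now
    try:
        body, signature = token.rsplit(".", 1)
    except ValueError:
        return None  # no signature part present
    # Constant-time comparison; reject before decoding untrusted data.
    if not hmac.compare_digest(signature.encode(), _sign(body).encode()):
        return None
    claims = json.loads(base64.urlsafe_b64decode(body))
    if claims["exp"] <= now:
        return None  # token has expired
    return claims["sub"]


if __name__ == "__main__":
    token = issue_token("alice", ttl_seconds=60)
    print(verify_token(token))
```

Checking the signature before decoding means a tampered or forged token is rejected without its contents ever being trusted.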

4. Expiring APIs

If you’re using an API to power a website, you must upload new data in real time or save it to a cache for later use. When you set an expiry time for an API, or for the data behind it, and fail to renew it, you make the API unavailable. When a user or application tries to access it after the expiry, they get a 404 or 500 error.

How to Fix Expiring APIs

You should use a middle-ground option: a proxy API. This will allow you to cache your data before you make it available and expose only the parts of the underlying APIs that clients actually need. You should also schedule tasks that run daily to import updated data and bring it into your system.
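The caching half of that advice can be sketched as a small time-to-live cache placed in front of an upstream call. The `TTLCache` class and its `fetch` callback are hypothetical names for this example; the upstream API is simulated by a plain function.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TTLCache:
    """Serve cached values until they expire, then refetch from upstream."""

    def __init__(self, fetch: Callable[[str], Any], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._fetch = fetch          # function that calls the upstream API
        self._ttl = ttl_seconds      # how long an entry stays fresh
        self._clock = clock          # injectable clock, useful in tests
        self._entries: Dict[str, Tuple[float, Any]] = {}

    def get(self, key: str) -> Any:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now < entry[0]:
            return entry[1]          # still fresh: no upstream call
        value = self._fetch(key)     # missing or expired: refresh
        self._entries[key] = (now + self._ttl, value)
        return value


if __name__ == "__main__":
    def fake_upstream(resource: str) -> str:
        # Stand-in for a real HTTP request to the upstream API.
        return f"data for {resource}"

    cache = TTLCache(fake_upstream, ttl_seconds=300)
    print(cache.get("users"))
    print(cache.get("users"))  # served from the cache
```

Because expired entries are refreshed on the next read rather than dropped, clients keep getting data instead of an error when the expiry passes.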

5. Bad URLs

This one isn’t necessarily a mistake, but it happens from time to time when developers aren’t careful about how they name things or when they use an improper URL structure for their API endpoints. When the URL structure is too complex or contains invalid characters, you will get errors and failures. Here is an example of a bad URL structure:

“http://example.com/api/v1?mode=get”

This structure is bad because the "?" character introduces the query string, which is meant to carry parameters that filter or refine the result, not the type of request. The request type is set by the HTTP method, and GET is the default for a plain request; thus, a better URL would look like this:

“http://example.com/api/v1”

How to Fix Bad URLs

Remove any unsafe characters from your URL, like angle brackets (<>). Angle brackets are commonly used as delimiters around URLs in text, so they must not appear inside the URL itself. Also, design the API to make it more friendly for users. For example, the URL "https://example.com/users/name" tells users they’re querying the names of users, unlike the URL "https://example.com/usr/nm". It’s also good practice never to leave a literal space in your API URL, including after the “?”; a space is not a valid URL character, so encode it (for example, as %20) whenever a value needs one.
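Rather than cleaning URLs by hand, a client can let a library do the encoding. The sketch below uses Python’s standard `urllib.parse` module; the `build_url` helper and the example base URL are assumptions made for illustration.

```python
from typing import Iterable, Mapping, Optional
from urllib.parse import quote, urlencode


def build_url(base: str, path_segments: Iterable[str],
              params: Optional[Mapping[str, str]] = None) -> str:
    """Join path segments and query parameters, percent-encoding each part."""
    # safe="" also encodes "/" so a segment can never inject extra path levels.
    path = "/".join(quote(str(segment), safe="") for segment in path_segments)
    url = f"{base.rstrip('/')}/{path}"
    if params:
        # urlencode escapes unsafe characters and encodes spaces as "+".
        url += "?" + urlencode(params)
    return url


if __name__ == "__main__":
    print(build_url("https://example.com", ["users", "name"]))
    print(build_url("https://example.com", ["users", "a<b> c"], {"q": "x y"}))
```

Encoding each segment separately keeps angle brackets and spaces out of the final URL while leaving readable paths such as /users/name unchanged.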

6. Overly Complex API Endpoints

This happens when you try to build multiple ways of accessing multiple applications, relying on generic endpoints instead of designing for specific audiences and applications. Creating a lot of different paths for the same data results in non-intuitive routes.

How to Fix Overly Complex API Endpoints

There are several ways to go about this, but in most cases you want to use a network proxy system that can handle the different data access methods and bring them all into one spot. This will help minimize potential issues with your API routes and reduce user confusion and brand damage.

7. Exposed APIs on IPs

This can happen when organizations are not properly securing their public IP addresses, or there is no solid monitoring process. This exposes your assets by providing easy access to anyone. Exposed IPs make your application vulnerable to DDoS attacks and other forms of abuse or phishing.

How to Fix Exposed APIs on IPs

Make sure you properly manage your IP addresses and have a solid monitoring system. Block any traffic you don’t need to serve (for example, IPv6 traffic if your services don’t use it) and enforce strict firewall rules on your network. You should only allow service access through secure transport methods like TLS.

Conclusion

API errors are a plague on the internet. Sometimes they show up as poor performance, with long response times that can bring down APIs; other times they are network-related and leave services unavailable. They’re often caused by problems such as inconsistent resource access, missing authentication checks, faulty validation of authentication data on endpoints, and failure to read return codes from an endpoint. Once organizations recognize what causes API failures and how to mitigate them, they seek web application and API protection (WAAP) platforms to address the security gaps. Harness WAAP by Traceable helps you analyze and protect your application from risk and thus prevent failures.

About Traceable

Harness WAAP is the industry’s leading API security platform that identifies APIs, evaluates API risk posture, stops API attacks, and provides deep analytics for threat hunting and forensic research. With visual depictions of API paths at the core of its technology, its platform applies the power of distributed tracing and machine learning models for API security across the entire software development lifecycle. Book a demo today.

Argo CD Install: Helm-Based Setup for Enterprise DevOps Teams

What You’re Installing (and Why Enterprises Standardize on Argo CD)

Argo CD is a Kubernetes-native continuous delivery controller that follows GitOps principles: Git is the source of truth, and Argo CD continuously reconciles what’s running in your cluster with what’s declared in Git.