
Google's GKE Autopilot provides fully managed Kubernetes without the operational overhead of node management, security patches, or capacity planning. However, running chaos engineering experiments on Autopilot has been challenging due to its security restrictions.

We've solved that problem.

Why This Matters

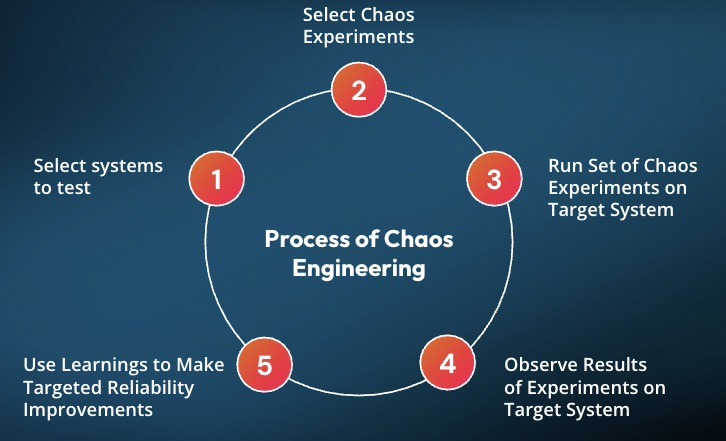

Chaos engineering helps you identify issues before they impact your users. The approach involves intentionally introducing controlled failures to understand how your system responds. Think of it as a fire drill for your infrastructure.

GKE Autopilot secures clusters by restricting many permissions, which is excellent for security. However, this made running chaos experiments difficult. You couldn't simply deploy Harness Chaos Engineering and begin testing.

That changes today.

What Changed

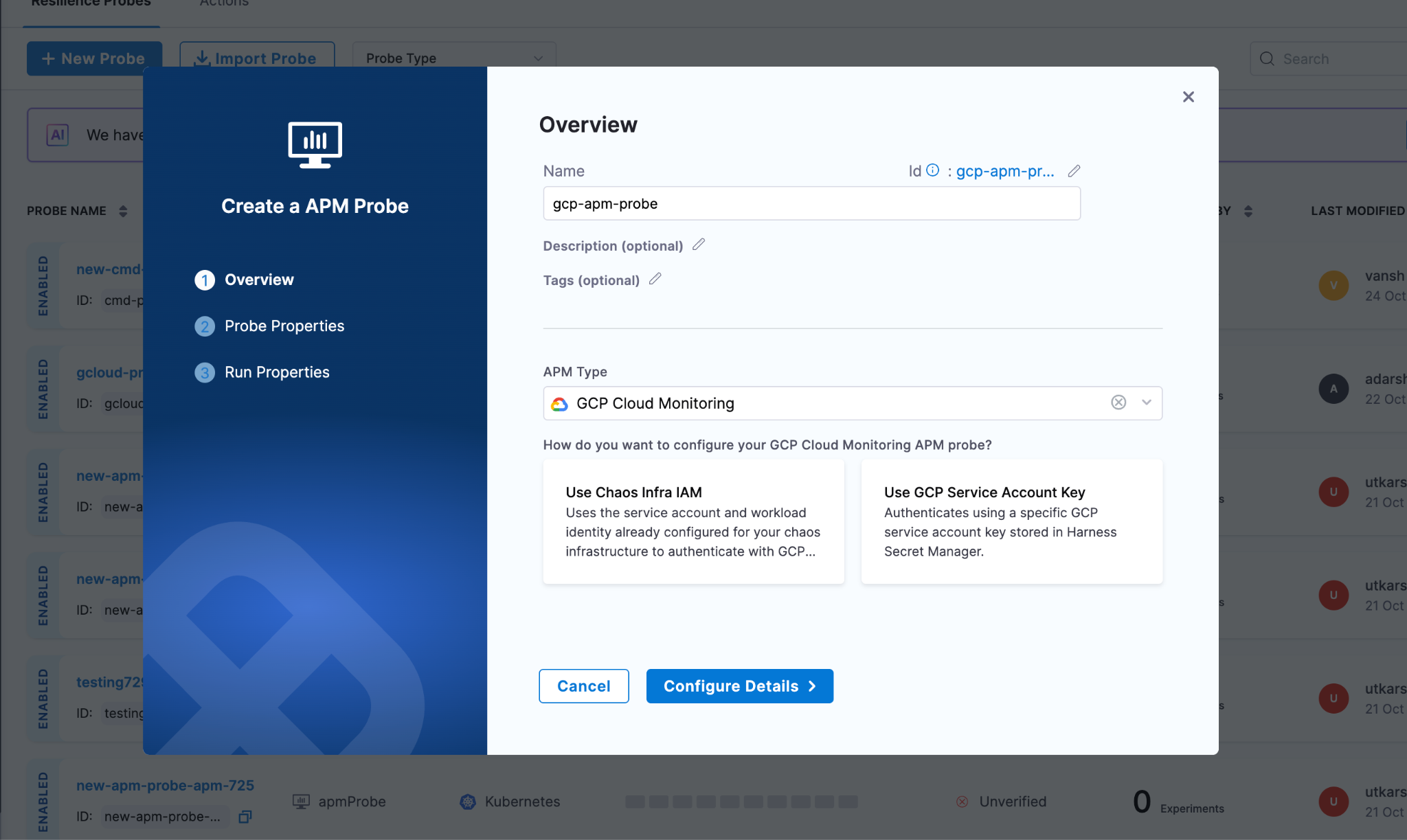

We collaborated with Google to add Harness Chaos Engineering to GKE Autopilot's official allowlist. This integration enables Harness to run chaos experiments while operating entirely within Autopilot's security boundaries.

No workarounds required. Just chaos engineering that works as expected.

How to Set It Up

1. Apply the Allowlist

First, you need to tell GKE Autopilot that Harness chaos workloads are okay to run. Copy this command:

```shell
kubectl apply -f - <<'EOF'
apiVersion: auto.gke.io/v1
kind: AllowlistSynchronizer
metadata:
  name: harness-chaos-allowlist-synchronizer
spec:
  allowlistPaths:
  - Harness/allowlists/chaos/v1.62/*
  - Harness/allowlists/service-discovery/v0.42/*
EOF
```

Then wait for it to be ready:

```shell
kubectl wait --for=condition=Ready allowlistsynchronizer/harness-chaos-allowlist-synchronizer --timeout=60s
```

That's it for the cluster configuration.
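If the `kubectl wait` step times out, it helps to see why before moving on. The following is a minimal sketch, assuming bash and kubectl access to the Autopilot cluster. The `wait_for_allowlist` helper is hypothetical (it is not part of Harness or GKE): it runs the same wait and, on failure, prints `kubectl describe` output for the synchronizer, whose status and events may explain the problem.

```shell
#!/usr/bin/env bash
# Hypothetical helper: wait for the Harness allowlist synchronizer and,
# if it never becomes Ready, print its details for troubleshooting.
SYNC="allowlistsynchronizer/harness-chaos-allowlist-synchronizer"

wait_for_allowlist() {
  local timeout="${1:-60s}"
  if kubectl wait --for=condition=Ready "$SYNC" --timeout="$timeout"; then
    # Show the synchronizer once it reports Ready
    kubectl get "$SYNC"
  else
    echo "allowlist synchronizer not Ready after ${timeout}" >&2
    # Status and events in the describe output may explain the failure
    kubectl describe "$SYNC" >&2
    return 1
  fi
}

# Usage (requires kubectl access to the Autopilot cluster):
#   wait_for_allowlist 120s
```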

2. Enable Autopilot Mode in Harness

Next, configure Harness to work with GKE Autopilot. You have several options:

If you're setting up chaos for the first time, just use the 1-click chaos setup and toggle on "Use static name for configmap and secret" during setup.

If you already have infrastructure configured, go to Chaos Engineering > Environments, find your infrastructure, and enable that same toggle.

You can also set this up when creating a new discovery agent, or update an existing one in Project Settings > Discovery.

What You Can Test

The integration supports most of the chaos experiments you'd expect:

Resource stress: Pod CPU Hog, Pod Memory Hog, Pod IO Stress, Disk Fill. These experiments help you understand how your pods behave under resource constraints.

Network chaos: Pod Network Latency, Pod Network Loss, Pod Network Corruption, Pod Network Duplication, Pod Network Partition, Pod Network Rate Limit. Production networks experience imperfections, and your application needs to handle them gracefully.

DNS problems: Pod DNS Error to disrupt resolution, Pod DNS Spoof to redirect traffic.

HTTP faults: Pod HTTP Latency, Pod HTTP Modify Body, Pod HTTP Modify Header, Pod HTTP Reset Peer, Pod HTTP Status Code. These experiments test how your APIs respond to unexpected behavior.

API-level chaos: Pod API Block, Pod API Latency, Pod API Modify Body, Pod API Modify Header, Pod API Status Code. Good for testing service mesh and gateway behavior.

File system chaos: Pod IO Attribute Override, Pod IO Error, Pod IO Latency, Pod IO Mistake. These experiments reveal how your application handles storage issues.

Container lifecycle: Container Kill and Pod Delete to test recovery. Pod Autoscaler to see if scaling works under pressure.

JVM chaos if you're running Java: Pod JVM CPU Stress, Pod JVM Method Exception, Pod JVM Method Latency, Pod JVM Modify Return, Pod JVM Trigger GC.

Database chaos for Java apps: Pod JVM SQL Exception, Pod JVM SQL Latency, Pod JVM Mongo Exception, Pod JVM Mongo Latency, Pod JVM Solace Exception, Pod JVM Solace Latency.

Cache problems: Redis Cache Expire, Redis Cache Limit, Redis Cache Penetration.

Time manipulation: Time Chaos to introduce controlled time offsets.

What This Means for You

If you're running GKE Autopilot and want to implement chaos engineering with Harness, you can now do both without compromise. There's no need to choose between Google's managed experience and resilience testing.

For teams new to chaos engineering, Autopilot provides an ideal starting point. The managed environment reduces infrastructure complexity, allowing you to focus on understanding application behavior under stress.

Getting Started

Start with a simple CPU stress test. Select a non-critical pod and run a low-intensity Pod CPU Hog experiment in Harness. Observe the results: Does your application degrade gracefully? Do your alerts trigger as expected? Does it recover when the experiment completes?
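To answer those questions with data rather than impressions, you can record the target pod's resource usage while the experiment runs. The sketch below is a hypothetical bash helper, not part of Harness: it calls `kubectl top pod` at a fixed interval and assumes the Kubernetes Metrics API is available in the cluster (GKE normally provides it). The pod and namespace names in the usage line are placeholders.

```shell
#!/usr/bin/env bash
# Hypothetical helper: sample a pod's CPU and memory usage at a fixed
# interval while a chaos experiment runs. Requires the Metrics API.
watch_pod_usage() {
  local pod="$1" ns="${2:-default}" samples="${3:-20}" interval="${4:-15}"
  local i
  for ((i = 1; i <= samples; i++)); do
    # --no-headers prints only the "NAME CPU(cores) MEMORY(bytes)" row
    printf '%s %s\n' "$(date +%H:%M:%S)" "$(kubectl top pod "$pod" -n "$ns" --no-headers)"
    # Skip the sleep after the final sample
    if ((i < samples)); then
      sleep "$interval"
    fi
  done
}

# Usage (placeholder names): 20 samples, 15 seconds apart
#   watch_pod_usage my-app-pod my-namespace 20 15 | tee cpu-hog-run.log
```

Comparing the log from before, during, and after the experiment shows whether usage returns to its baseline once the fault is removed.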

Start small, understand your system's behavior, then explore more complex scenarios.

You can configure Service Discovery to visualize your services in Application Maps, add probes to validate resilience during experiments, and progressively explore more sophisticated fault injection scenarios.

Check out the documentation for the complete setup guide and all supported experiments.

The goal of chaos engineering isn't to break things. It's to understand what breaks before it impacts your users.


An Introduction to Disaster Recovery Testing: What You Need to Know in 2026

Businesses today run on computers, cloud systems, and digital tools. One big failure can stop everything. A cyber attack, a power outage, or a software glitch can shut down operations for hours or days. Disaster recovery testing is how you prove you can restore critical services when the unexpected happens.

In 2026, with hybrid and multi-cloud estates, distributed data, and tighter oversight, this is not a once-a-year fire drill. It is a continuous discipline that validates plans, uncovers weak links before they cause outages, and gives leaders confidence that customer-facing and internal systems can bounce back on demand.

Disaster recovery testing is a simple way to practice getting your systems back online after something goes wrong. It checks if your backup plans actually work before a real problem hits. This blog gives you a clear, step-by-step look at what it is, why it is essential right now, and how to get started.

What Is Disaster Recovery Testing?

Disaster recovery testing is a structured way to confirm that systems, data, and services can be restored to meet defined recovery goals after a disruption. The mandate is simple: verify that recovery works as designed and within the time and data loss thresholds the business requires. Effective programs test more than technology. They exercise people, processes, communications, and third-party dependencies end to end. The goal is to prove you can bring back data, apps, and services quickly with little loss.

A strong disaster recovery test plan typically covers:

- Clear recovery time objectives (RTOs) and recovery point objectives (RPOs) for each application tier.

- A current asset and application inventory with criticality tiers and upstream/downstream dependencies.

- Documented runbooks and playbooks for failover and failback, including decision criteria.

- Data protection strategies such as backups, replication, and snapshots with defined retention and immutability.

- Communication plans for internal teams, executives, customers, and partners.

- Roles and responsibilities, escalation paths, and an incident command structure.

- Third-party and vendor recovery commitments, service level agreements, and contact procedures.

- Metrics, governance, and reporting for audits and continuous improvement.
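The RTO and RPO targets in the first item are what each test is ultimately scored against. As a minimal sketch, using a hypothetical bash helper with all values in seconds, a single test run can be checked against one tier's objectives like this:

```shell
#!/usr/bin/env bash
# Hypothetical helper: compare one DR test's measured results with its
# objectives. All values are in seconds. Prints PASS or FAIL and returns
# non-zero on FAIL so it can gate a pipeline step.
check_recovery_objectives() {
  local recovery_time="$1" rto="$2" data_loss_window="$3" rpo="$4"
  local status="PASS"
  # RTO: how long it took to restore service
  if ((recovery_time > rto)); then
    echo "RTO missed: recovered in ${recovery_time}s, target ${rto}s" >&2
    status="FAIL"
  fi
  # RPO: how much data was lost, measured as time since the last good copy
  if ((data_loss_window > rpo)); then
    echo "RPO missed: ${data_loss_window}s of data lost, target ${rpo}s" >&2
    status="FAIL"
  fi
  echo "$status"
  [[ $status == PASS ]]
}

# Example: a tier with a 15-minute RTO and a 5-minute RPO
#   check_recovery_objectives 780 900 120 300   # prints PASS
```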

Without regular tests, even the best plan stays unproven. Many companies learn this the hard way when an outage lasts longer than expected.

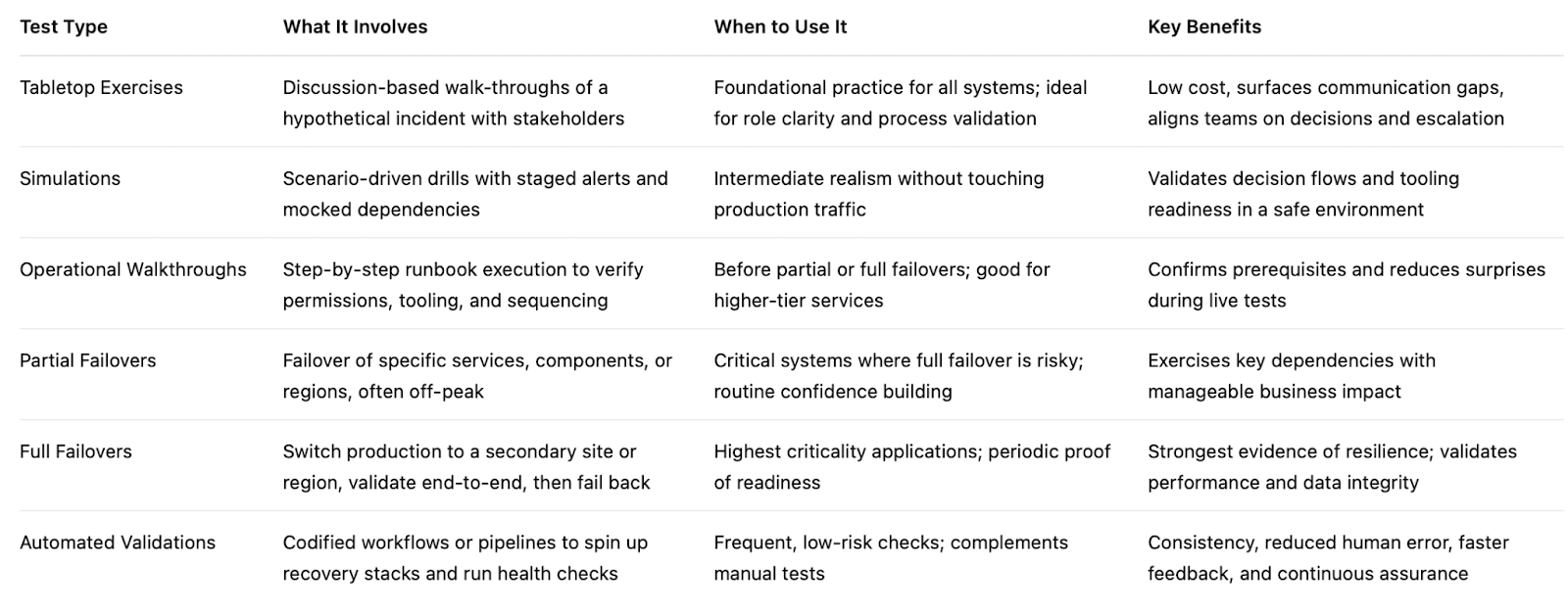

Types of Disaster Recovery Tests

Different systems require different levels of validation based on their criticality, risk, and business impact. A layered testing strategy helps teams build confidence gradually, starting with low-risk discussions and moving toward full-scale failovers.

By combining multiple types of tests, organizations can validate both technical recovery and team readiness without unnecessary disruption.

Tabletop Exercises:

Tabletop exercises are discussion-based sessions where stakeholders walk through a hypothetical disaster scenario step by step. These are typically the starting point for any disaster recovery program, as they help clarify roles, responsibilities, and decision-making processes. While they do not involve actual system changes, they are highly effective in identifying communication gaps and aligning teams on escalation paths.

Simulations:

Simulations introduce more realism by creating scenario-driven drills with staged alerts and mocked dependencies. Teams respond as if a real incident is happening, but without impacting production systems. This type of testing is useful for validating how teams react under pressure and ensuring that tools, alerts, and workflows function as expected in a controlled environment.

Operational Walkthroughs:

Operational walkthroughs involve executing recovery runbooks step by step to verify that all prerequisites, such as permissions, tooling, and sequencing, are in place. These tests are more hands-on than simulations and are often conducted before attempting partial or full failovers. They help reduce surprises by ensuring that recovery procedures are practical and executable.

Partial Failovers:

Partial failovers test the recovery of specific services, components, or regions, usually during off-peak hours. This approach allows teams to validate critical dependencies and recovery workflows without risking the entire system. It is especially useful for building confidence in complex environments where a full failover may be too risky or costly to perform frequently.

Full Failovers:

Full failovers are the most comprehensive form of disaster recovery testing, where production systems are completely switched to a secondary site or region. After validation, systems are failed back to the primary environment. These tests provide the strongest proof of resilience, as they validate end-to-end recovery, including performance and data integrity, but they require careful planning due to their potential impact.

Automated Validations:

Automated validations use codified workflows or pipelines to continuously test recovery processes. These tests can automatically spin up recovery environments, validate configurations, and run health checks. They are ideal for frequent, low-risk testing and help reduce human error while providing fast and consistent feedback. Over time, automation becomes a key driver for maintaining continuous assurance in disaster recovery readiness.
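A common building block for automated validation is a health check that is polled until the recovered service responds, with the elapsed time recorded for comparison against the RTO. The bash function below is a minimal, hypothetical sketch; the health check can be any command that exits 0 when the service is healthy, and the URL in the usage line is a placeholder.

```shell
#!/usr/bin/env bash
# Hypothetical helper: run a health check until it passes, then print the
# elapsed seconds. Returns non-zero if it never passes within the timeout.
time_until_healthy() {
  local timeout="$1" interval="$2"
  shift 2
  local start=$SECONDS
  # "$@" is the health check command; exit status 0 means healthy
  until "$@"; do
    if ((SECONDS - start >= timeout)); then
      echo "health check still failing after ${timeout}s" >&2
      return 1
    fi
    sleep "$interval"
  done
  echo $((SECONDS - start))
}

# Usage: poll every 10s for up to 10 minutes
#   time_until_healthy 600 10 curl -fsS -o /dev/null https://dr.example.com/healthz
```

Taking the check as a command rather than a hard-coded URL keeps the same loop usable for HTTP endpoints, database pings, or `kubectl` readiness checks.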

The following table outlines the primary types of disaster recovery testing and where they fit.

If you are building a disaster recovery testing checklist, include a mix of these test types and map each one to the systems it protects. Over time, increase the frequency of automated validations and reserve full failovers for the highest-value services.

Why Disaster Recovery Testing Matters in 2026

The world is more connected than ever. Companies rely on cloud services, remote teams, and AI tools. At the same time, threats keep growing. Cyber attacks like ransomware are more common. Natural events and supply chain problems add extra risk. Cloud systems can fail without warning.

Recent studies show the cost of downtime keeps rising. For many large companies, one hour of downtime can cost more than 300,000 dollars. Some industries see losses climb into the millions per hour. Smaller businesses lose thousands per minute in lost sales and unhappy customers.

In 2026, experts note that most organizations still test their recovery plans only once or twice a year. That is not enough. Systems change fast. New software updates, new cloud setups, and new team members can break old plans.

Regular testing gives you confidence. It cuts recovery time and protects revenue. It also helps satisfy regulators in banking, healthcare, and government, many of which require proof of preparedness.

How Modern Tools Make Disaster Recovery Testing Easier

Traditional testing took weeks of manual work. Today, platforms combine different testing methods in one place. This approach saves time and gives better results.

For example, Harness recently released its Resilience Testing module. It brings together chaos testing (to inject real-world failures safely), load testing (to check performance under stress), and disaster recovery testing. You can run everything inside your existing pipelines. This means you can test recovery steps automatically, validate failovers, and spot risks early.

Teams using this kind of integrated platform report faster recovery times and fewer surprises. It fits right into daily development work instead of feeling like an extra project.

The Role of AI in Disaster Recovery Testing

Artificial intelligence is making disaster recovery testing much smarter in 2026. It turns testing from a once-a-year chore into something fast, ongoing, and more accurate.

AI helps teams spot problems early by analyzing system data and predicting where failures might happen, allowing issues to be fixed before they cause real damage. It also enables continuous and automated testing, running scenarios in the background without interrupting normal business operations. Instead of manually creating test plans, AI can generate and recommend the most relevant scenarios based on your actual system setup, saving time and improving coverage.

Another major advantage is how quickly AI can analyze results. It processes test outcomes in real time and clearly points out what needs to be fixed, removing the guesswork. Over time, it learns from every test run and continuously improves your disaster recovery strategy, making it more reliable with each iteration.

Overall, AI helps teams recover faster and with fewer mistakes. Rather than relying on assumptions, teams get clear, data-driven insights to strengthen their systems. Tools like the Resilience Testing module from Harness already bring these capabilities into practice by combining chaos testing, load testing, and disaster recovery testing. With AI built into the platform, it can recommend the right tests, automate execution, and provide simple, actionable steps to improve system resilience.

Conclusion

Disaster recovery testing is not a one-time task. It is an ongoing habit that protects your business in 2026 and beyond. The companies that test regularly recover faster, lose less money, and keep customer trust.

Take a moment now to review your current plan. Pick one critical system and schedule a simple test this quarter. If you want a modern way to make the process simple and powerful, look at solutions like the Resilience Testing module from Harness. It helps you combine multiple testing types and use AI so you stay ready no matter what comes next.

Your business depends on technology. Make sure that technology can bounce back when it counts. Start testing today and build the confidence your team needs for whatever 2026 brings.

Why DR Testing Can No Longer Be an Afterthought

Resilience Is Not a Feature — It Is a Business Imperative

In today's digital economy, every organisation's revenue, reputation, and customer trust are inextricably linked to the uptime of its cloud-based services. From banking and payments to logistics and healthcare, a cloud outage is no longer just an IT problem; it is a business crisis. Despite this reality, Disaster Recovery (DR) testing remains one of the most neglected disciplines in enterprise technology operations.

Most organisations have a DR plan. Far fewer test it regularly. And even fewer have the tools to simulate realistic failure scenarios with the confidence needed to validate that their recovery objectives — Recovery Time Objectives (RTO) and Recovery Point Objectives (RPO) — are actually achievable when it matters most.

A DR plan that has never been tested is not a plan — it is a hypothesis. And in the event of a real disaster, a hypothesis is not good enough.

The question is no longer whether disasters will happen to cloud infrastructure. The question is whether your organisation is prepared to survive them — and emerge with your business services intact.

A New Era of Risk: When War Comes to the Cloud

March 1, 2026 — A Watershed Moment for the Cloud Industry

On March 1, 2026, something unprecedented happened: physical warfare directly struck hyperscale cloud infrastructure. Drone strikes — part of Iran's retaliatory campaign following the joint U.S.-Israeli Operation Epic Fury — hit three Amazon Web Services (AWS) data centers in the United Arab Emirates and Bahrain. It marked, according to the Uptime Institute, the first confirmed military attack on a hyperscale cloud provider in history.

AWS confirmed that two facilities in the UAE were directly struck in the ME-CENTRAL-1 region, while a third in Bahrain sustained damage from a nearby strike. The attacks caused structural damage, disrupted power delivery, and triggered fire suppression systems that produced additional water damage to critical equipment. Two of the three availability zones in the UAE region were knocked offline simultaneously — a scenario that defeated standard redundancy models designed for hardware failures and natural disasters, not military strikes.

"Teams are working around the clock on availability." — AWS CEO Matt Garman, speaking to CNBC on the drone strike impacts.

The Ripple Effect: From Data Centers to Digital Services

The cascading business impact was immediate and wide-ranging. Ride-hailing and delivery platform Careem went dark. Payments companies Alaan and Hubpay reported their apps going offline. UAE banking giants — Emirates NBD, First Abu Dhabi Bank, and Abu Dhabi Commercial Bank — reported service disruptions to customers. Enterprise data company Snowflake attributed elevated error rates in the region directly to the AWS outage. Investing platform Sarwa was also impacted.

AWS subsequently urged all affected customers to activate their disaster recovery plans and migrate workloads to other AWS regions. For many organisations, that recommendation revealed an uncomfortable truth: they had workloads running in a conflict zone without knowing it, and they had DR plans that had never been meaningfully tested.

The event was not merely a localised incident. It sent shockwaves through global financial markets, triggered fresh concerns about cloud infrastructure security, and forced technology and business leaders worldwide to confront a question they had been deferring: are we actually prepared for a regional cloud failure?

The Uncomfortable Truth About Cloud Dependency

AWS is widely regarded as one of the world's most reliable cloud platforms. With a global network of regions, availability zones, and decades of engineering investment in fault tolerance, it represents the gold standard of cloud infrastructure. And yet, disasters still happen.

The Middle East drone strikes illustrate a new class of risk that sits entirely outside the traditional taxonomy of cloud failure modes. Hardware faults, software bugs, network misconfigurations, and even natural disasters are all scenarios that cloud providers engineer against. But a sustained, multi-facility military attack that simultaneously disables multiple availability zones in a region is a different beast entirely.

Even the most reliable cloud provider cannot guarantee immunity from geopolitical events, physical infrastructure attacks, or large-scale regional disruptions. DR planning must account for the full spectrum of failure scenarios.

For enterprises that depended on AWS's Middle East regions — whether knowingly for local operations or unknowingly through traffic routing — the incident transformed abstract geopolitical risk into an immediate operational reality. Financial institutions could not process transactions. Customers could not access banking apps. Businesses that had single-region deployments had no failover path.

The lesson is not to distrust AWS or any cloud provider. It is to accept that no infrastructure, however well-engineered, is beyond the reach of catastrophic failure. Disaster Recovery planning is not a reflection of distrust in your cloud provider — it is a reflection of maturity in your own risk management.

And if DR planning is the strategy, DR testing is the discipline that gives you confidence the strategy will actually work.

The Case for Regular, Rigorous DR Testing

Disaster recovery has historically been treated as a compliance checkbox. Organisations document a DR plan, conduct an annual tabletop exercise, and file it away until the next audit. The problem with this approach is that it bears no resemblance to the actual experience of a regional cloud failure.

Real DR scenarios involve cascading failures, unexpected dependencies, human coordination under pressure, and recovery steps that take far longer in practice than on paper. RTO targets that look achievable in a spreadsheet often prove wildly optimistic when an engineering team is scrambling to restore services during an actual outage.

Effective DR testing requires three things that most organisations lack:

- Realistic failure simulation: The ability to actually replicate the conditions of a regional cloud outage, not just talk through what might happen.

- End-to-end recovery validation: A structured workflow that tests not just failover, but the complete path from disaster simulation through recovery confirmation.

- Repeatable, frequent execution: DR tests should not be annual events. In a world where geopolitical risk is rising and infrastructure attacks are a documented reality, quarterly or even monthly DR validation is increasingly necessary.

However, there is a fundamental challenge that has historically limited the frequency and quality of DR testing: creating a realistic disaster scenario — such as a full region failure — in a production cloud environment is extremely complex, risky, and operationally demanding. Getting it wrong can itself cause the very outage you are preparing for.

This is precisely where purpose-built DR testing tooling becomes essential.

Enter Harness Resilience Testing: DR Testing Without the Drama

Harness has long been a leader in the chaos engineering and software delivery space. With the evolution of its platform to Harness Resilience Testing, the company has now brought together chaos engineering, load testing, and disaster recovery testing under a single, unified module — purpose-built for the kind of comprehensive resilience validation that modern organisations need.

Simulating Region Failure — Safely and Repeatably

One of the most powerful capabilities within Harness Resilience Testing is the ability to simulate an AWS region failure. Rather than requiring engineering teams to manually orchestrate complex failure conditions — or worse, waiting for a real disaster to find out what happens — Harness provides a controlled simulation environment that replicates the conditions of a full regional outage.

This means organisations can observe exactly how their systems behave when, for example, the AWS ME-CENTRAL-1 region goes offline. Which services fail? How quickly do failover mechanisms activate? Are there hidden dependencies that were not accounted for in the DR plan? Does the recovery path actually meet the RTO and RPO targets?

Harness Resilience Testing enables organisations to simulate AWS region failure scenarios in multiple ways, such as an AZ blackhole, bulk node shutdowns, or coordinated VPC misconfigurations, giving engineering teams the ability to experience and validate their DR response before a real disaster strikes.

End-to-End DR Test Workflow: From Disaster to Recovery

What distinguishes Harness Resilience Testing from point solutions is its comprehensive, end-to-end DR Test workflow. The platform does not just simulate failure — it orchestrates the entire DR testing lifecycle:

- Disaster Simulation: Harness injects failure conditions that replicate real-world scenarios — including region-level AWS outages — in a controlled, configurable manner.

- Recovery Validation: The platform then validates that recovery procedures execute correctly, services restore within defined objectives, and the system reaches a healthy state.

- Observability and Reporting: Harness captures detailed metrics, failure indicators, and recovery timelines — giving teams the data they need to identify gaps and continuously improve their DR posture.

This end-to-end approach transforms DR testing from a manually intensive, high-risk activity into a structured, repeatable, and automatable workflow — one that can be run as frequently as the business requires.
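The three stages can be sketched as a small driver script. This is a hypothetical bash illustration of the simulate, validate, and report sequence, not how Harness implements its DR Test workflow. The simulate and validate commands are placeholders you would supply, and elapsed time is measured from the start of the simulation, which is one of several reasonable definitions.

```shell
#!/usr/bin/env bash
# Hypothetical sketch of a simulate -> validate -> report DR drill.
# $3 and $4 name placeholder commands for fault injection and recovery checks.
run_dr_drill() {
  local name="$1" rto="$2" simulate="$3" validate="$4"
  local started elapsed result
  started=$(date +%s)

  # 1. Disaster simulation
  if ! "$simulate"; then
    echo "simulation step failed for ${name}" >&2
    return 1
  fi

  # 2. Recovery validation: the validate step should block until healthy or fail
  if "$validate"; then
    elapsed=$(($(date +%s) - started))
    if ((elapsed <= rto)); then result="pass"; else result="rto_missed"; fi
  else
    elapsed=-1
    result="not_recovered"
  fi

  # 3. Reporting: one JSON line per drill, easy to collect and trend over time
  printf '{"drill":"%s","rto_s":%d,"elapsed_s":%d,"result":"%s"}\n' \
    "$name" "$rto" "$elapsed" "$result"
}

# Usage (inject_region_fault and wait_for_failover are hypothetical commands):
#   run_dr_drill me-central-1-failover 900 inject_region_fault wait_for_failover >> dr-results.jsonl
```

Appending each result line to a file gives a simple history for spotting regressions between drills.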

Harness Resilience Testing provides DR workflows for region failures

The Harness Resilience Testing module provides the chaos steps that can be pulled into a DR Test workflow to introduce a region failure.


See the Harness DR test documentation to learn how to get started with DR Test workflows.

Conclusion: Make DR Testing a Continuous Practice, Not an Annual Event

The drone strikes on AWS data centers in the Middle East on March 1, 2026 were a stark reminder that the risks facing cloud infrastructure are no longer theoretical. Geopolitical events, physical attacks, and unprecedented failure scenarios are now part of the operational reality that technology leaders must plan for — and test against.

AWS remains one of the most reliable, battle-tested cloud platforms on the planet. But reliability does not mean immunity. Even the best-engineered infrastructure can be overwhelmed by events outside its design parameters. That is not a weakness of AWS — it is a fundamental truth about the physical world in which all digital infrastructure ultimately exists.

Organisations that depend on AWS — for regional workloads, global operations, or anywhere in between — need to take a hard look at their DR readiness. Not just whether they have a plan, but whether that plan has been tested, validated, and proven to work under realistic failure conditions.

Harness Resilience Testing makes it straightforward to simulate AWS region failures and execute comprehensive end-to-end DR tests — enabling organisations to validate their recovery posture with confidence, at a frequency that matches the pace of modern risk.

With Harness, DR testing for AWS region failures is no longer a complex, resource-intensive undertaking reserved for annual compliance exercises. It becomes an efficient, repeatable, and continuously improving practice — one that can be integrated into regular engineering workflows and scaled to meet the demands of an increasingly unpredictable world.

The organisations that will emerge strongest from the next regional cloud disaster are not the ones with the best DR documents. They are the ones that have already run the test — and know exactly what to do when the alert fires.

With Harness Resilience Testing, that organisation can be yours. Book a demo with our team to explore more.

.png)

.png)

An Introduction to Disaster Recovery Testing: What You Need to Know in 2026

Businesses today run on computers, cloud systems, and digital tools. One big failure can stop everything. A cyber attack, a power outage, or a software glitch can shut down operations for hours or days. Disaster recovery testing is how you prove you can restore critical services when the unexpected happens.

In 2026, with hybrid and multi-cloud estates, distributed data, and tighter oversight, this is not a once-a-year fire drill. It is a continuous discipline that validates plans, uncovers weak links before they cause outages, and gives leaders confidence that customer-facing and internal systems can bounce back on demand.

Disaster recovery testing is a simple way to practice getting your systems back online after something goes wrong. It checks if your backup plans actually work before a real problem hits. This blog gives you a clear, step-by-step look at what it is, why it is essential right now, and how to get started.

What Is Disaster Recovery Testing?

Disaster recovery testing is a structured way to confirm that systems, data, and services can be restored to meet defined recovery goals after a disruption. The mandate is simple: verify that recovery works as designed and within the time and data loss thresholds the business requires. Effective programs test more than technology. They exercise people, processes, communications, and third-party dependencies end to end. The goal is to prove you can bring back data, apps, and services quickly with little loss.

A strong disaster recovery test plan typically covers:

- Clear recovery time objectives (RTOs) and recovery point objectives (RPOs) for each application tier.

- A current asset and application inventory with criticality tiers and upstream/downstream dependencies.

- Documented runbooks and playbooks for failover and failback, including decision criteria.

- Data protection strategies such as backups, replication, and snapshots with defined retention and immutability.

- Communication plans for internal teams, executives, customers, and partners.

- Roles and responsibilities, escalation paths, and an incident command structure.

- Third-party and vendor recovery commitments, service level agreements, and contact procedures.

- Metrics, governance, and reporting for audits and continuous improvement.

Without regular tests, even the best plan stays unproven. Many companies learn this the hard way when an outage lasts longer than expected.

Types of Disaster Recovery Tests

Different systems require different levels of validation based on their criticality, risk, and business impact. A layered testing strategy helps teams build confidence gradually starting with low-risk discussions and moving toward full-scale failovers.

By combining multiple types of tests, organizations can validate both technical recovery and team readiness without unnecessary disruption.

Tabletop Exercises:

Tabletop exercises are discussion-based sessions where stakeholders walk through a hypothetical disaster scenario step by step. These are typically the starting point for any disaster recovery program, as they help clarify roles, responsibilities, and decision-making processes. While they do not involve actual system changes, they are highly effective in identifying communication gaps and aligning teams on escalation paths.

Simulations:

Simulations introduce more realism by creating scenario-driven drills with staged alerts and mocked dependencies. Teams respond as if a real incident is happening, but without impacting production systems. This type of testing is useful for validating how teams react under pressure and ensuring that tools, alerts, and workflows function as expected in a controlled environment.

Operational Walkthroughs:

Operational walkthroughs involve executing recovery runbooks step by step to verify that all prerequisites such as permissions, tooling, and sequencing are in place. These tests are more hands-on than simulations and are often conducted before attempting partial or full failovers. They help reduce surprises by ensuring that recovery procedures are practical and executable.

Partial Failovers:

Partial failovers test the recovery of specific services, components, or regions, usually during off-peak hours. This approach allows teams to validate critical dependencies and recovery workflows without risking the entire system. It is especially useful for building confidence in complex environments where a full failover may be too risky or costly to perform frequently.

Full Failovers:

Full failovers are the most comprehensive form of disaster recovery testing, where production systems are completely switched to a secondary site or region. After validation, systems are failed back to the primary environment. These tests provide the strongest proof of resilience, as they validate end-to-end recovery, including performance and data integrity, but they require careful planning due to their potential impact.

Automated Validations:

Automated validations use codified workflows or pipelines to continuously test recovery processes. These tests can automatically spin up recovery environments, validate configurations, and run health checks. They are ideal for frequent, low-risk testing and help reduce human error while providing fast and consistent feedback. Over time, automation becomes a key driver for maintaining continuous assurance in disaster recovery readiness.
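The sketch below shows what one automated check might look like. It assumes your pipeline already records three timestamps per drill: the newest write confirmed at the recovery site, the moment the primary was taken down, and the moment the recovery environment passed its health checks. It compares the measured data-loss window and recovery time against recovery point and recovery time objectives (RPO and RTO). The field names and targets are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class DrillTimeline:
    last_replicated_write: datetime  # newest write confirmed at the recovery site
    failure_injected: datetime       # primary taken down or region isolated
    service_healthy: datetime        # recovery environment passed health checks


def validate_drill(t: DrillTimeline, rpo: timedelta, rto: timedelta) -> dict:
    """Compare a drill's measured recovery point and recovery time against objectives."""
    data_loss_window = t.failure_injected - t.last_replicated_write
    recovery_time = t.service_healthy - t.failure_injected
    return {
        "data_loss_window": data_loss_window,
        "recovery_time": recovery_time,
        "rpo_met": data_loss_window <= rpo,
        "rto_met": recovery_time <= rto,
    }


# Hypothetical drill: 30 s of unreplicated writes, healthy again after 12 minutes.
start = datetime(2026, 1, 10, 2, 0, 0)
drill = DrillTimeline(start - timedelta(seconds=30), start, start + timedelta(minutes=12))
result = validate_drill(drill, rpo=timedelta(minutes=1), rto=timedelta(minutes=15))
```

If every run stores `result`, recovery-time trends become visible across drills, not just pass/fail outcomes.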

Here’s a table outlining the primary types of disaster recovery testing and where they fit.

If you are building a disaster recovery testing checklist, include a mix of these types of disaster recovery testing and map each to the systems they protect. Over time, increase the frequency of automated validations and reserve full failovers for the highest-value services.

Why Disaster Recovery Testing Matters More in 2026

The world is more connected than ever. Companies rely on cloud services, remote teams, and AI tools. At the same time, threats keep growing. Cyber attacks like ransomware are more common. Natural events and supply chain problems add extra risk. Cloud systems can fail without warning.

Recent studies show that the cost of downtime keeps rising. For many large companies, one hour of downtime can cost more than $300,000. Some industries see losses climb into the millions of dollars per hour. Smaller businesses can lose thousands of dollars per minute through lost sales and unhappy customers.

In 2026, experts note that most organizations still test their recovery plans only once or twice a year. That is not enough. Systems change fast. New software updates, new cloud setups, and new team members can break old plans.

Regular testing gives you confidence. It cuts recovery time and protects revenue. It also helps you meet the requirements of banks, healthcare organizations, and government agencies that demand proof of preparedness.

How Modern Tools Make Disaster Recovery Testing Easier

Traditional testing took weeks of manual work. Today, platforms combine different testing methods in one place. This approach saves time and gives better results.

For example, Harness recently released its Resilience Testing module. It brings together chaos testing (to inject real-world failures safely), load testing (to check performance under stress), and disaster recovery testing. You run everything inside your existing pipelines. This means you can test recovery steps automatically, validate failovers, and spot risks early.

Teams using this kind of integrated platform report faster recovery times and fewer surprises. It fits right into daily development work instead of feeling like an extra project.

The Role of AI in Disaster Recovery Testing

Artificial intelligence is making disaster recovery testing much smarter in 2026. It turns testing from a once-a-year chore into something fast, ongoing, and more accurate.

AI helps teams spot problems early by analyzing system data and predicting where failures might happen, allowing issues to be fixed before they cause real damage. It also enables continuous and automated testing, running scenarios in the background without interrupting normal business operations. Instead of manually creating test plans, AI can generate and recommend the most relevant scenarios based on your actual system setup, saving time and improving coverage.

Another major advantage is how quickly AI can analyze results. It processes test outcomes in real time and clearly points out what needs to be fixed, removing the guesswork. Over time, it learns from every test run and continuously improves your disaster recovery strategy, making it more reliable with each iteration.

Overall, AI helps teams recover faster and with fewer mistakes. Rather than relying on assumptions, teams get clear, data-driven insights to strengthen their systems. Tools like the Resilience Testing module from Harness already bring these capabilities into practice by combining chaos testing, load testing, and disaster recovery testing. With AI built into the platform, it can recommend the right tests, automate execution, and provide simple, actionable steps to improve system resilience.

Conclusion

Disaster recovery testing is not a one-time task. It is an ongoing habit that protects your business in 2026 and beyond. The companies that test regularly recover faster, lose less money, and keep customer trust.

Take a moment now to review your current plan. Pick one critical system and schedule a simple test this quarter. If you want a modern way to make the process simple and powerful, look at solutions like the Resilience Testing module from Harness. It helps you combine multiple testing types and use AI so you stay ready no matter what comes next.

Your business depends on technology. Make sure that technology can bounce back when it counts. Start testing today and build the confidence your team needs for whatever 2026 brings.

From Chaos to Confidence: Debunking the 3 Biggest Myths of Chaos Engineering

Many organizations hesitate to adopt chaos engineering because of persistent misconceptions that make it seem reckless or reserved for tech giants.

But the reality is far more practical and far more accessible.

Drawing from experience building the chaos engineering program at Target.com, Matt Schillerstrom breaks down the three biggest myths holding teams back and what is actually true.

Myth 1: Chaos Engineering Means Random Failure

The fear is understandable. Engineers unplugging servers, triggering outages, and hoping for the best.

The Reality: Chaos engineering is not random. It is disciplined, which helps teams build trust and confidence in their systems.

It is built on hypothesis-driven experimentation. Every test starts with a clear expectation: what should happen if this component fails?

Instead of breaking things randomly, teams run controlled experiments. For example, a team might stop one out of ten servers to observe how the system adapts. These scenarios are planned, reviewed, and executed with intention.

At Target, Matt saw engineering teams learn something before a test even ran, simply by getting the whole team aligned on the experiment's hypothesis. That alignment required teams to review their architecture diagrams, documentation, and runbooks, which often revealed issues before the test started.

The goal is not disruption. The goal is learning.

Today, teams are taking this further with AI, automatically identifying resilience risks and generating experiments before issues reach production.

Read how this works in practice: AI-Powered Resilience Testing with Harness MCP Server and Windsurf

Myth 2: It’s Only for FAANG Companies

Chaos engineering is often associated with Netflix, Google, and other hyperscalers. That makes it feel out of reach.

The Reality: You do not need massive scale to get meaningful value.

You can start small today.

A simple experiment, such as increasing memory utilization on a single service, can reveal whether your auto-scaling actually works. These small tests confirm that your resilience mechanisms function when issues happen, before your customers feel the impact.
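It helps to write down what the autoscaler should do before you run such an experiment. The Kubernetes HorizontalPodAutoscaler documents its core rule as desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue), and it skips scaling when the ratio is within a tolerance (0.1 by default). The sketch below reproduces only that rule plus min/max clamping. It ignores stabilization windows, pod readiness, and scaling policies, and the utilization numbers are hypothetical.

```python
import math


def expected_replicas(current_replicas: int, current_util: float, target_util: float,
                      min_replicas: int = 1, max_replicas: int = 10,
                      tolerance: float = 0.1) -> int:
    """Simplified HPA core rule: scale in proportion to metric / target."""
    ratio = current_util / target_util
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # within tolerance: replicas stay unchanged
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


# Hypothesis: pushing memory to 90% against a 60% target on 3 pods should yield 5 pods.
print(expected_replicas(3, current_util=90, target_util=60))  # 5
```

If the experiment shows the service stuck at 3 replicas, the likely culprits are a missing memory metric, a low `maxReplicas`, or pods that cannot be scheduled. All of these are far cheaper to discover in a test than during an incident.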

What matters is not scale. What matters is consistency and learning how your system behaves under stress.

Myth 3: Chaos Engineering Replaces Traditional Testing

Some teams worry that adopting chaos engineering means replacing QA or existing testing workflows.

The Reality: Chaos engineering strengthens what you already do.

At Target, chaos experiments were layered into monthly load testing. While simulating peak traffic, failure scenarios such as payment authorization latency were introduced to observe system behavior under real pressure.

This approach does not replace testing. It makes it more realistic and more valuable.

Build Confidence, Not Chaos

Chaos engineering is not about breaking systems. It is about understanding them.

When teams move from ad hoc testing to small, continuous, hypothesis-driven experiments, they gain something far more valuable than test results. They gain confidence.

Confidence that their systems will behave as expected.

Confidence that failures will not become outages.

Confidence that they are ready for the unexpected.

See It in Action

If you are thinking about chaos engineering, the best way to understand it is to start.

Harness helps teams safely design, run, and learn from controlled chaos experiments without putting production at risk.

Want to try your first chaos engineering test? Sign up for your free Resilience Testing account today. Prefer a hands-on demo with an expert? Click here for a personalized demo.

Load Testing: An Essential Guide for 2026

In today's always-on digital economy, a single slow page or unexpected crash during peak traffic can cost businesses thousands or even millions of dollars in lost revenue, damaged reputation, and frustrated customers. Imagine Black Friday shoppers abandoning carts because your e-commerce site buckles under load, or a SaaS platform going down during a major product launch. This is where load testing becomes non-negotiable.

Load testing simulates real-world user traffic to ensure your applications, websites, and APIs stay fast, stable, and scalable. It's a cornerstone of performance testing that helps teams catch bottlenecks early, validate SLAs, and build resilient systems.

If you're looking for a complete load testing guide, an explanation of what load testing is, or instructions for how to perform it, you're in the right place. This beginner-friendly introduction covers everything from the basics to best practices, with practical steps anyone can follow.

What Is Load Testing?

Load testing is a type of performance testing that evaluates how your system behaves under expected (and sometimes peak) user loads. It simulates concurrent users, requests, or transactions to measure key metrics such as response times (average, p95, p99), throughput (requests per second), error rates, resource utilization (CPU, memory, database connections), latency, and scalability.

Unlike unit or functional tests that check "does it work?", load testing answers: "How does it perform when 1,000 (or 100,000) people use it at once?"

Done early and often, load testing reduces risk across the lifecycle. It confirms capacity assumptions, reveals infrastructure limits, and proves that recent changes haven’t slowed critical paths. The result is fewer production incidents and fewer late-night fire drills.

Key terminology to anchor your approach:

- Response time: End-to-end time to complete a request.

- Latency: The network delay portion of response time.

- Throughput: Requests or transactions per second.

- Concurrency: The number of simultaneous users or sessions.

- Virtual users: Emulated users that generate traffic.

- Think time: Pauses between actions to mimic real behavior.

- Error rate: Percentage of requests that fail.

- Saturation point: Load level where performance drops sharply.

- Service-level objective (SLO): A target like p95 response time under 500 ms.
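To make these terms concrete, here is a minimal sketch that turns raw request samples into the headline numbers and checks them against an SLO like the one above. It uses the nearest-rank percentile definition. Load testing tools may interpolate instead, so their p95 and p99 values can differ slightly. The `Sample` type and the data are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Sample:
    latency_ms: float  # end-to-end response time of one request
    ok: bool           # False if the request errored or timed out


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def summarize(samples, duration_s, slo_p95_ms=500.0):
    latencies = [s.latency_ms for s in samples]
    failures = sum(1 for s in samples if not s.ok)
    p95 = percentile(latencies, 95)
    return {
        "avg_ms": sum(latencies) / len(latencies),
        "p95_ms": p95,
        "p99_ms": percentile(latencies, 99),
        "throughput_rps": len(samples) / duration_s,
        "error_rate": failures / len(samples),
        "slo_met": p95 < slo_p95_ms,  # e.g. "p95 response time under 500 ms"
    }


# Hypothetical run: 100 requests over 10 s with latencies 1..100 ms, two of them failed.
samples = [Sample(latency_ms=float(ms), ok=ms not in (42, 77)) for ms in range(1, 101)]
report = summarize(samples, duration_s=10)
print(report)  # p95_ms=95.0, p99_ms=99.0, throughput_rps=10.0, error_rate=0.02, slo_met=True
```

The average here is 50.5 ms, while p99 is nearly twice that. This gap is why SLOs are usually written against percentiles rather than averages.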

Why Load Testing Matters

Effective load testing quantifies capacity, validates autoscaling, and uncovers issues like thread pool starvation, database contention, cache thrash, and third-party limits. With data in hand, you can tune connection pools, garbage collection, caching tiers, and CDN strategies so the app stays fast when it counts.

Skipping load testing is like launching a rocket without wind-tunnel tests: risky and expensive. Here's why it's essential:

- Prevents costly downtime: Unplanned outages average $5,600–$14,000+ per minute for enterprises, with some large companies facing $1M+ per hour.

- Improves user experience and conversions: Even 100 ms of added latency can reduce sales by 1%, and a one-second delay can cut conversions by 7%.

- Validates scalability and auto-scaling: Especially critical for cloud-native apps on Kubernetes or AWS.

- Saves money long-term: Catch issues in staging instead of production; reduce infrastructure over-provisioning.

- Boosts confidence for high-traffic events: Product launches, sales, seasonal peaks.

Investing in load testing upfront keeps teams focused on building, not firefighting. Many high-profile outages (think major retailers or banking apps) trace back to untested load scenarios. Load testing helps you ship with confidence.

Types of Load Testing

Not all traffic patterns are the same, and your system shouldn’t be tested with a one-size-fits-all approach. Different load testing scenarios help you understand how your application behaves under various real-world conditions, from everyday usage to extreme, unpredictable events.

- Baseline testing - This scenario tests your system under normal, expected load conditions. It helps establish a performance benchmark so you can compare how the system behaves under higher stress levels later.

- Ramp-up / Steady-state testing - In this approach, the load is gradually increased until it reaches a peak and is then maintained for a period of time. This helps you observe how your system handles growth in traffic and whether it can sustain peak load consistently.

- Stress testing - In this type of testing, you push a system beyond its limits to see what happens when it starts to fail. In simple terms, you keep increasing users or traffic until the system can’t handle it anymore.

- Spike testing - Spike testing simulates sudden and extreme increases in traffic over a short period. It is useful for understanding how your system reacts to unexpected surges, such as flash sales or viral events.

- Soak / Endurance testing - This type of testing runs the system under a steady load for an extended duration. It helps uncover issues like memory leaks, resource exhaustion, or performance degradation over time.

- Scalability testing - Validates scale-up and scale-out plans. Confirms that larger or additional instances deliver predictable gains and that autoscaling triggers map to realistic signals.

- Combined testing (Load + Chaos) - Here, load testing is combined with chaos engineering practices, such as injecting failures like network latency or pod crashes during high traffic. This helps evaluate how resilient your system is under both stress and failure conditions.
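Most of these scenarios differ mainly in the shape of the load over time. The sketch below expresses them as stages, where each stage is a duration and the number of virtual users to reach by its end, with linear ramping in between. The idea is similar in spirit to the stage-based configuration that tools such as k6 use, but this code is a standalone illustration, and every duration and user count is a hypothetical placeholder. Combined load and chaos testing reuses one of these shapes and layers fault injection on top.

```python
# Each stage: (duration in seconds, target virtual users at the end of the stage).
# Hypothetical plans; derive real numbers from your production traffic data.
PROFILES = {
    "baseline": [(60, 100), (600, 100)],
    "ramp_steady": [(300, 500), (1800, 500), (120, 0)],
    "stress": [(300, 500), (300, 1000), (300, 1500), (300, 2000)],
    "spike": [(300, 100), (10, 2000), (120, 2000), (10, 100), (300, 100)],
    "soak": [(300, 400), (8 * 3600, 400), (300, 0)],
}


def users_at(stages, t):
    """Virtual users at time t (seconds), ramping linearly from 0 through each stage."""
    start_users, elapsed = 0, 0.0
    for duration, target in stages:
        if t < elapsed + duration:
            fraction = (t - elapsed) / duration
            return round(start_users + (target - start_users) * fraction)
        start_users, elapsed = target, elapsed + duration
    return 0  # past the final stage: the test has ended


print([users_at(PROFILES["spike"], t) for t in (0, 300, 305, 310, 430, 440)])
# [0, 100, 1050, 2000, 2000, 100]
```

Keeping profiles as data rather than code makes it easy to run the same scripts under several traffic shapes and to compare the results side by side.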

How to Perform Load Testing

Load testing isn’t just about throwing traffic at your system; it’s about understanding how your application behaves under real-world conditions and uncovering hidden bottlenecks before your users do.

Here's a step-by-step guide to load testing:

- Define objectives and scope - Start by clearly defining what success looks like for your system. Identify your SLAs, expected concurrent users, peak traffic events, and the most critical user journeys like login, checkout, or key API calls.

- Identify test scenarios - Use real production data such as analytics and logs to design realistic test scenarios. Make sure to include both common user flows (happy paths) and edge cases that could potentially break the system.

- Set up test environment - Create a test environment that closely mirrors production in terms of infrastructure, data volume, and network conditions.

- Create scripts - Develop scripts that simulate real user behavior using load testing tools. Incorporate think times, random delays, and varied actions to mimic how actual users interact with your system.

- Configure load profile - Define how the load will be applied: gradually ramp up users, maintain a steady load, and then ramp down. This helps you observe how the system behaves under increasing and sustained pressure.

- Execute the test - Run your tests in a controlled, non-production environment first to avoid impacting real users. Monitor system performance in real-time to catch any immediate issues.

- Analyze results - Dive into the results using logs, dashboards, and APM tools to identify performance bottlenecks. Focus on metrics like response time, error rates, and system throughput.

- Iterate and report - Fix the issues identified during testing and re-run tests to validate improvements. Document your findings and share insights with stakeholders for better decision-making.

- Integrate into CI/CD pipelines - Automate load testing by integrating it into your CI/CD pipelines. This ensures performance is continuously validated with every build or deployment.
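In a pipeline, the final step usually becomes a gate. The gate compares this build's load test summary with a stored baseline and fails the stage on a meaningful regression. This is a minimal sketch that assumes your load tool's report has already been reduced to a p95 and an error rate. The 10% regression budget and 1% error ceiling are hypothetical thresholds, not recommendations.

```python
def regression_gate(baseline, current, max_p95_regression=0.10, max_error_rate=0.01):
    """Return a list of failure messages; an empty list means the build may proceed."""
    failures = []
    allowed_p95 = baseline["p95_ms"] * (1 + max_p95_regression)
    if current["p95_ms"] > allowed_p95:
        failures.append(
            f"p95 {current['p95_ms']:.0f} ms exceeds {allowed_p95:.0f} ms "
            f"(baseline + {max_p95_regression:.0%})"
        )
    if current["error_rate"] > max_error_rate:
        failures.append(
            f"error rate {current['error_rate']:.2%} exceeds {max_error_rate:.2%}"
        )
    return failures


# Hypothetical summaries from a stored baseline run and this build's run.
baseline_run = {"p95_ms": 320.0, "error_rate": 0.002}
this_build = {"p95_ms": 365.0, "error_rate": 0.004}
for message in regression_gate(baseline_run, this_build):
    print("FAIL:", message)
```

In a CI step, you would end the script with `sys.exit(1 if failures else 0)` so that a non-zero exit code fails the stage. Comparing against a baseline, not just a fixed SLO, catches gradual slowdowns long before they breach the SLO.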

Load testing is an iterative process, not a one-time activity. The more consistently you test and refine, the more resilient and reliable your system becomes over time.

The Role of AI in Load Testing

Moving into 2026 and beyond, AI is shifting load testing from a manual, scheduled chore into an intelligent, autonomous process. Instead of relying on static scripts, AI agents now ingest vast streams of real-world data, including recent incident reports, deployment logs, and even design changes documented in wikis, to generate context-sensitive testing scenarios. This ensures that performance suites are no longer generic; they are hyper-targeted to the specific risks introduced by the latest code commits or environmental shifts, allowing teams to catch bottlenecks before they ever reach production.

The relationship between testing and infrastructure has also become a two-way street. Beyond just identifying breaking points, AI-driven analysis of load test results now provides proactive recommendations for deployment configurations. By correlating performance metrics with resource allocation, these systems can suggest the "golden path" for auto-scaling thresholds, memory limits, and container orchestration. This creates a continuous feedback loop where the load test doesn't just pass or fail; it actively optimizes the production environment for peak efficiency.

Load Testing for AI Agents

As AI agents proliferate, load testing is no longer just about hitting a server with traffic; it's about managing the explosion of agentic orchestration. With organizations deploying hundreds of specialized AI agents, a single user request can trigger a "storm" of inter-agent communication, where one agent's output becomes another's prompt. Traditional load tests fail here because they can't predict these emergent behaviors or the cascading latency that occurs when multiple agents reason, call external APIs, and update shared memory simultaneously. Testing must now account for "prompt bloat" and context contamination, where excessive or conflicting data fed into these agent chains causes performance to degrade or costs to spike unexpectedly.

To survive this complexity, performance engineering in 2026 has shifted toward dynamic environment testing and automated "prompt volume" estimation. Load testers are now using tools like AI Gateways to monitor and rate-limit the massive volume of prompts moving between agents, ensuring that "reasoning loops" don't turn into infinite, resource-draining cycles. By simulating thousands of parallel agent trajectories in virtual sandboxes, teams can identify the specific point where a flurry of prompts causes an LLM's context window to "clash," leading to the 30–40% drops in accuracy often seen under heavy organizational load.
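Here is one way to picture what such a gateway enforces. Prompts forwarded between agents can be metered with a classic token bucket, and the number of agent-to-agent hand-offs a single request may trigger can be capped. The sketch below is a generic illustration, not the API of any particular AI gateway. `MAX_HOPS`, the rates, and `forward_prompt` are hypothetical. The `cost` argument could represent prompt size, so larger prompts drain the budget faster.

```python
import time


class TokenBucket:
    """Token-bucket limiter: refills `rate` tokens per second, up to `capacity`."""

    def __init__(self, rate: float, capacity: float, clock=time.monotonic):
        self.rate, self.capacity, self.clock = rate, capacity, clock
        self.tokens = capacity
        self.last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill for the time elapsed since the last call, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


MAX_HOPS = 8  # hypothetical cap on hand-offs triggered by one user request


def forward_prompt(bucket: TokenBucket, hops: int, cost: float = 1.0) -> str:
    if hops >= MAX_HOPS:
        return "stopped: possible reasoning loop"
    if not bucket.allow(cost):
        return "throttled"
    return "forwarded"
```

During a load test, the counts of "throttled" and "stopped" outcomes are useful signals in their own right. A rising stop count under load points to agent chains that loop rather than converge. Passing a fake `clock` makes the limiter deterministic in tests.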

Popular Load Testing Tools

When selecting a load testing tool, teams often start with open-source options for flexibility and cost, then move to enterprise or cloud-managed solutions for scale, collaboration, and integrations.

Here are some of the most popular and widely used load testing tools in 2026:

- Open-source/free: Apache JMeter, k6 (Grafana), Gatling, Locust (Python-based).

- Enterprise: Harness, LoadRunner, NeoLoad, BlazeMeter, LoadNinja.

Choose based on scripting language, scale needs, and integration. For teams already invested in Locust or seeking to combine load testing with chaos engineering in CI/CD pipelines, platforms like Harness Resilience Testing provide seamless native support to elevate your testing strategy.

Load Testing Best Practices in 2026

As systems grow more distributed and user expectations continue to rise, load testing in 2026 is no longer optional; it’s a continuous discipline. Following the right best practices ensures that your application is not just fast, but also resilient and reliable under real-world conditions.

- Test early and often (shift-left) - Start load testing during the development phase instead of waiting until pre-release. This helps you catch performance issues early, when they are easier and cheaper to fix.

- Use realistic data and traffic models - Base your tests on actual production data, analytics, and user behavior patterns. This ensures your test scenarios closely reflect how users interact with your system in reality.

- Match production environments - Ensure your testing environment mirrors production as closely as possible in terms of configurations, data volume, and scaling policies. This improves the accuracy and reliability of your test results.

- Focus on user journeys - Instead of only testing raw request throughput, simulate complete user workflows like login, search, or checkout.

- Monitor golden signals - Track key performance indicators such as latency, traffic, error rates, and system saturation. These “golden signals” help you quickly identify and diagnose performance issues.

- Automate wisely - Keep smoke-level checks in CI to catch obvious regressions. Reserve heavier runs for staging or pre-production where you can mirror production closely.

- Combine with chaos engineering - Introduce controlled failures during load testing, such as network delays or service disruptions. This helps evaluate how well your system performs under both stress and failure conditions.
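The combination can be modeled in-process to show why it matters. In the sketch below, a latency fault is injected into a fraction of calls to a stand-in dependency while many concurrent checkouts run. It then measures how many checkouts hit their timeout. Everything here is hypothetical: `payment_authorize`, the 200 ms timeout, and the fault parameters. Real setups typically inject network latency at the infrastructure level with chaos tooling while a load tool generates the traffic.

```python
import asyncio
import random


async def payment_authorize():
    """Stand-in for a downstream dependency that normally answers in about 20 ms."""
    await asyncio.sleep(0.02)
    return "approved"


def with_injected_latency(call, delay_s, probability, rng):
    """Wrap an async dependency so that a fraction of calls are delayed (a latency fault)."""
    async def wrapped():
        if rng.random() < probability:
            await asyncio.sleep(delay_s)
        return await call()
    return wrapped


async def checkout(authorize, timeout_s):
    # System under test: checkout gives up if authorization exceeds its timeout.
    try:
        return await asyncio.wait_for(authorize(), timeout=timeout_s)
    except asyncio.TimeoutError:
        return "timeout"


async def load_run(users, authorize, timeout_s=0.2):
    """Run `users` concurrent checkouts and return the fraction that timed out."""
    results = await asyncio.gather(*(checkout(authorize, timeout_s) for _ in range(users)))
    return results.count("timeout") / users


faulty = with_injected_latency(payment_authorize, delay_s=0.5, probability=0.3,
                               rng=random.Random(7))
print(asyncio.run(load_run(200, faulty)))  # roughly 0.3 of checkouts time out
```

Without the fault, every checkout succeeds. With it, each delayed authorization becomes a failed checkout. This is exactly the kind of coupling that only appears when stress and failure are tested together.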

Adopting these best practices helps you move beyond basic performance testing toward building truly resilient systems. In 2026, it’s not just about handling traffic; it’s about thriving under pressure.

Conclusion

Load testing turns unknowns into knowns and panic into process. It isn't a "nice-to-have"; it's essential for delivering the fast, reliable digital experiences that customers (and your bottom line) demand.

By following this guide, you'll identify issues early, optimize performance, and build systems that scale confidently.

Ship faster, break less, and stay resilient.


Resilience Testing Is Non-Negotiable in the Enterprise SDLC

Modern software delivery has dramatically accelerated. AI-assisted development, automated CI/CD pipelines, and cloud-native architectures have made it possible for teams to deploy software dozens of times per day.

But speed alone does not guarantee reliability.

At Conf42 Site Reliability Engineering (SRE) 2026, Uma Mukkara, Head of Resilience Testing at Harness and co-creator of LitmusChaos, delivered a clear message: outages are inevitable. In modern distributed systems, assuming your design will always work is not just optimistic—it’s risky.

In fact, as Uma put it, failure in distributed systems is a mathematical certainty.

That’s why resilience testing must become a core, continuous practice in the Software Development Life Cycle (SDLC).

The Reality of Inevitable Outages

Even the most reliable cloud providers experience outages.

Uma illustrated this with examples that highlight how unpredictable failures can be:

- Physical disruption such as drone strikes affecting AWS Middle East data centers

- Policy or configuration errors that triggered cascading outages on cloud platforms like Azure

- Retry storms and load spikes where services collapse under unexpected demand

These incidents demonstrate an important reality: the types of failures constantly evolve.

A system validated during design may not be resilient against tomorrow’s failure scenarios. Architecture may stay the same, but the failure patterns surrounding it continuously change.

This is why resilience cannot rely on assumptions.

Hope is not a strategy—verification is.

For a deeper look at this broader approach to resilience, see how chaos engineering, load testing, and disaster recovery testing work together.

What Resilience Really Means

Resilience is often misunderstood as simply keeping systems online.

But uptime alone does not make a system resilient.

Uma defines resilience more precisely:

Resilience is the grace with which systems handle failure and return to an active state.

In practice, a resilient system must handle three categories of disruption:

1. System Failures

Pod crashes, node failures, infrastructure disruptions, or network faults.

2. Load Conditions

Traffic spikes or sudden demand that pushes systems to their limits.

3. Disasters

Regional outages, multi-AZ failures, or infrastructure loss that require recovery mechanisms.

If teams test only one of these dimensions, they leave significant risks undiscovered.

True resilience requires verifying how systems behave across all three scenarios.

Continuous Verification in the SDLC

One of the biggest challenges Uma highlighted is how organizations treat resilience.

Many teams still see it as a “day-two problem”—something SREs will handle after systems are deployed.

Others assume that once resilience has been validated during system design, the problem is solved.

In reality, resilience must be continuously verified.

As systems evolve with each release, so do their failure modes. The most effective strategy is to:

- Test resilience continuously

- Verify resilience with every delivery

- Document results across releases

This approach shifts resilience testing into the outer loop of the SDLC, alongside functional and performance testing.

Instead of waiting for production incidents, teams proactively identify weaknesses before customers experience them.

Understanding Resilience Debt

Uma introduced an important concept: resilience debt.

Resilience debt is similar to technical debt. When teams postpone resilience validation, they leave hidden risks unresolved in the system.

Over time, that debt accumulates.

And when failure eventually occurs—which it inevitably will—the business impact grows proportionally to the resilience debt that was ignored.

The only way to reduce this risk is to steadily increase resilience testing coverage over time.

As testing matures across multiple quarters, organizations gain better feedback about system behavior, uncover more risks earlier, and continuously reduce the likelihood of severe outages.
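The session does not prescribe a formula for resilience debt. The sketch below shows one hypothetical way to make it visible. For each release, it compares an inventory of known failure scenarios with the scenarios actually exercised by tests. Coverage is the verified share, and "debt" is whatever remains unverified. The scenario names and the definition itself are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Release:
    name: str
    known_risks: set  # failure scenarios identified for the system (hypothetical inventory)
    verified: set     # scenarios actually exercised by resilience tests for this release


def debt_report(releases):
    """Per release: (name, share of known risks verified, unverified scenarios)."""
    rows = []
    for r in releases:
        covered = r.known_risks & r.verified
        coverage = len(covered) / len(r.known_risks) if r.known_risks else 1.0
        rows.append((r.name, round(coverage, 2), sorted(r.known_risks - covered)))
    return rows


risks = {"pod-crash", "az-outage", "db-failover", "traffic-spike"}
for row in debt_report([
    Release("Q1", risks, {"pod-crash"}),
    Release("Q2", risks, {"pod-crash", "traffic-spike", "db-failover"}),
]):
    print(row)
```

Tracked over several quarters, the unverified list shows exactly where the business is still relying on hope rather than verification.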

A Holistic Approach to Resilience Testing

Another key takeaway from Uma’s session is that resilience testing should not happen in silos.

Many organizations treat chaos testing, load testing, and disaster recovery validation as separate initiatives owned by different teams.

But the most meaningful risks often appear when these scenarios intersect.

For example:

- A resource bottleneck might only appear when high traffic coincides with a service failure.

- Chaos experiments developed for reliability testing can also be reused in disaster recovery workflows.

- Combining chaos and load tests helps teams observe system behavior at failure limits under real-world conditions.
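Back-of-the-envelope capacity arithmetic shows why the first example happens. Assume a hypothetical fleet of 10 instances, each serving 100 requests/s, with a target of staying under 80% utilization. Normal traffic of 500 requests/s survives the loss of an instance. So does a peak of 760 requests/s with all instances healthy. A peak of 760 requests/s combined with a single failure does not, because 9 × 100 × 0.8 = 720. All numbers, and the linear capacity model itself, are simplifying assumptions. Real systems degrade nonlinearly, so use this arithmetic to choose which combined experiments to run, not as a substitute for running them.

```python
import math


def survives(instances: int, per_instance_rps: float, peak_rps: float,
             failed: int, max_util: float = 0.8) -> bool:
    """True if the remaining instances can absorb peak traffic below a utilization ceiling."""
    remaining = instances - failed
    return remaining > 0 and peak_rps <= remaining * per_instance_rps * max_util


def min_instances(per_instance_rps: float, peak_rps: float,
                  tolerated_failures: int, max_util: float = 0.8) -> int:
    """Smallest fleet that still survives `tolerated_failures` lost instances at peak."""
    return math.ceil(peak_rps / (per_instance_rps * max_util)) + tolerated_failures


print(survives(10, 100, 500, failed=1))             # True: normal traffic, one failure
print(survives(10, 100, 760, failed=0))             # True: peak traffic, no failure
print(survives(10, 100, 760, failed=1))             # False: peak traffic plus one failure
print(min_instances(100, 760, tolerated_failures=1))  # 11
```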

That’s why resilience testing must be approached as a holistic practice combining:

- Chaos Engineering

- Load Testing

- Disaster Recovery (DR) Validation

You can explore the fundamentals of resilience testing in the Harness documentation.

Collaboration Across Teams

Resilience testing also requires collaboration across multiple roles.

Developers, QA engineers, SREs, and platform teams all contribute to validating system reliability.

Uma pointed out that many organizations already share infrastructure for testing but run different experiments independently. By coordinating these efforts, teams can:

- reuse testing environments

- share chaos experiments across testing scenarios

- validate DR workflows more frequently

- improve testing efficiency across teams

Resilience becomes significantly stronger when personas, environments, and test assets are shared rather than siloed.

The Role of AI in Resilience Testing

As systems become more complex, another challenge emerges: knowing what to test and when.

Large organizations may have hundreds of potential experiments, making it difficult to prioritize testing effectively.

Uma described how agentic AI systems can help address this challenge.

By analyzing internal knowledge sources such as:

- incident data

- CI/CD pipeline history

- infrastructure configuration

- operational documentation

AI systems can recommend:

- the most relevant chaos experiments

- appropriate load testing scenarios

- disaster recovery tests that should run at a given time

These recommendations allow teams to run the right tests at the right moment, improving resilience coverage without overwhelming engineering teams.

A Unified Platform for Resilience Testing

To support this holistic approach, Harness has expanded its original Chaos Engineering capabilities into a broader platform: Harness Resilience Testing.

The platform integrates multiple testing disciplines in a single environment, enabling teams to:

- design chaos experiments

- run load tests

- validate disaster recovery workflows

- observe system risk patterns in one place

By combining these capabilities, teams gain a single pane of glass for identifying resilience risks across the SDLC.

This unified view allows organizations to track trends in system reliability and proactively address weaknesses before they turn into production incidents.

Resilience Is a Core Practice for Modern SRE Teams

Uma closed the session with a clear conclusion: resilience testing is not optional.

Outages will happen. Infrastructure will fail. Traffic patterns will change. Dependencies will break.

What matters is whether organizations have continuously validated how their systems behave when those failures occur.

The more resilience testing coverage teams build over time, the more feedback they receive—and the lower the potential business impact becomes.

In modern software delivery, resilience is no longer just a reliability practice.

It is a core discipline of the enterprise SDLC.

Ready to start validating your system’s resilience?

Explore Harness Resilience Testing and start validating reliability across your SDLC.

From Chaos Engineering to Resilience Testing: Why We’re Expanding How Teams Validate Reliability

At Harness, we’re committed to helping teams build and deliver software that doesn’t just work – it thrives under pressure, scales reliably, and recovers swiftly from the unexpected. Today, we’re taking the next step in that mission by evolving our Chaos Engineering module into Resilience Testing.

This evolution reflects how reliability is tested in practice today. While Chaos Engineering has long been a powerful way to proactively identify weaknesses through controlled fault injection, many teams – SREs, platform engineers, performance specialists, and DevOps leaders – are already validating resilience across the same workflows:

- How systems behave when dependencies fail

- How services perform under sustained load

- How infrastructure and applications recover during real outages

Resilience Testing brings these efforts together into a single, continuous approach.

Built On Open Source and Real Systems

My work in Chaos Engineering started with a simple goal: make resilience testing practical for real-world systems. Before that, I spent years building foundational cloud-native infrastructure at places like CloudByte and MayaData, and I kept coming back to the same lesson: you learn fastest when you build in the open and stay close to production users.

Before joining Harness, my team and I created LitmusChaos to help teams running Kubernetes understand how their systems actually behave under failure. What began as an open source project grew into one of the most widely adopted chaos engineering projects in the CNCF, used by organizations testing real production environments.

When Harness acquired Chaos Native in 2022, it was clear we shared the same belief: chaos engineering shouldn’t be a standalone activity. It belongs inside the software delivery lifecycle. We then donated LitmusChaos to the CNCF, and Harness continues to actively maintain and contribute to the project today.

That combination of open source leadership and enterprise integration has directly shaped how chaos engineering evolved inside Harness.

How Chaos Engineering Expanded in Practice

Over the past four years, teams using Chaos Engineering pushed beyond isolated experiments toward broader resilience workflows.

What mattered most wasn’t injecting failures – it was understanding what to test, when to test, and how to learn continuously. That led to deeper capabilities around service and dependency discovery, targeted risk testing, monitoring-driven validation, automated gamedays, and AI-assisted recommendations.

As software delivery has become more automated and increasingly AI-assisted, these same principles naturally extended beyond chaos engineering alone.

Introducing Resilience Testing

Today, we’re launching Resilience Testing, with new Load Testing and Disaster Recovery Testing capabilities built on top of our Chaos Engineering foundation.

Resilience Testing brings together three core areas:

- Chaos Engineering to validate failure handling and recovery

- Load Testing to understand behavior under scale and stress

- Disaster Recovery Testing to prove readiness for real outages

These capabilities are unified through automation and AI-driven insights, helping teams prioritize risk, improve coverage, and continuously validate resilience as systems evolve.

Chaos Engineering gave us a strong foundation, and Resilience Testing is the broader practice teams have been building toward as systems and workflows evolve.

A Milestone Shaped By Community

This evolution follows years of collaboration with the broader resilience engineering community, including Chaos Carnival, now in its sixth year, which brings together thousands of engineers sharing real lessons from production systems.

As systems grow more dynamic and AI-driven, resilience testing must move beyond periodic checks toward continuous, intelligent validation. Resilience Testing is designed for that reality, and it reflects what we’ve learned building, operating, and scaling real systems over time.

Ready to expand beyond chaos experiments? Talk to your Harness representative to enable the new capabilities, or book a demo with our team to explore the right rollout for your environment.

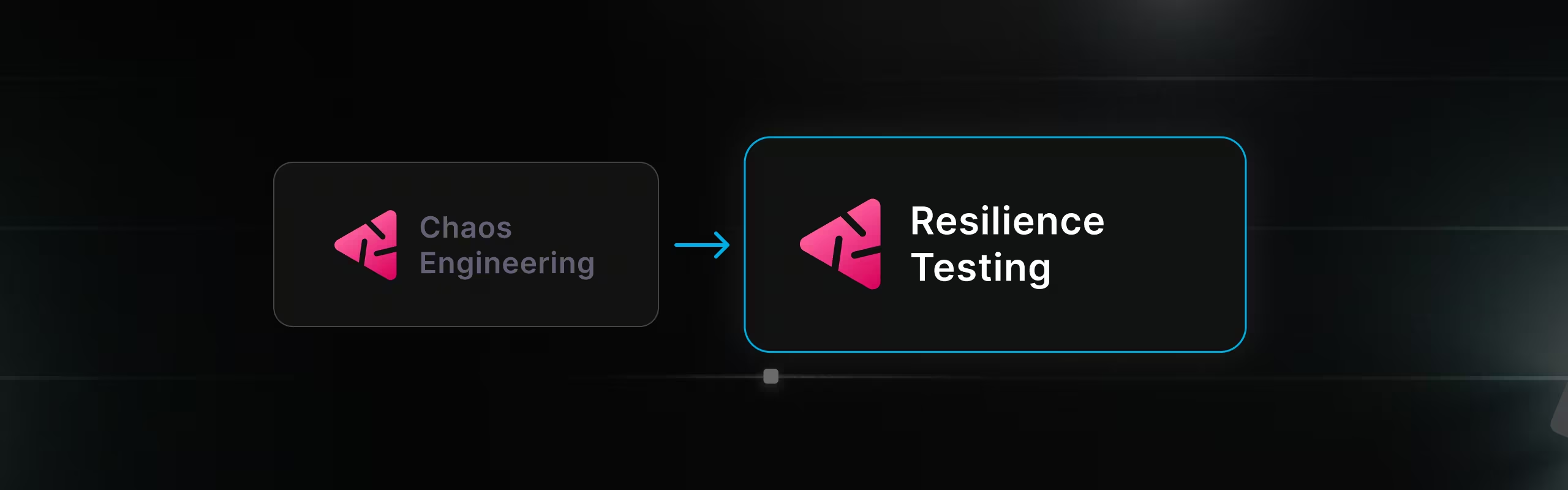

Theory to Turbulence: Building a Developer-Friendly E2E Testing Framework for Chaos Platform

As an enterprise chaos engineering platform vendor, validating chaos faults is not optional — it’s foundational. Every fault we ship must behave predictably, fail safely, and produce measurable impact across real-world environments.

When we began building our end-to-end (E2E) testing framework, we quickly ran into a familiar problem: the barrier to entry was painfully high.

Running even a single test required a long and fragile setup process:

- Installing multiple dependencies by hand

- Configuring a maze of environment variables

- Writing YAML-based chaos experiments manually

- Debugging cryptic validation failures

- Only then… executing the first test

This approach slowed feedback loops, discouraged adoption, and made iterative testing expensive — exactly the opposite of what chaos engineering should enable.

The Solution: A Simplified Chaos Fault Validation Framework

To solve this, we built a comprehensive yet developer-friendly E2E testing framework for chaos fault validation. The goal was simple: reduce setup friction without sacrificing control or correctness.

The result is a framework that offers:

- An API-driven execution model instead of manual YAML wiring

- Real-time log streaming for faster debugging and observability

- Intelligent target discovery to eliminate repetitive configuration

- Dual-phase validation to verify both fault injection and system impact

What previously took 30 minutes (or more) to set up and run can now be executed in under 5 minutes — consistently and at scale.

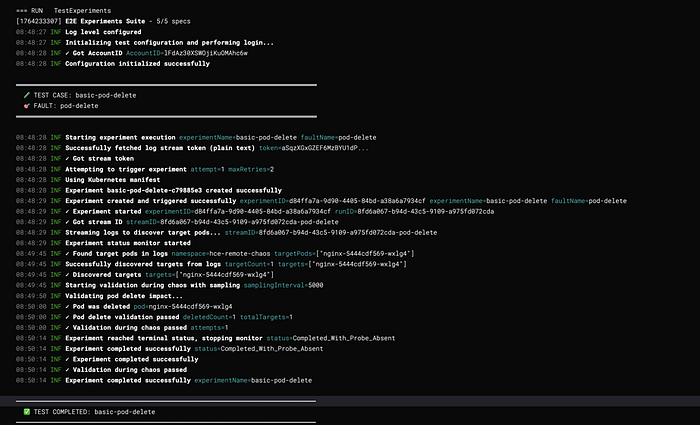

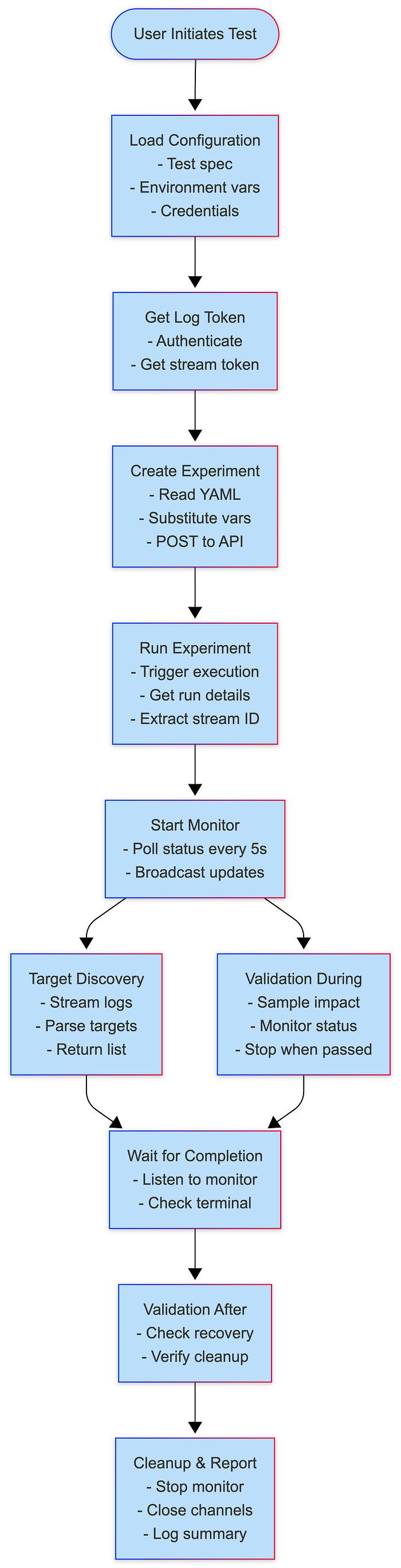

System Architecture

High-Level Architecture

Layer Responsibilities

Core Components

1. Experiment Runner

Purpose: Orchestrates the complete chaos experiment lifecycle from creation to validation.

Key Responsibilities:

- Experiment creation with variable substitution

- Log streaming and target discovery

- Concurrent validation management

- Status monitoring and completion detection

- Error handling and retry logic

Architecture Pattern: Template Method + Observer

type ExperimentRunner struct {

identifiers utils.Identifiers

config ExperimentConfig

}

type ExperimentConfig struct {

Name string

FaultName string

ExperimentYAML string

InfraID string

InfraType string

TargetNamespace string

TargetLabel string

TargetKind string

FaultEnv map[string]string

Timeout time.Duration

SkipTargetDiscovery bool

ValidationDuringChaos ValidationFunc

ValidationAfterChaos ValidationFunc

SamplingInterval time.Duration

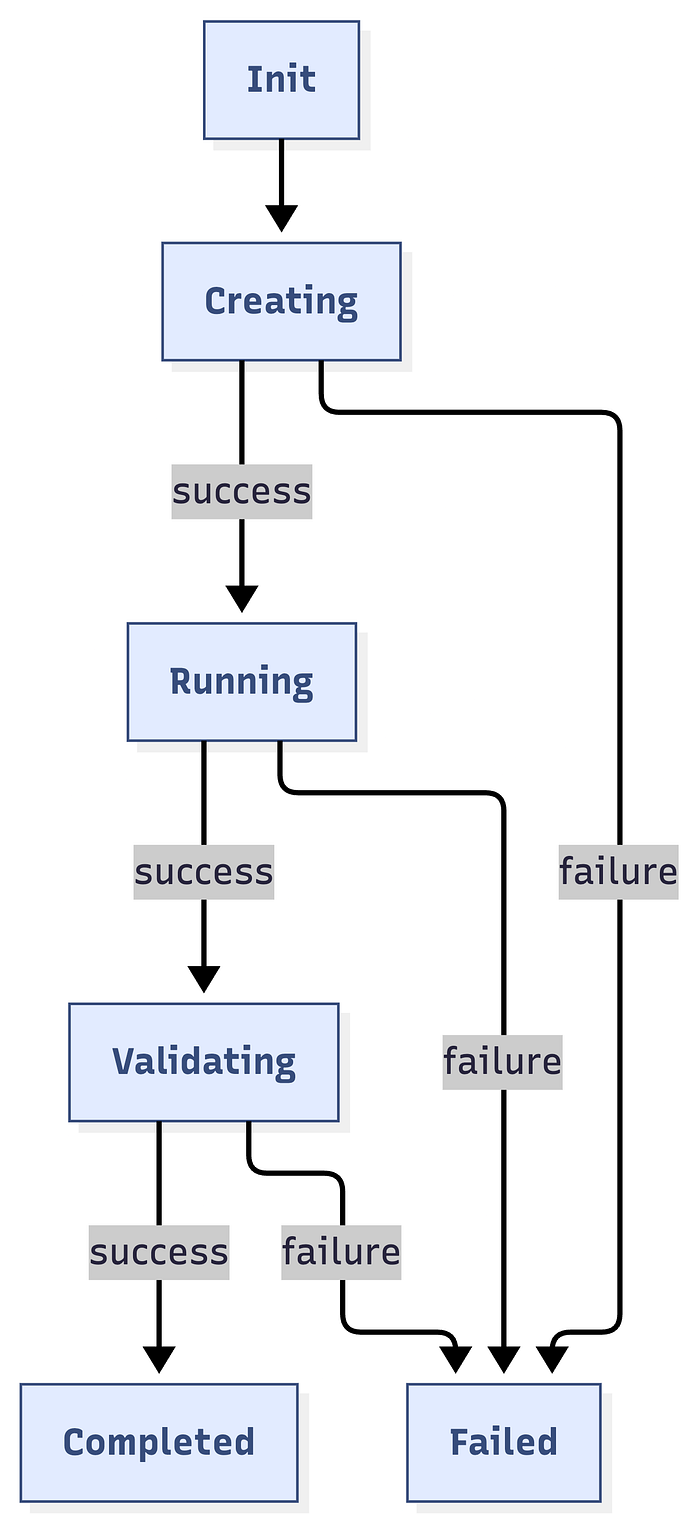

}Execution Flow:

Run() →

1. getLogToken()

2. triggerExperimentWithRetry()

3. Start experimentMonitor

4. extractStreamID()

5. getTargetsFromLogs()

6. runValidationDuringChaos() [parallel]

7. waitForCompletion()

8. Validate ValidationAfterChaos

2. Experiment Monitor

Purpose: Centralized experiment status tracking using a publish-subscribe pattern.

Architecture Pattern: Observer Pattern

type experimentMonitor struct {
	experimentID string
	runResp      *experiments.ExperimentRunResponse
	identifiers  utils.Identifiers
	stopChan     chan bool
	statusChan   chan string
	subscribers  []chan string
}

Key Methods:

- start(): Begin monitoring (goroutine)

- subscribe(): Create a subscriber channel

- broadcast(status): Notify all subscribers

- stop(): Signal monitoring to stop

Benefits:

- 80% reduction in API calls

- 92% faster failure detection

- Single source of truth

- Easy to add new consumers

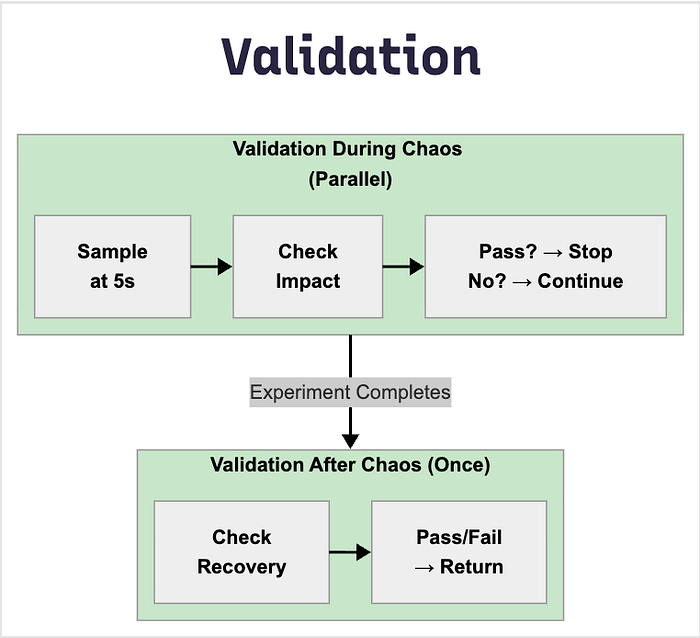

3. Validation Framework

Purpose: Dual-phase validation system for concrete chaos impact verification.

ValidationDuringChaos

- Runs in parallel during the experiment

- Continuous sampling at configurable intervals

- Stops when validation passes

- Use case: Verify active fault impact

ValidationAfterChaos

- Runs once after the experiment completes

- Single execution for final state

- Use case: Verify recovery and cleanup

Function Signature:

type ValidationFunc func(targets []string, namespace string) (bool, error)
// Returns: (passed bool, error)

Sample Validation Categories:

Experiment Execution Engine

Execution Phases

Phase 1: Setup

├─ Load configuration

├─ Authenticate with API

└─ Validate environment

Phase 2: Preparation

├─ Get log stream token

├─ Resolve experiment YAML path

├─ Substitute template variables

└─ Create experiment via API

Phase 3: Execution

├─ Trigger experiment run

├─ Start status monitor

├─ Extract stream ID

└─ Discover targets from logs

Phase 4: Validation (Concurrent)

├─ Validation During Chaos (parallel)

│ ├─ Sample at intervals

│ ├─ Check fault impact

│ └─ Stop when passed/completed

└─ Wait for completion

Phase 5: Post-Validation

├─ Validation After Chaos

├─ Check recovery

└─ Final assertions

Phase 6: Cleanup

├─ Stop monitor

├─ Close channels

└─ Log results

State Machine

Concurrency Model

Main Thread:

├─ Create experiment

├─ Start monitor goroutine

├─ Start target discovery goroutine

├─ Start validation goroutine [if provided]

└─ Wait for completion

Monitor Goroutine:

├─ Poll status every 5s

├─ Broadcast to subscribers

└─ Stop on terminal status

Target Discovery Goroutine:

├─ Subscribe to monitor

├─ Poll for targets every 5s

├─ Listen for failures

└─ Return when found or failed

Validation Goroutine:

├─ Subscribe to monitor

├─ Run validation at intervals

├─ Listen for completion

└─ Stop when passed or completed

API Integration Layer

API Client Architecture

Variable Substitution System

Template Format: {{ VARIABLE_NAME }}

Built-in Variables:

INFRA_NAMESPACE // Infrastructure namespace

FAULT_INFRA_ID // Infrastructure ID (without env prefix)

EXPERIMENT_INFRA_ID // Full infrastructure ID (env/infra)

TARGET_WORKLOAD_KIND // deployment, statefulset, daemonset

TARGET_WORKLOAD_NAMESPACE // Target namespace

TARGET_WORKLOAD_NAMES // Specific workload names (or empty)

TARGET_WORKLOAD_LABELS // Label selector

EXPERIMENT_NAME // Experiment name

FAULT_NAME // Fault type

TOTAL_CHAOS_DURATION // Duration in seconds

CHAOS_INTERVAL // Interval between chaos actions

ADDITIONAL_ENV_VARS // Fault-specific environment variables

Custom Variables: Passed via FaultEnv map in ExperimentConfig.
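A substitution pass over the {{ VARIABLE_NAME }} placeholders can be sketched with Go's regexp package. The function name, the tolerance for optional spaces inside the braces, the rule that FaultEnv entries override built-ins, and reporting unresolved names instead of leaving them silently in the YAML are all assumptions, not confirmed behavior of the framework.

```go
package main

import (
	"fmt"
	"regexp"
)

// placeholder matches {{ VARIABLE_NAME }}, with or without inner spaces.
var placeholder = regexp.MustCompile(`\{\{\s*([A-Z0-9_]+)\s*\}\}`)

// substituteVars replaces each known placeholder with its value and returns
// the names it could not resolve, so a typo can fail the test early.
func substituteVars(tmpl string, vars map[string]string) (string, []string) {
	var missing []string
	out := placeholder.ReplaceAllStringFunc(tmpl, func(m string) string {
		name := placeholder.FindStringSubmatch(m)[1]
		if v, ok := vars[name]; ok {
			return v
		}
		missing = append(missing, name)
		return m // leave unresolved placeholders untouched
	})
	return out, missing
}

func main() {
	tmpl := "namespace: {{ TARGET_WORKLOAD_NAMESPACE }}\nlabels: {{TARGET_WORKLOAD_LABELS}}\nduration: {{ TOTAL_CHAOS_DURATION }}"
	vars := map[string]string{
		"TARGET_WORKLOAD_NAMESPACE": "default",
		"TARGET_WORKLOAD_LABELS":    "app=nginx",
	}
	// Assumption: fault-specific values (FaultEnv) are layered over the built-ins.
	faultEnv := map[string]string{"TOTAL_CHAOS_DURATION": "60"}
	for k, v := range faultEnv {
		vars[k] = v
	}
	out, missing := substituteVars(tmpl, vars)
	fmt.Println(out)
	fmt.Println("missing:", missing) // missing: []
}
```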

Validation Framework

Architecture

Validation Categories

1. Resource Validators

ValidatePodCPUStress(targets, namespace) (bool, error)

ValidatePodMemoryStress(targets, namespace) (bool, error)

ValidateDiskFill(targets, namespace) (bool, error)

ValidateIOStress(targets, namespace) (bool, error)

Detection Logic:

- CPU: Usage > baseline + 30%

- Memory: Usage > baseline + 20%

- Disk: Usage > 80%

- I/O: Read/write operations elevated
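The "baseline + N%" rules can be read two ways: as a relative increase (baseline × 1.3 for CPU) or as an absolute increase in percentage points. The sketch below assumes the relative reading; exceedsBaseline is a hypothetical helper, and the sample values are made up for illustration.

```go
package main

import "fmt"

// exceedsBaseline reports whether a sampled value is more than pct percent
// above its pre-chaos baseline. Assumption: relative increase, i.e.
// sample > baseline * (1 + pct/100), not percentage points.
func exceedsBaseline(sample, baseline, pct float64) bool {
	return sample > baseline*(1+pct/100)
}

func main() {
	// CPU in millicores: baseline 200m gives a threshold of about 260m.
	fmt.Println(exceedsBaseline(250, 200, 30)) // false
	fmt.Println(exceedsBaseline(900, 200, 30)) // true
	// Memory in MiB: baseline 512 gives a threshold of about 614.4.
	fmt.Println(exceedsBaseline(700, 512, 20)) // true
	// Disk uses an absolute utilization threshold rather than a baseline.
	used, capacity := 85.0, 100.0
	fmt.Println(used/capacity*100 > 80) // true
}
```

Capturing the baseline before the fault starts matters: without it, an already-busy pod could "pass" CPU validation without the fault having any effect.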

2. Network Validators

ValidateNetworkLatency(targets, namespace) (bool, error)

ValidateNetworkLoss(targets, namespace) (bool, error)

ValidateNetworkCorruption(targets, namespace) (bool, error)

Detection Methods:

- Ping latency measurements

- Packet loss percentage

- Checksum errors

3. Pod Lifecycle Validators

ValidatePodDelete(targets, namespace) (bool, error)

ValidatePodRestarted(targets, namespace) (bool, error)

ValidatePodsRunning(targets, namespace) (bool, error)

Verification:

- Pod age comparison

- Restart count increase

- Ready status check

4. Application Validators

ValidateAPIBlock(targets, namespace) (bool, error)

ValidateAPILatency(targets, namespace) (bool, error)

ValidateAPIStatusCode(targets, namespace) (bool, error)

ValidateFunctionError(targets, namespace) (bool, error)

5. Redis Validators

ValidateRedisCacheLimit(targets, namespace) (bool, error)

ValidateRedisCachePenetration(targets, namespace) (bool, error)

ValidateRedisCacheExpire(targets, namespace) (bool, error)

Direct Validation: Executes redis-cli INFO inside the pod and parses the returned metrics.
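redis-cli INFO prints "# Section" header lines followed by field:value lines, so parsing it takes only a few lines of Go. Which fields each validator reads is not specified above; the sketch assumes, for illustration, that a cache-penetration check compares keyspace_misses before and during chaos, and the threshold of 100 is arbitrary.

```go
package main

import (
	"bufio"
	"fmt"
	"strconv"
	"strings"
)

// parseRedisInfo turns INFO output into a flat field -> value map,
// skipping blank lines and "# Section" headers.
func parseRedisInfo(raw string) map[string]string {
	fields := make(map[string]string)
	sc := bufio.NewScanner(strings.NewReader(raw)) // ScanLines drops "\r\n" endings
	for sc.Scan() {
		line := strings.TrimSpace(sc.Text())
		if line == "" || strings.HasPrefix(line, "#") {
			continue
		}
		if k, v, ok := strings.Cut(line, ":"); ok {
			fields[k] = v
		}
	}
	return fields
}

// intField reads a numeric field, reporting whether it was present and valid.
func intField(fields map[string]string, key string) (int64, bool) {
	n, err := strconv.ParseInt(fields[key], 10, 64)
	return n, err == nil
}

func main() {
	before := parseRedisInfo("# Stats\r\nkeyspace_misses:10\r\nexpired_keys:3\r\n")
	during := parseRedisInfo("# Stats\r\nkeyspace_misses:950\r\nexpired_keys:3\r\n")
	b, _ := intField(before, "keyspace_misses")
	d, _ := intField(during, "keyspace_misses")
	// Hypothetical penetration check: misses should climb sharply under chaos.
	fmt.Println(d-b > 100) // true
}
```

Splitting on the first colon only keeps values such as "db0:keys=5,expires=1,avg_ttl=0" from the Keyspace section intact.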

Validation Best Practices

Data Flow & Lifecycle

Complete Experiment Lifecycle

Data Structures Flow

// Input

ExperimentConfig

↓

// API Creation

ExperimentPayload (JSON)

↓

// API Response

ExperimentResponse {ExperimentID, Name}

↓

// Run Request

ExperimentRunRequest {NotifyID}

↓

// Run Response

ExperimentRunResponse {ExperimentRunID, Status, Nodes}

↓

// Log Streaming

StreamToken + StreamID

↓

// Target Discovery

[]string (target pod names)

↓

// Validation

ValidationFunc(targets, namespace) → (bool, error)

↓

// Final Result

Test Pass/Fail with error details

Performance & Scalability

Performance Metrics

Concurrent Test Execution

- Each test gets an isolated namespace

- Separate experiment instances

- No shared state between tests

- Parallel execution supported

Example Usage of the Framework

RunExperiment(ExperimentConfig{
	Name:            "CPU Stress Test",
	FaultName:       "pod-cpu-hog",
	InfraID:         infraID,
	ProjectID:       projectId,
	TargetNamespace: targetNamespace,
	TargetLabel:     "app=nginx", // Customize based on your test app
	TargetKind:      "deployment",
	FaultEnv: map[string]string{
		"CPU_CORES":            "1",
		"TOTAL_CHAOS_DURATION": "60",
		"PODS_AFFECTED_PERC":   "100",
		"RAMP_TIME":            "0",
	},
	Timeout:          timeout,
	SamplingInterval: 5 * time.Second, // Check every 5 seconds during chaos

	// Verify CPU is stressed during chaos
	ValidationDuringChaos: func(targets []string, namespace string) (bool, error) {
		clientset, err := faultcommon.GetKubeClient()
		if err != nil {
			return false, err
		}
		return validations.ValidatePodCPUStress(clientset, targets, namespace)
	},

	// Verify pods recovered after chaos
	ValidationAfterChaos: func(targets []string, namespace string) (bool, error) {
		clientset, err := faultcommon.GetKubeClient()
		if err != nil {
			return false, err
		}
		return validations.ValidateTargetAppsHealthy(clientset, targets, namespace)
	},
})

Knowledge Sharing and Learning